Beyond the Search Bar: How LLMs are Revolutionising Retail Discovery

For decades, the “search bar” has been the gateway to online shopping. We’ve all been trained to speak “computer”—typing fragmented phrases like “blue denim jacket slim fit” and hoping the algorithm understands us.

But that era is ending. With the rise of Large Language Models (LLMs), retail apps and websites are moving away from rigid search bars and toward intelligent shopping assistants. Here is how LLMs are beginning to replace traditional search and why it matters for the future of retail.

1. From Keyword Matching to Semantic Understanding

Traditional search engines look for specific words. If you type “crimson shoes” but the product description says “red sneakers,” you might get zero results.

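LLM-powered search is semantic: it matches on the intent and context behind your words rather than on the exact terms, typically by comparing numerical representations (embeddings) of the query and the products. Such a system can infer that “something warm for a London winter” implies heavy coats, thermal wear, and waterproof boots, even if those specific words aren’t in your query.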
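To make the “crimson shoes” versus “red sneakers” case concrete, here is a minimal sketch of similarity-based matching. The three-dimensional vectors are hand-written stand-ins (an assumption for illustration); a real system would obtain much larger vectors from an embedding model, and the names `PRODUCT_VECTORS` and `semantic_search` are hypothetical.

```python
import math

# Toy 3-dimensional "embeddings", hand-written for illustration only.
# In practice these would come from an embedding model.
PRODUCT_VECTORS = {
    "red sneakers": [0.9, 0.8, 0.1],
    "blue denim jacket": [0.1, 0.2, 0.9],
    "scarlet running shoes": [0.85, 0.9, 0.15],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_search(query_vector, top_k=2):
    """Rank products by similarity to the query vector, best first."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in PRODUCT_VECTORS.items()
    ]
    scored.sort(reverse=True)
    return [name for _, name in scored[:top_k]]


# Hypothetical embedding of the query "crimson shoes".
CRIMSON_SHOES = [0.88, 0.82, 0.12]
print(semantic_search(CRIMSON_SHOES))
```

The query shares no keyword with “red sneakers”, yet both shoe products rank above the jacket because their vectors point in nearly the same direction.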

2. The Power of Natural Language Conversations

The biggest shift is the move toward natural dialogue. Instead of adjusting filters (size, colour, price) manually, users can now type complex, multi-layered requests:

“I’m going to a beach wedding in Goa next month. Find me a breathable linen outfit under ₹5,000 that looks semi-formal.”

A traditional search bar would choke on this. An LLM-powered retail app processes the location (Goa = tropical), the occasion (Wedding = formal/stylish), the material (Linen), and the budget constraint all at once to provide a curated list.
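One way to picture this is as a two-step pipeline: the model turns the free-text request into structured constraints, and ordinary code then filters the catalogue. The sketch below assumes that design. `extract_intent` is a hard-coded stand-in for the LLM call, and the `Product` fields and sample catalogue are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    material: str
    formality: str
    price_inr: int


@dataclass
class ShoppingIntent:
    material: str
    formality: str
    max_price_inr: int


def extract_intent(request: str) -> ShoppingIntent:
    """Stand-in for an LLM call that parses the request.

    Hard-coded here (assumption); a real assistant would ask the model
    to return these fields as structured output.
    """
    return ShoppingIntent(material="linen", formality="semi-formal", max_price_inr=5000)


def curate(catalogue, intent):
    """Keep products that satisfy every constraint, cheapest first."""
    matches = [
        p for p in catalogue
        if p.material == intent.material
        and p.formality == intent.formality
        and p.price_inr <= intent.max_price_inr
    ]
    return sorted(matches, key=lambda p: p.price_inr)


# Hypothetical catalogue entries.
CATALOGUE = [
    Product("Linen kurta set", "linen", "semi-formal", 4200),
    Product("Linen blazer", "linen", "semi-formal", 7500),
    Product("Cotton beach shirt", "cotton", "casual", 1500),
    Product("Linen shirt and trousers", "linen", "semi-formal", 3800),
]

request = "Find me a breathable linen outfit under 5,000 rupees that looks semi-formal."
for product in curate(CATALOGUE, extract_intent(request)):
    print(product.name, product.price_inr)
```

Keeping the filtering in plain code means the budget and material limits are enforced exactly, whatever the model says.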

3. Hyper-Personalisation at Scale

Standard search bars treat every user the same. LLMs can integrate with a user’s past behaviour, style preferences, and even their local weather to provide personalised recommendations.

- Contextual Awareness: If a user in Delhi searches for “moisturizer” in June vs. December, the LLM can prioritise lightweight gels in summer and heavy creams in winter.

- Style Profiles: The AI learns if you prefer “minimalist” or “bohemian” styles, filtering the entire catalogue through your unique “vibe” without you ever clicking a checkbox.
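The moisturiser example can be sketched as a simple re-ranking step. This is only an illustration: the month-to-season mapping is a rough assumption for Delhi, and the `texture` field and product names are made up.

```python
# Assumption: treat April to September as "summer" for this illustration.
SUMMER_MONTHS = {4, 5, 6, 7, 8, 9}


def rank_moisturisers(products, month):
    """Move the seasonally preferred texture to the top of the results.

    sorted() is stable, so products keep their original relative order
    within the preferred and non-preferred groups.
    """
    preferred = "gel" if month in SUMMER_MONTHS else "cream"
    return sorted(products, key=lambda p: p["texture"] != preferred)


# Hypothetical search results for the query "moisturizer".
RESULTS = [
    {"name": "Rich Night Cream", "texture": "cream"},
    {"name": "Aqua Gel", "texture": "gel"},
    {"name": "Daily Lotion", "texture": "lotion"},
]

print([p["name"] for p in rank_moisturisers(RESULTS, month=6)])
print([p["name"] for p in rank_moisturisers(RESULTS, month=12)])
```

The same query returns the same products in both months; only the order changes with the context.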

4. Reducing “No Results Found” Frustration

The “Zero Results” page is a conversion killer. LLM-powered assistants can sharply reduce how often shoppers hit it. If a specific product is out of stock, the AI doesn’t just show a blank page; it says the item is unavailable and suggests the next best thing.

- Example: “We don’t have the XYZ Blender in stock, but here are three alternatives with the same 750W motor and a 2-year warranty that our customers love.”
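A fallback like the blender example can be approximated with attribute matching before the assistant writes its reply. The sketch below is an assumption about how that might work; the field names (`watts`, `warranty_years`, `in_stock`) and catalogue entries are hypothetical.

```python
def find_alternatives(catalogue, wanted_name, limit=3):
    """Return in-stock products with the same wattage and at least the same warranty."""
    wanted = next((p for p in catalogue if p["name"] == wanted_name), None)
    if wanted is None:
        return []  # unknown product: nothing to compare against
    if wanted["in_stock"]:
        return [wanted]  # no fallback needed
    alternatives = [
        p for p in catalogue
        if p["in_stock"]
        and p["name"] != wanted_name
        and p["watts"] == wanted["watts"]
        and p["warranty_years"] >= wanted["warranty_years"]
    ]
    return alternatives[:limit]


# Hypothetical catalogue.
BLENDERS = [
    {"name": "XYZ Blender", "watts": 750, "warranty_years": 2, "in_stock": False},
    {"name": "Blender A", "watts": 750, "warranty_years": 2, "in_stock": True},
    {"name": "Blender B", "watts": 750, "warranty_years": 3, "in_stock": True},
    {"name": "Blender C", "watts": 500, "warranty_years": 2, "in_stock": True},
    {"name": "Blender D", "watts": 750, "warranty_years": 2, "in_stock": False},
]

print([p["name"] for p in find_alternatives(BLENDERS, "XYZ Blender")])
```

The assistant can then phrase the reply around whatever this step returns, so the alternatives it names are real, in-stock items.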

5. Multimodal Search (Text + Image + Context)

Modern retail apps are using LLMs to bridge the gap between text and visuals. Users can upload a screenshot from Instagram and ask, “Find me something with a similar pattern but in a cotton fabric.” A multimodal model analyses the image features and the text constraints together to find a match.

Why Retailers are Making the Switch

For businesses, this isn’t just a “cool feature”—it’s a massive ROI driver:

- Higher Conversion Rates: When users find exactly what they need faster, they buy more.

- Lower Bounce Rates: Conversational AI keeps users engaged on the app longer.

- Better Data Insights: Retailers can see exactly what customers are asking for in their own words, providing better “voice of customer” data than simple keywords ever could.

The Road Ahead: Challenges to Overcome

While LLMs are the future, they aren’t perfect yet. Retailers must manage AI hallucinations (making up products that don’t exist) and ensure the latency (speed of response) is fast enough to keep a shopper’s attention.
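One common guard against hallucinated products is to check every item the model suggests against the real catalogue before showing it. The sketch below assumes the model returns a list of SKU strings; the SKU values and the function name `ground_recommendations` are hypothetical.

```python
# Hypothetical set of SKUs that actually exist in the catalogue.
CATALOGUE_SKUS = {"SKU-101", "SKU-102", "SKU-103"}


def ground_recommendations(suggested_skus):
    """Split model suggestions into real products and rejected ones.

    Only SKUs present in the catalogue are shown to the shopper; the
    rejected list can be logged to monitor how often the model invents items.
    """
    grounded = [sku for sku in suggested_skus if sku in CATALOGUE_SKUS]
    rejected = [sku for sku in suggested_skus if sku not in CATALOGUE_SKUS]
    return grounded, rejected


# Pretend model output containing one invented SKU.
model_output = ["SKU-102", "SKU-999", "SKU-101"]
grounded, rejected = ground_recommendations(model_output)
print("show:", grounded)
print("drop:", rejected)
```

A set lookup like this adds very little to response time, so it does not work against the latency concern.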

Conclusion

The search bar isn’t just getting an upgrade; it’s being replaced by a digital concierge. In 2026, the brands that win won’t be the ones with the most products, but the ones that understand their customers’ needs through the power of language.

4 responses to “Beyond the Search Bar: How LLMs are Revolutionising Retail Discovery”

Leave a Reply Cancel reply

Recent Posts

-

June 4, 2026

June 4, 2026

Staff Augmentation Onboarding Checklist: Security, Tools, and Culture

-

June 3, 2026

June 3, 2026

Legacy System Modernisation with Staff Augmentation: A 2026 Blueprint

-

June 1, 2026

June 1, 2026

Healthcare & SaaS Startups: How Staff Augmentation Cuts Time-to-Market

-

May 28, 2026

May 28, 2026

The Hybrid Agile Model: In-House Teams + Staff Augmentation in 2026

-

May 26, 2026

May 26, 2026

Staff Augmentation for Digital Transformation: Beyond Just Developers

-

May 25, 2026

May 25, 2026

Staff Augmentation vs Outsourcing vs In-House Hiring: When to Use Which

-

May 22, 2026

May 22, 2026

How to Choose the Right IT Staff Augmentation Company for Your Business

-

May 20, 2026

May 20, 2026

Staff Augmentation Onboarding Checklist: The Ultimate Guide

-

May 19, 2026

May 19, 2026

Staff Augmentation Pricing Models Explained: FTE vs T&M vs Hybrid

-

May 15, 2026

May 15, 2026

AI‑Driven Development Needs AI‑Ready Talent: Here’s How Staff Augmentation Helps

-

May 13, 2026

May 13, 2026

Best Staff Augmentation Companies for Enterprise Software Modernization (2026)

-

May 8, 2026

May 8, 2026

Staff Augmentation for Fintech: Bridging Compliance, Security, and Speed

-

May 7, 2026

May 7, 2026

Technical Debt Reduction with Staff Augmentation: Fast, Safe, and Measurable

-

May 6, 2026

May 6, 2026

Why Staff Augmentation Matters for Tech Leaders in 2026

-

May 5, 2026

May 5, 2026

Best Staff Augmentation Companies for Enterprise Software Modernisation (2026)

-

May 4, 2026

May 4, 2026

What Is an AI‑Ready Talent Pool and Why It Matters in 2026

-

April 30, 2026

April 30, 2026

Why choose WitQuallis for staff augmentation?

-

April 29, 2026

April 29, 2026

Emerging tech & cloud strategy: how businesses stay ahead in 2026

-

April 28, 2026

April 28, 2026

How IoT and Azure are creating real‑time patient monitoring systems

-

April 27, 2026

April 27, 2026

Nearshoring vs offshore: why time‑zone alignment matters for Agile sprints

-

April 24, 2026

April 24, 2026

Staff Augmentation: A Complete Guide to Scaling Your Tech Team Efficiently

-

April 23, 2026

April 23, 2026

The Ultimate Guide to IT Staff Augmentation: Scaling Your Digital Success with WitQualis

-

April 22, 2026

April 22, 2026

What is Software Development? A Comprehensive Guide to the 2026 Digital Landscape

-

April 21, 2026

April 21, 2026

Boost Your Business ROI with Power BI Development Services

-

April 20, 2026

April 20, 2026

Why Hiring Python Developers Has Become Risky (and Critical)

-

April 17, 2026

April 17, 2026

Staff Augmentation: Scale Your Team Fast Without Full-Time Hires

-

April 16, 2026

April 16, 2026

Top 20 Emerging Technologies of 2026: Transform Your Business Now

-

April 15, 2026

April 15, 2026

🚀 Custom AI Development Costs in 2026: Complete Guide for Businesses

-

April 14, 2026

April 14, 2026

Overcoming IT Staff Augmentation Challenges: Scale Your Team Fast and Smart

-

April 13, 2026

April 13, 2026

Enterprise software modernisation 2026: why AI‑native refactoring and staff augmentation matter

-

April 10, 2026

April 10, 2026

AI‑native refactoring: re‑imagining legacy modernisation in 2026

-

April 9, 2026

April 9, 2026

AI-Native Refactoring: How to Modernise Legacy Systems in 2026

-

April 8, 2026

April 8, 2026

The business case for Web3: real‑world asset tokenization in real estate

-

April 7, 2026

April 7, 2026

The future of IoMT: smart hospitals in 2026

-

April 6, 2026

April 6, 2026

Cloud 3.0: beyond “lift‑and‑shift” to sovereignty

-

April 2, 2026

April 2, 2026

From filters to conversation: the shift in e‑commerce search

-

April 1, 2026

April 1, 2026

Case Studies: Migrating Legacy Enterprise Apps to Azure/AWS with IT Staff Augmentation Services

-

March 30, 2026

March 30, 2026

Why Hiring Full‑Time Quantum‑Resistant Encryption Specialists Is So Hard

-

March 27, 2026

March 27, 2026

Prompt engineering: from “nice‑to‑have” to core skill

-

March 26, 2026

March 26, 2026

The hybrid squad: reimagining teams for the AI era

-

March 25, 2026

March 25, 2026

Staff augmentation and the future of work: a new operating model

-

March 24, 2026

March 24, 2026

Why full‑time quantum‑resistant encryption specialists are so hard to hire

-

March 23, 2026

March 23, 2026

How Staff Augmentation Services Fixes Cybersecurity & Cloud Shortages

-

March 20, 2026

March 20, 2026

The Great Fortress: Modernizing Banking Apps to Combat Sophisticated AI-Driven

-

March 19, 2026

March 19, 2026

The Great Cloud Diversification: Navigating Data Residency in the Age of GDPR and the EU AI Act

-

March 18, 2026

March 18, 2026

How Staff Augmentation automotive AR Cuts Onboarding Costs in Automotive & Manufacturing | WitQualis Staff Augmentation

-

March 17, 2026

March 17, 2026

The Outcome-Based Model: How AI-Augmented Teams Are Multiplying Output | WitQualis

-

March 16, 2026

March 16, 2026

Why “Vibe Coding” and Prompt Mastery Are Now as Important as Syntax

-

March 13, 2026

March 13, 2026

The Witqualis Edge: How Agentic AI Tools Accelerate MVP Delivery by 40%

-

March 12, 2026

March 12, 2026

Beyond Copilots: The Rise of Agentic AI in the SDLC

-

March 11, 2026

March 11, 2026

Breaking the Monolith: A Definitive Guide to Using Generative AI for Microservices Migration (2026 Edition)

-

March 10, 2026

March 10, 2026

The Agentic Revolution: Why AI is Moving from "Suggesting Code" to "Executing Workflows"

-

March 9, 2026

March 9, 2026

Beyond the Search Bar: How LLMs are Revolutionising Retail Discovery

-

March 6, 2026

March 6, 2026

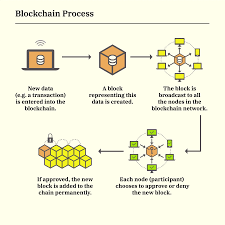

How Blockchain is Making Real Estate Investment Liquid and Accessible

-

March 5, 2026

March 5, 2026

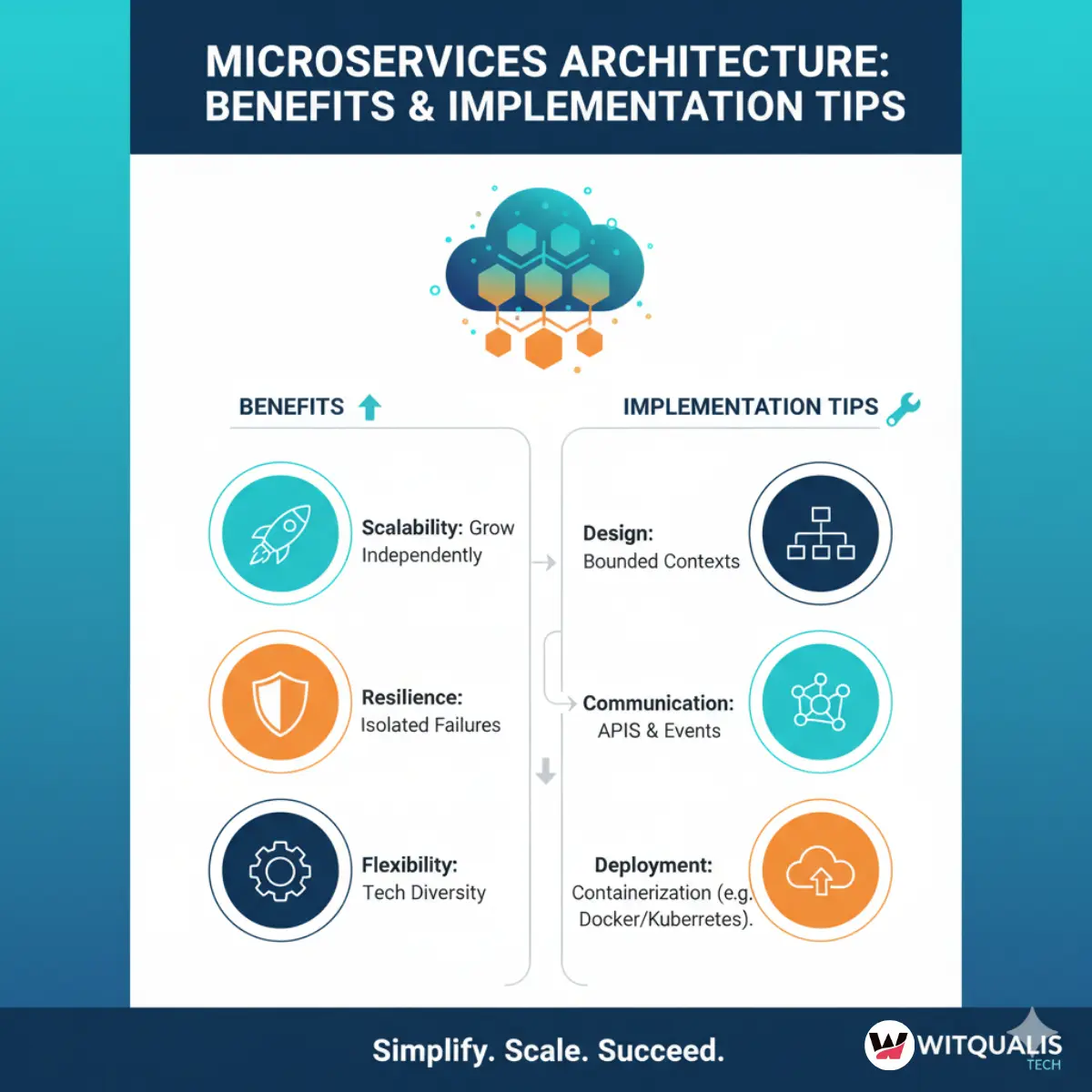

Microservices Architecture: Benefits and Implementation Tips

-

March 3, 2026

March 3, 2026

How Remote Work Has Changed the IT Industry and Tools You Need

-

March 2, 2026

March 2, 2026

API Design and Management Best Practices for Developers

-

February 27, 2026

February 27, 2026

The Impact of Internet of Things (IoT) on Modern Business

-

February 26, 2026

February 26, 2026

Virtual and Augmented Reality: Where Tech is Headed Next | 2026 Guide

-

February 25, 2026

February 25, 2026

Blockchain Beyond Cryptocurrency: Real-World Applications & Use Cases (2026 Guide)

-

February 24, 2026

February 24, 2026

The Role of AI in Enhancing IT Development: A Deep Dive (2026 Guide)

-

February 23, 2026

February 23, 2026

Maximizing Productivity: How Staff Augmentation Drives Innovation in IT Projects

-

February 20, 2026

February 20, 2026

Staff Augmentation vs. Outsourcing: Which IT Model Wins in 2026?

-

February 19, 2026

February 19, 2026

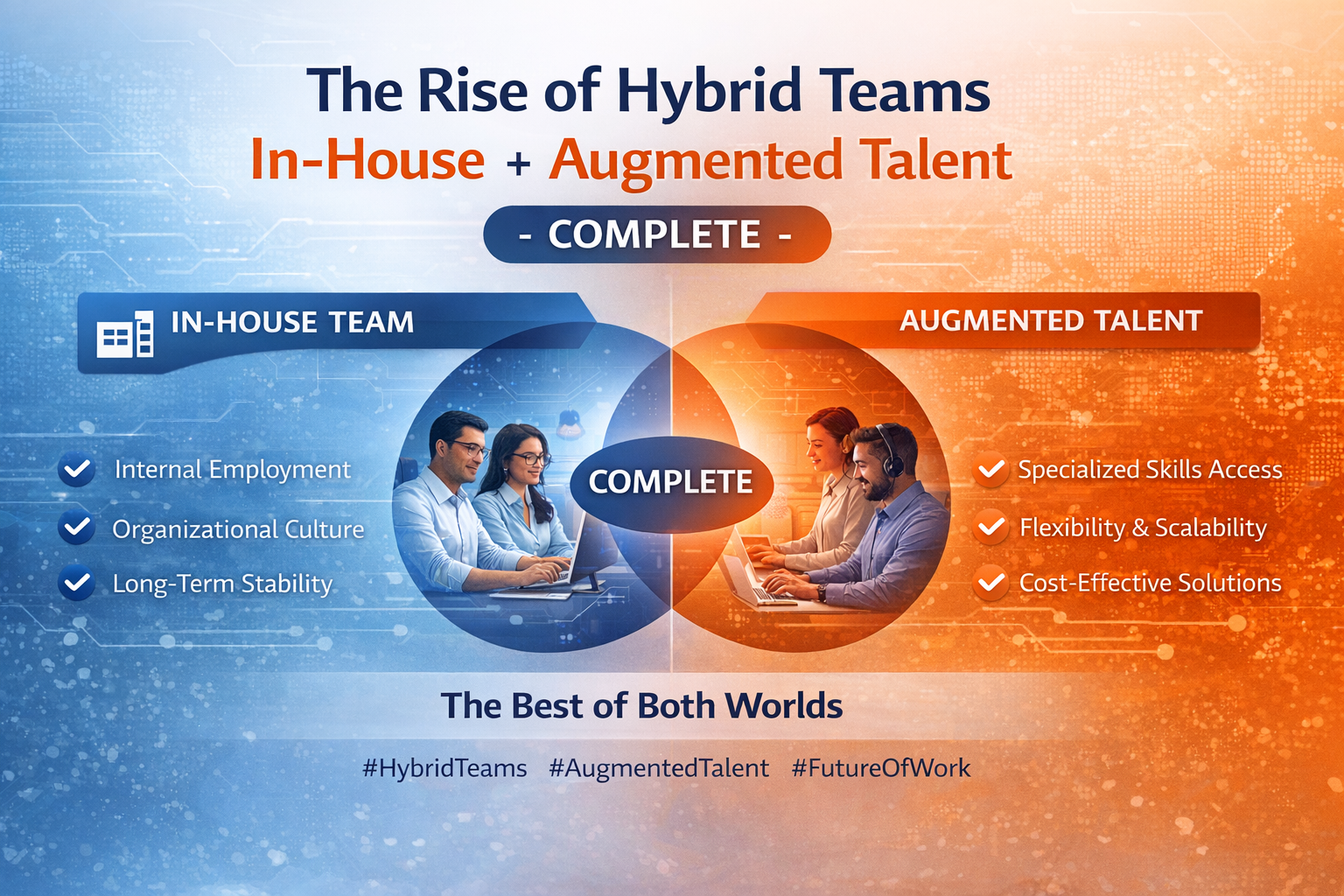

The Rise of Hybrid Teams: How to Leverage Staff Augmentation for Business Success

-

February 18, 2026

February 18, 2026

How Staff Augmentation is Transforming the Future of IT Workforce (2026)

-

February 17, 2026

February 17, 2026

5 Reasons Why Staff Augmentation is Key for Tech Skill Shortages: staff augmentation for tech skill shortages

-

February 16, 2026

February 16, 2026

How Staff Augmentation Helps Scale Your Business Without Overheads in 2026

-

February 13, 2026

February 13, 2026

The Power of Diverse Teams: How Staff Augmentation Drives Inclusion (2026)

-

February 12, 2026

February 12, 2026

The Role of 5G Technology in Shaping IT Development and Augmentation in 2026

-

February 11, 2026

February 11, 2026

The Future of Blockchain: Revolutionizing IT Services in 2026

-

February 10, 2026

February 10, 2026

Building an Authentic Employer Brand Through Staff Augmentation in 2026

-

February 9, 2026

February 9, 2026

Supporting Augmented Professionals During Projects: Best Practices for Success

-

February 6, 2026

February 6, 2026

Cost Optimization in IT: Build vs Buy vs Staff Augmentation (2026 Guide)

-

February 5, 2026

February 5, 2026

The Role of DevOps in High-Performance Engineering Teams: Best Practices Guide

-

February 4, 2026

February 4, 2026

How to Ensure Code Quality When Working with Augmented Teams: Best Practices Guide

-

February 3, 2026

February 3, 2026

Tech Talent Trends That Will Shape the Next 5 Years: 2026–2031 Outlook

-

February 2, 2026

February 2, 2026

The Rise of Hybrid Teams: In-House + Augmented Talent - The Complete Guide

-

January 30, 2026

January 30, 2026

How We Support and Retain High-Performing Tech Professionals

-

January 29, 2026

January 29, 2026

Aligning Technology Strategy with Business Growth Goals: A Complete Framework

-

January 28, 2026

January 28, 2026

What Top Developers Look for in a Staff Augmentation Partner: A Developer's Perspective

-

January 27, 2026

January 27, 2026

The Human Side of IT Services: Why People Matter More Than Code

-

January 23, 2026

January 23, 2026

Why Culture Fit Matters in Staff Augmentation Models | Witqualis

-

January 22, 2026

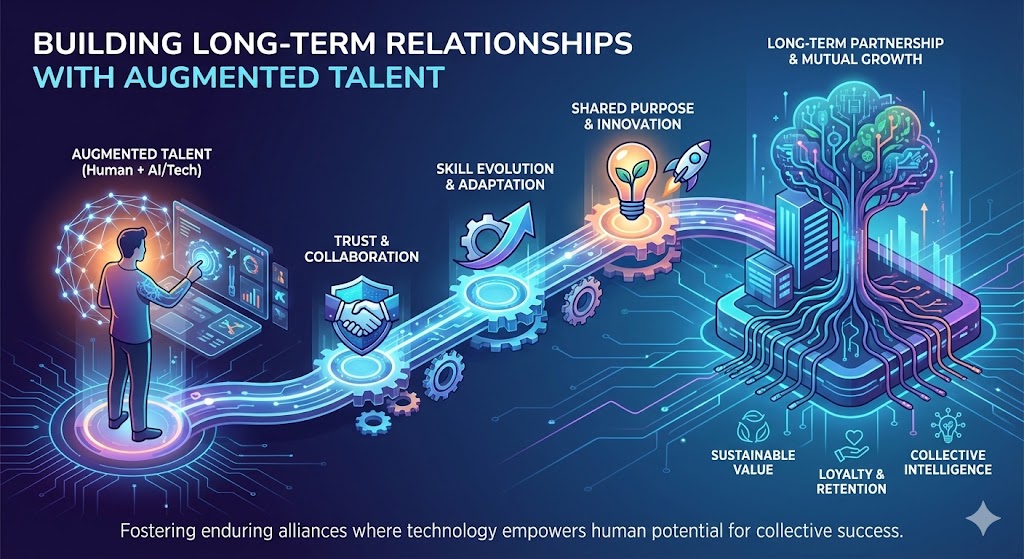

January 22, 2026

Building Long-Term Relationships with Augmented Talent: A Strategic Approach

-

January 21, 2026

January 21, 2026

Cybersecurity Skills in Demand: What Companies Need Now in 2026

-

January 20, 2026

January 20, 2026

Why Flexible Hiring Models Drive Better Business Outcomes

-

January 19, 2026

January 19, 2026

Reducing Time-to-Market with On-Demand Tech Talent

-

January 15, 2026

January 15, 2026

How Dedicated Development Teams Accelerate Product Delivery

-

January 14, 2026

January 14, 2026

Scaling Tech Teams Quickly Without Compromising Quality

-

January 12, 2026

Common Mistakes Companies Make When Outsourcing IT Projects (And How to Avoid Them)

-

January 9, 2026

Key Workforce Trends Every CTO Should Watch This Year

-

January 8, 2026

January 8, 2026

How AI Is Transforming IT Staffing and Talent Matching

-

January 7, 2026

January 7, 2026

How IT Staff Augmentation Helps Startups Scale Faster

-

January 6, 2026

January 6, 2026

Choosing the Right Tech Stack for Scalable Digital Products

-

January 5, 2026

January 5, 2026

How Remote Talent Is Reshaping Global IT Teams

-

December 29, 2025

December 29, 2025

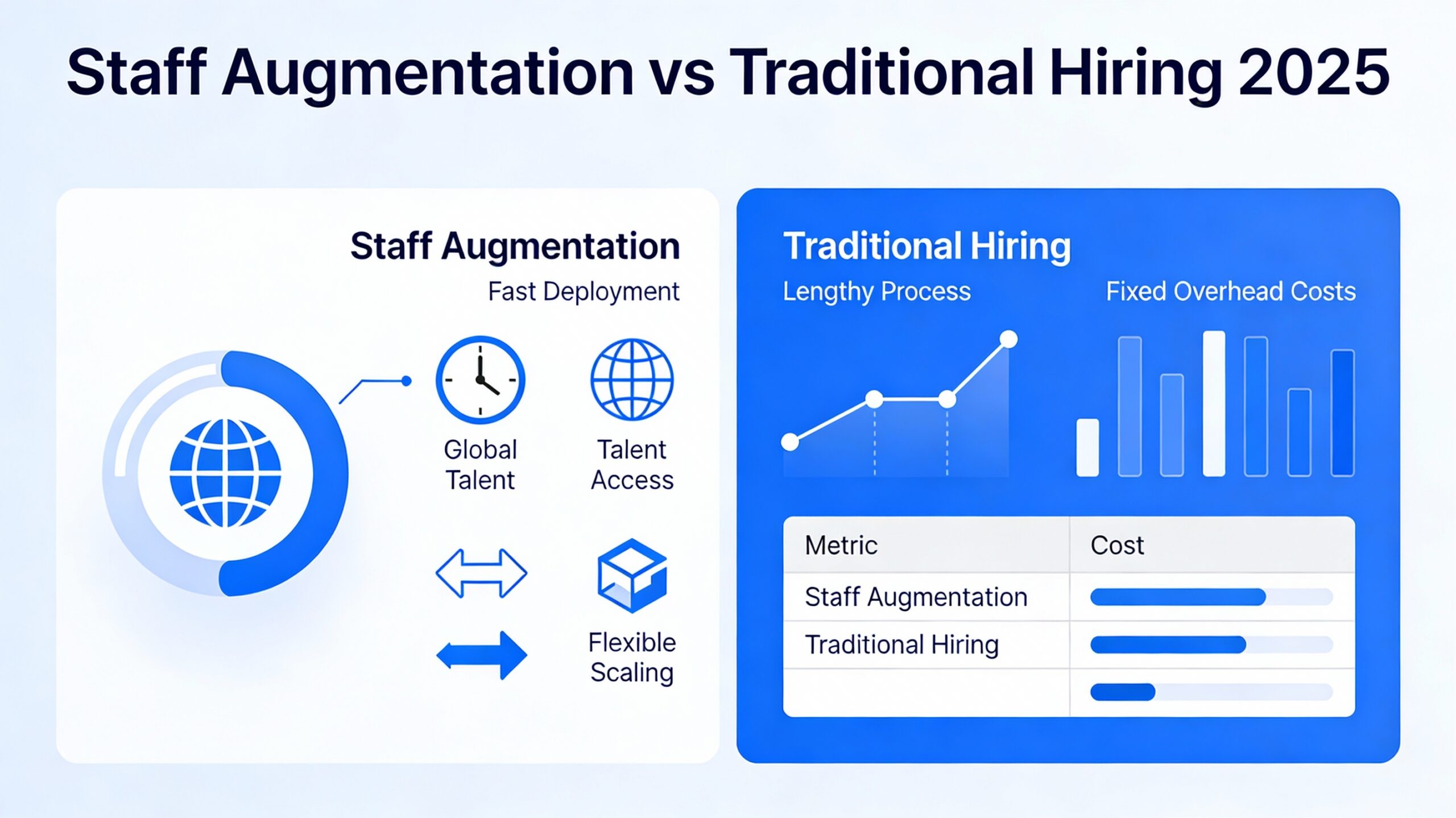

Staff Augmentation vs Traditional Hiring 2025

-

December 24, 2025

December 24, 2025

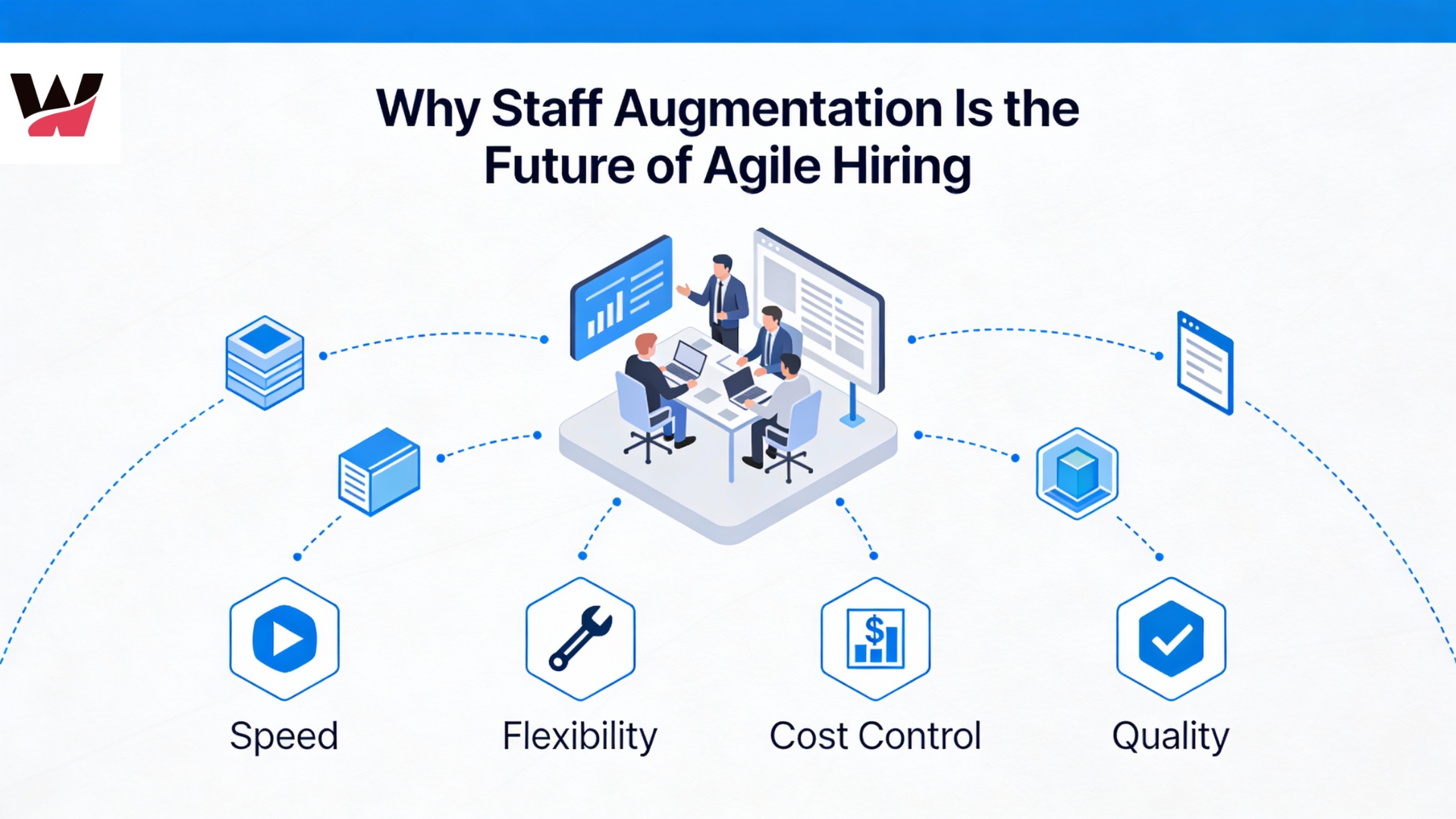

Why Staff Augmentation Is the Future of Agile Hiring

-

December 22, 2025

December 22, 2025

Why Hiring Faster Doesn’t Mean Compromising Quality — It Means Finding the Right Partner

-

December 19, 2025

December 19, 2025

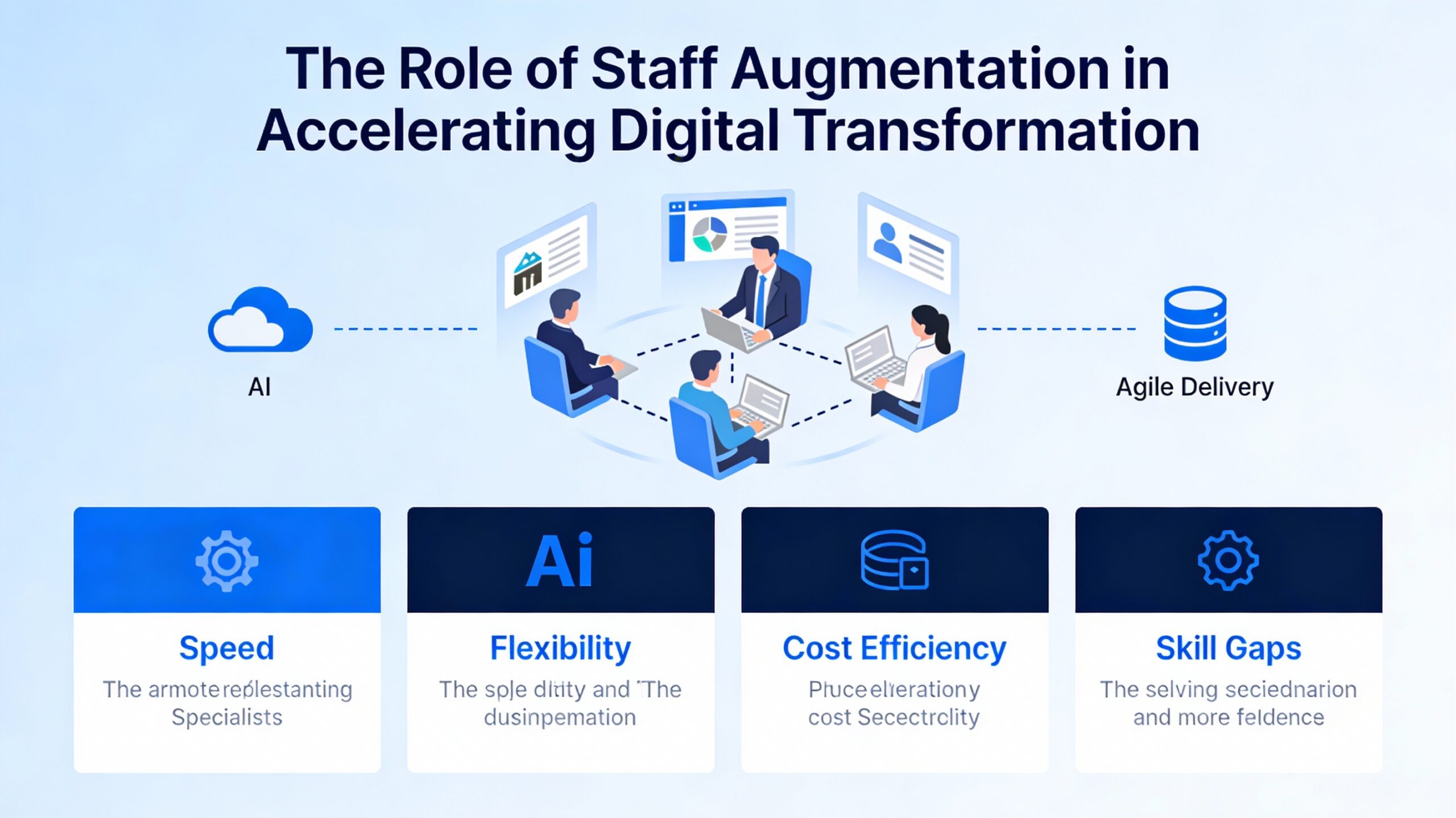

The Role of Staff Augmentation in Accelerating Digital Transformation

-

December 18, 2025

December 18, 2025

5 Tech Hiring Trends Every CTO Should Watch in 2025

-

December 17, 2025

Why Soft Skills Are the Hidden Superpower in Tech Teams

-

December 15, 2025

December 15, 2025

How WitQualis Connects Global Talent with Local Opportunities

-

December 10, 2025

December 10, 2025

Why Businesses Need Agile, Scalable, and Skilled Tech Teams—Now More Than Ever

-

December 5, 2025

December 5, 2025

The Human Side of Technology: Why People Matter as Much as Code

-

December 4, 2025

December 4, 2025

The Rise of Contract-Based Developers in the Global IT Market

-

December 3, 2025

December 3, 2025

Talent Has No Boundaries: Why the Best Teams Are Built Across Borders

-

December 2, 2025

December 2, 2025

From Startups to Enterprises: How Staff Augmentation Supports Every Stage of Growth

-

December 1, 2025

December 1, 2025

Great Ideas Need Great Execution – How WitQualis Teams Turn Vision into Reality

-

November 28, 2025

November 28, 2025

Scaling Your Tech Team Shouldn’t Slow Your Business – It Should Power It

-

November 27, 2025

November 27, 2025

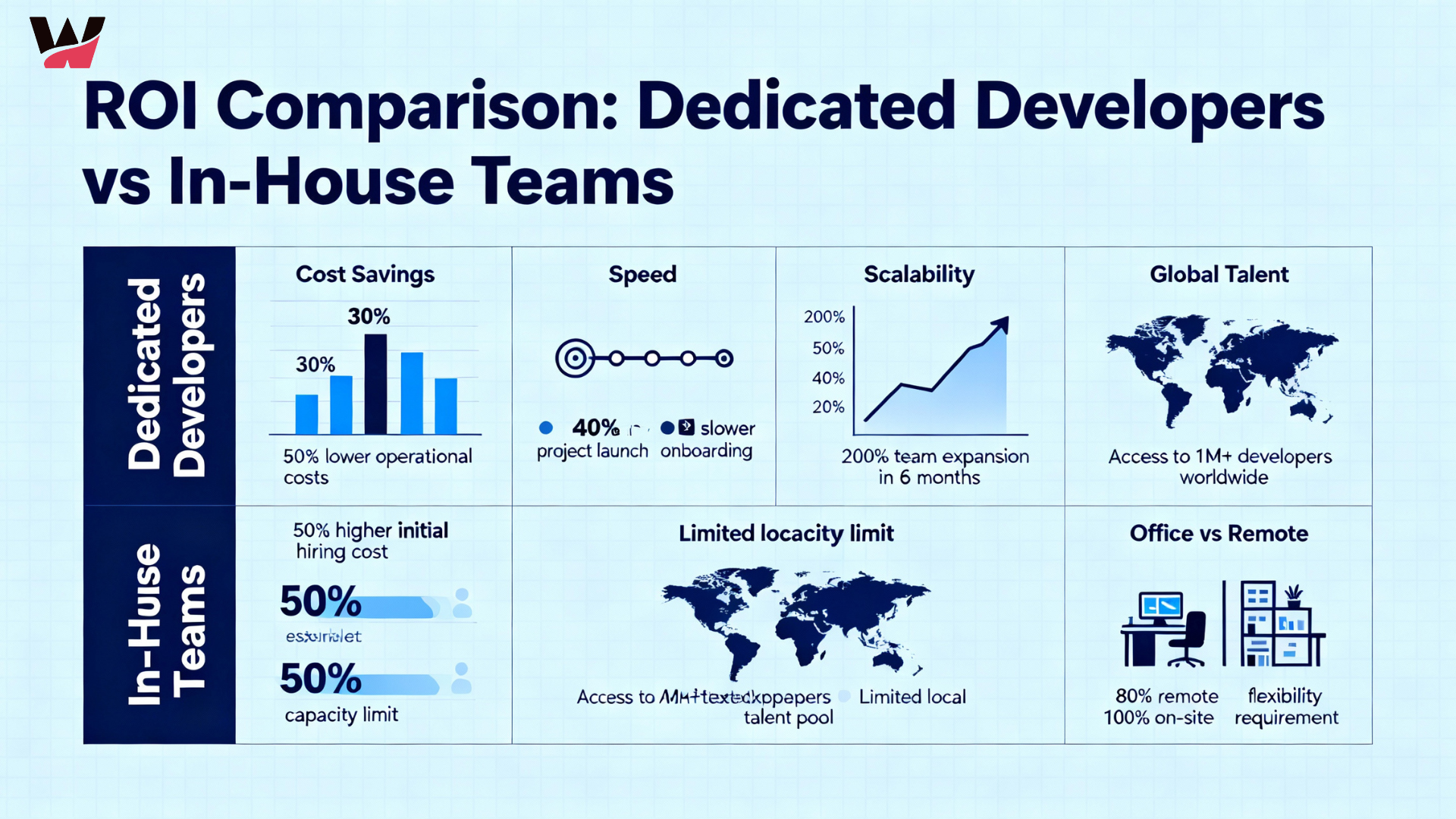

The ROI of Hiring Dedicated Developers vs In-House Teams

-

November 26, 2025

November 26, 2025

Why Hybrid Team Models Are the Future of IT Project Delivery

-

November 24, 2025

November 24, 2025

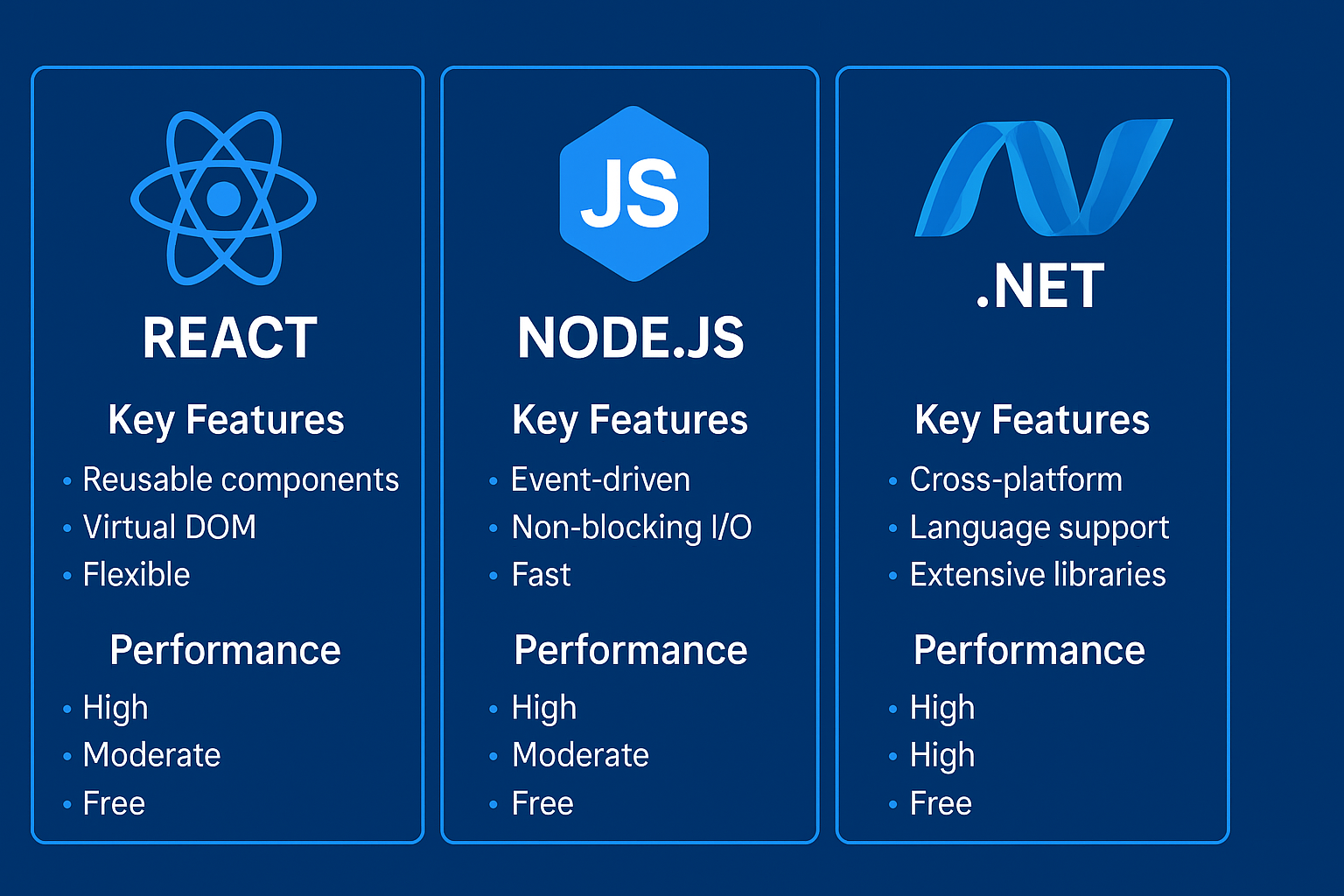

React, Node.js, or .NET? Choosing the Right Tech Stack for Your Next Project

-

November 21, 2025

November 21, 2025

Why Hiring Remote Developers Is the Smartest Move for Startups

-

November 20, 2025

November 20, 2025

How Full-Stack Developers Drive Efficiency in Modern Web Projects

-

November 19, 2025

November 19, 2025

Bridging the Skill Gap: How Augmented Teams Empower Digital Transformation

-

November 18, 2025

November 18, 2025

New Technologies for 2025: What Businesses Need to Grow

-

November 14, 2025

November 14, 2025

IT Staff Augmentation Reduces Project Costs Quality

-

November 13, 2025

November 13, 2025

Empowering Global Collaboration: How WitQualis Builds Seamless Remote Teams

-

November 12, 2025

November 12, 2025

Why Global Businesses Are Turning to India for Skilled IT Talent

-

November 11, 2025

November 11, 2025

Staff Augmentation Helps You Scale Your Tech Team

-

November 10, 2025

November 10, 2025

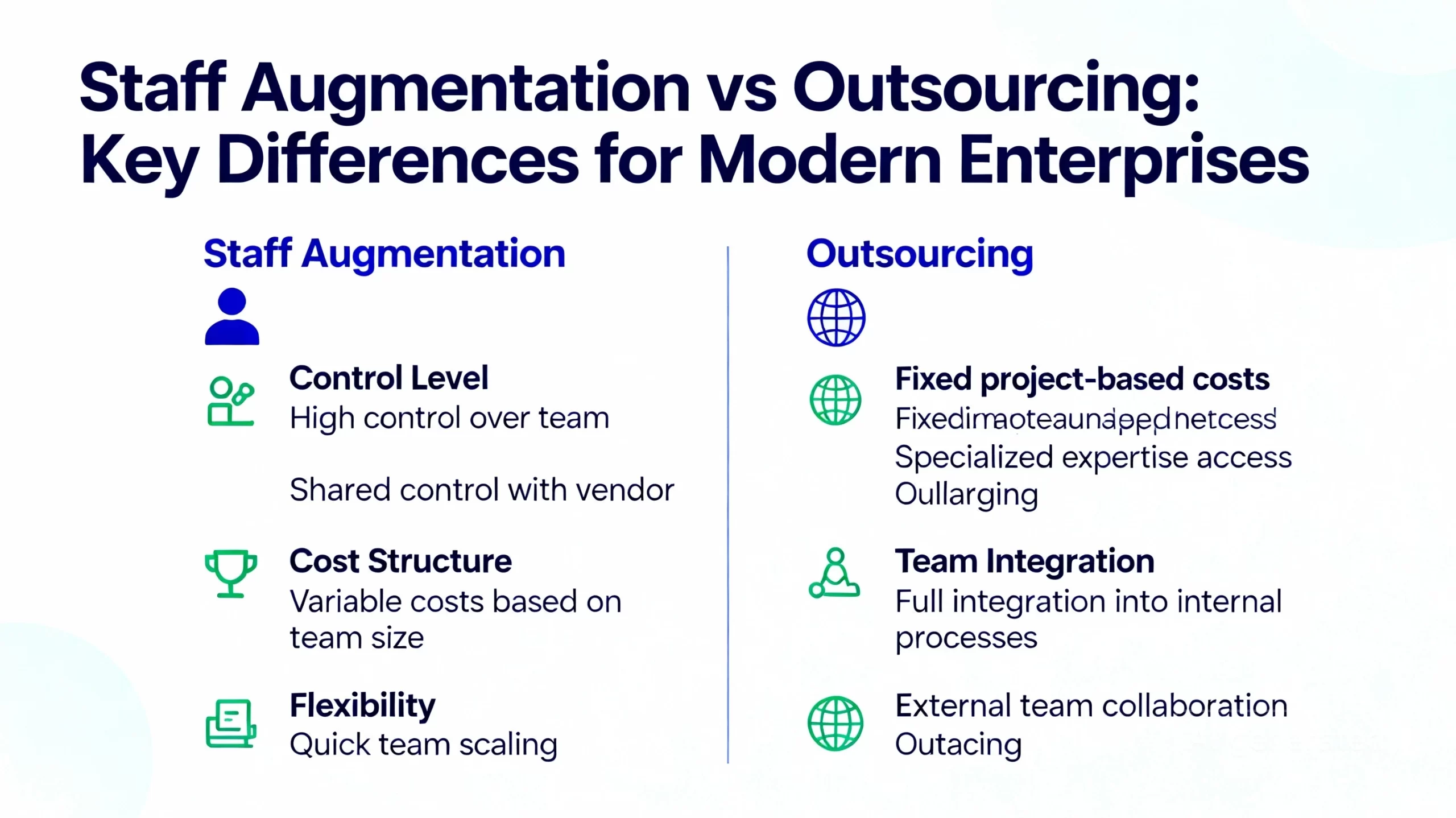

Staff Augmentation vs Outsourcing in 2025: Which Is Best for Modern Enterprises?

-

November 7, 2025

November 7, 2025

How AI, IoT, and Cloud Are Redefining Software Development in 2025

-

November 6, 2025

November 6, 2025

Scaling Smarter: How Augmented Teams Help Deliver Projects Faster in 2025

-

November 5, 2025

November 5, 2025

How AI and Automation Are Transforming Software Development Teams in 2025

-

November 3, 2025

November 3, 2025

The Future of IT Staffing: Why Businesses Are Choose Staff Augmentation in 2025

-

October 31, 2025

October 31, 2025

Why Technology is Essential for Business Growth (2025): Top Reasons & Winning Strategies

-

October 30, 2025

October 30, 2025

How the Internet of Things (IoT) Is Revolutionizing Modern Business

-

October 29, 2025

October 29, 2025

Blockchain Cryptocurrency: Real-World Applications | WitQualis

-

October 28, 2025

October 28, 2025

API Design and Management Best Practices for Developers

-

October 27, 2025

October 27, 2025

Microservices Architecture: Benefits, Implementation Tips & Real-World Examples

-

October 24, 2025

October 24, 2025

How Remote Work Has Transformed the IT Industry Tools in 2025

-

October 23, 2025

October 23, 2025

Virtual and Augmented Reality: The Tech Revolution | WitQualis

-

October 22, 2025

October 22, 2025

How AI is Revolutionizing Business Decision-Making in 2025 | In-Depth Analysis & Trends

-

October 16, 2025

October 16, 2025

Blockchain Beyond Crypto: Building Trust in Business Operations

-

October 15, 2025

October 15, 2025

How Custom Software Solutions Drive Business Efficiency | Expert Guide

-

October 13, 2025

October 13, 2025

How Automation Reduces Costs & Boosts Productivity in 2025

-

October 10, 2025

October 10, 2025

Staff Augmentation: The Key to Building Agile & Scalable Teams

-

October 9, 2025

October 9, 2025

The Role of Cloud Computing in Scaling Modern Businesses

-

October 8, 2025

October 8, 2025

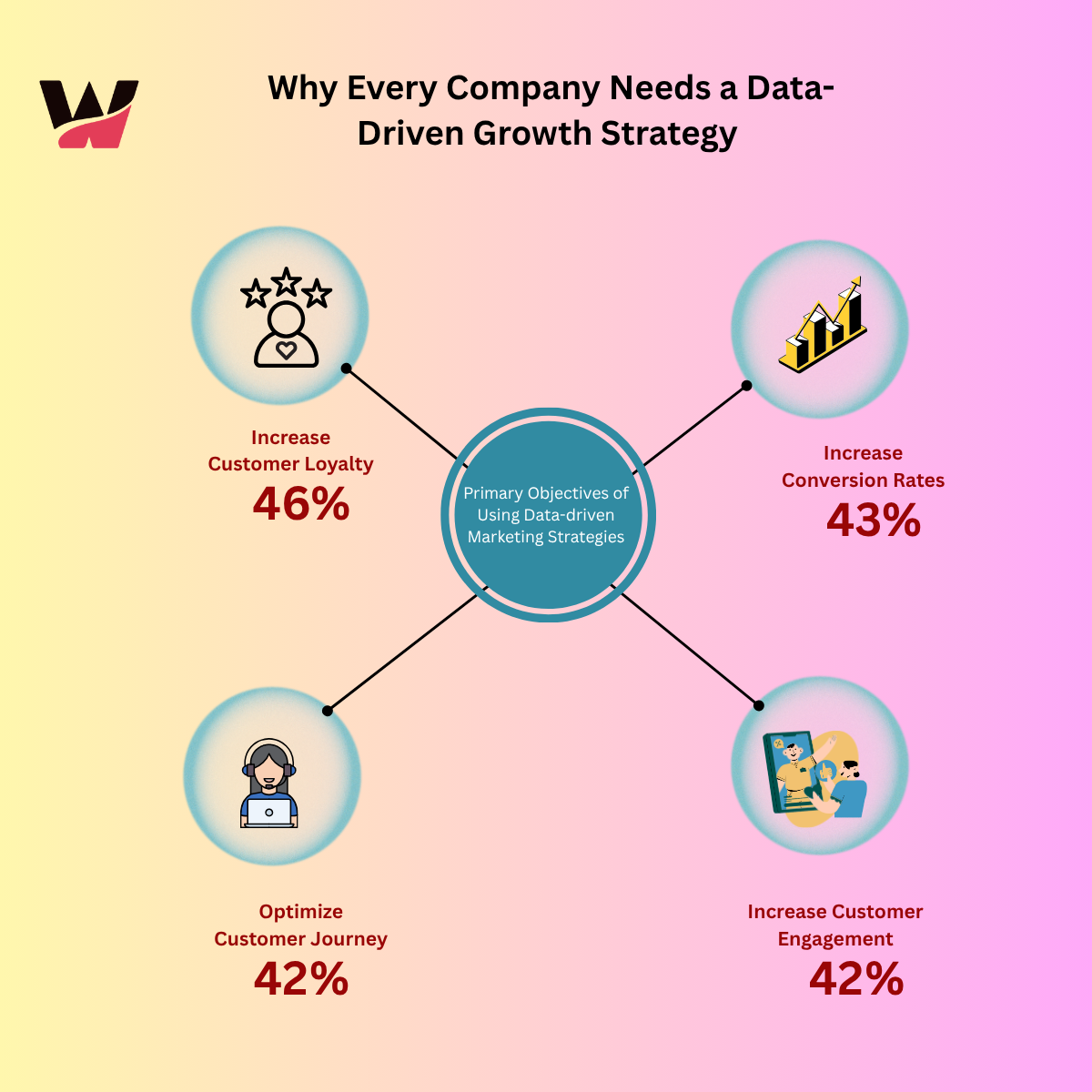

Why Every Company Needs a Data-Driven Growth Strategy

-

October 7, 2025

October 7, 2025

API Design Best Practices for Developers in 2025 |API versioning strategies

-

October 6, 2025

October 6, 2025

virtual reality vs augmented reality vs mixed reality

-

October 3, 2025

October 3, 2025

The Impact of Internet of Things (IoT) on Modern Business

-

October 1, 2025

October 1, 2025

Blockchain Beyond Cryptocurrency: Real-World Applications in 2025

-

September 30, 2025

September 30, 2025

The Future of Artificial Intelligence: Trends and Opportunities

-

September 26, 2025

September 26, 2025

Microservices Architecture: Benefits and Implementation Tips

-

September 25, 2025

September 25, 2025

Why Staff Augmentation Is a Smart Move for IT Projects in 2025

-

September 24, 2025

September 24, 2025

Cloud Computing Explained: Benefits and Best Practices (AWS, Azure, Google Cloud)

-

September 23, 2025

September 23, 2025

How Staff Augmentation Can Help Scale Your IT Team Efficiently

-

January 6, 2025

January 6, 2025

Choosing the Right Front-End Web Development Service in the UK

-

January 4, 2025

January 4, 2025

Why Choose Staff Augmentation Services in India for Your IT Projects?

-

December 21, 2024

December 21, 2024

Boost Your Business ROI with Power BI Development Services in the Maldives and Iceland

-

December 20, 2024

December 20, 2024

Get a Free Consultation: Is Your E-Commerce Business in the UAE Ready for Digital Transformation?

-

July 6, 2023

July 6, 2023

The Future of Small Business and Know-How to Climb up the Ladder

I really appreciate the focus on staff augmentation and dedicated teams—it’s a smart approach for scaling projects with specialized skills. Being able to quickly integrate developers across different technologies seems like a real advantage for businesses navigating complex tech needs.

Thanks for sharing the detailed overview of WitQualis Technologies’ services and expertise. It’s clear you specialize in a wide range of development solutions, from frontend and backend technologies to full-stack and dedicated teams—really helpful for someone looking to build or scale a tech product. The breakdown of your offerings makes it easy to see how you can support different stages of development, whether it’s MVP creation or ongoing staff augmentation.

Navigating the landscape of dedicated development teams can be complex, and I appreciate how you’ve clearly categorized the specialized skill sets available. I’m curious, how do you typically approach the onboarding process to ensure these specialized developers integrate smoothly with an existing internal team?

The emphasis on building dedicated cross-functional teams, especially those covering everything from React Native to Laravel, is a strategic approach for handling end-to-end product development. It seems that offering specific solutions like MVP creation and staff augmentation is key to helping clients navigate the complex technical landscape efficiently.