Enterprise software modernisation 2026: why AI‑native refactoring and staff augmentation matter

In 2026, enterprise software is no longer just a “support system” — it is the backbone of revenue, customer experience, and competitive advantage.

Many organisations, however, still run core operations on legacy systems built 10, 15, or even 20 years ago.

These systems are hard to change, slow to adapt, and expensive to maintain — yet they cannot be turned off without major business risk.

This is where enterprise software modernisation steps in.

It is not just about “upgrading technology”; it is about:

- Reducing technical debt,
- Improving resilience and security, and
- Enabling faster, AI‑driven feature delivery.

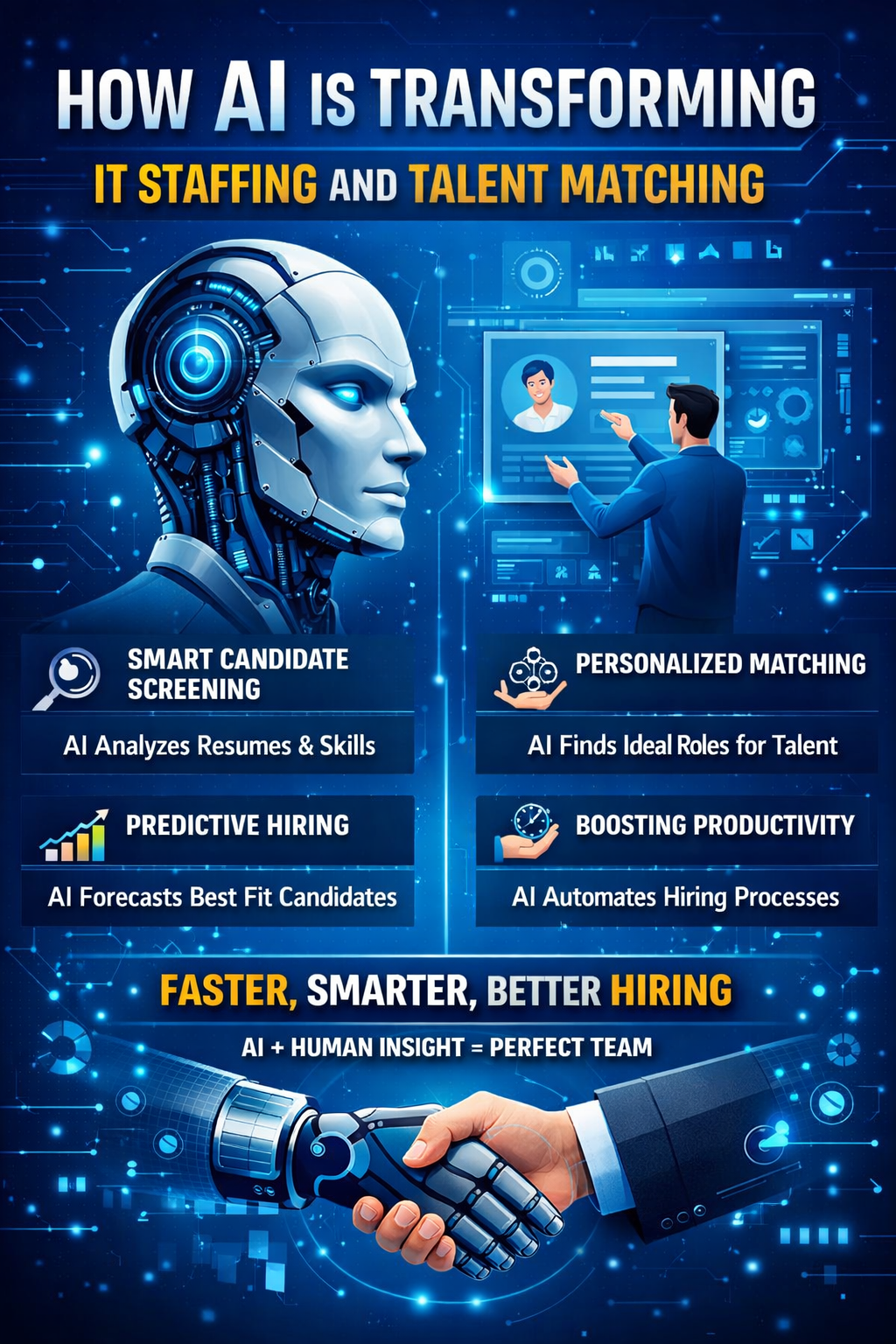

At the heart of modernisation, two forces are reshaping the game:

- AI‑native (AI‑driven) refactoring of legacy systems, and
- Staff augmentation services from experienced IT staff augmentation companies that supply the specialised skills needed at scale.

For CTOs, product leaders, and IT decision‑makers, 2026 is the year when legacy modernisation must be treated as a business‑critical programme, not a “nice‑to‑have” project.

What is enterprise software modernisation (and why it matters in 2026)?

Enterprise software modernisation defined

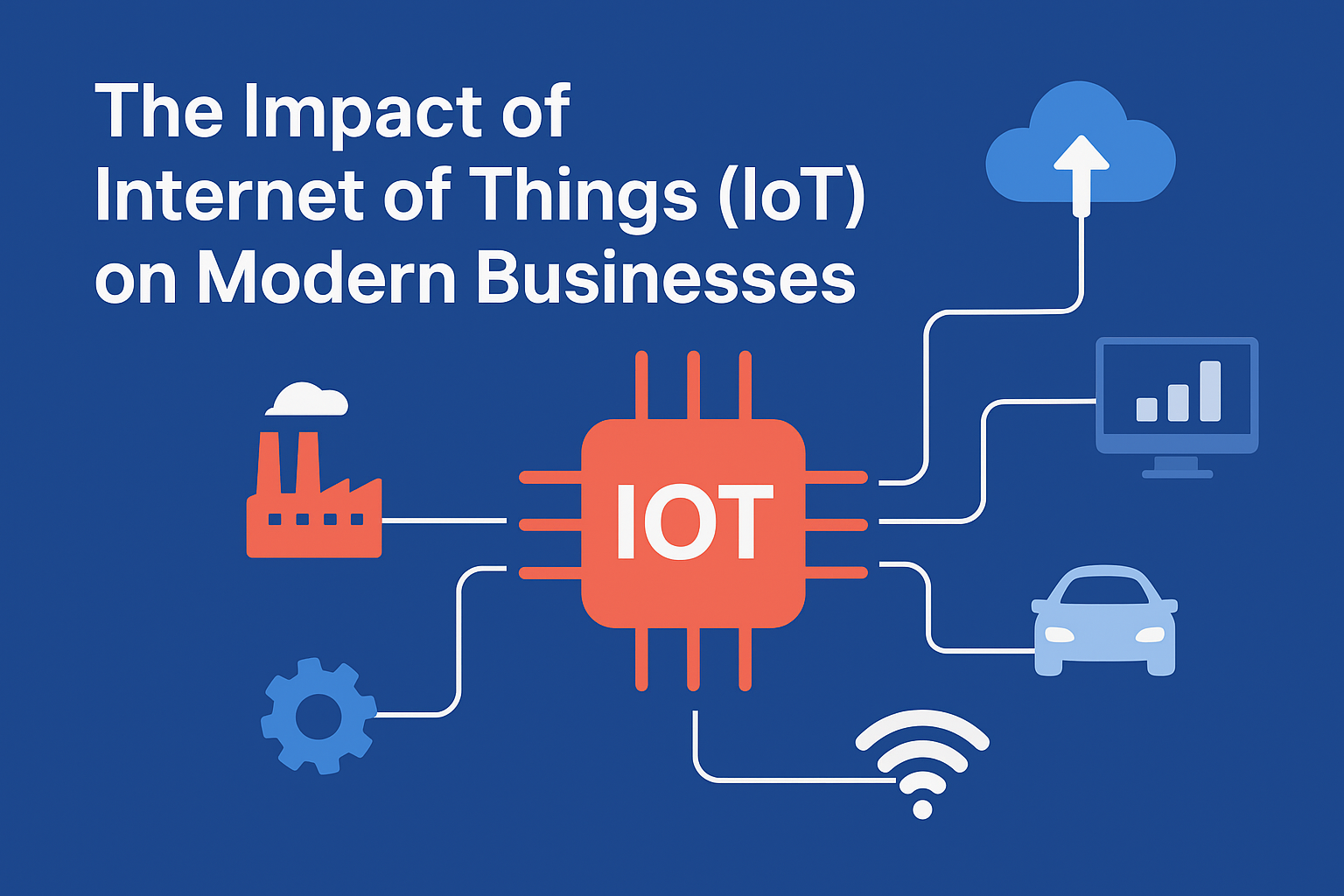

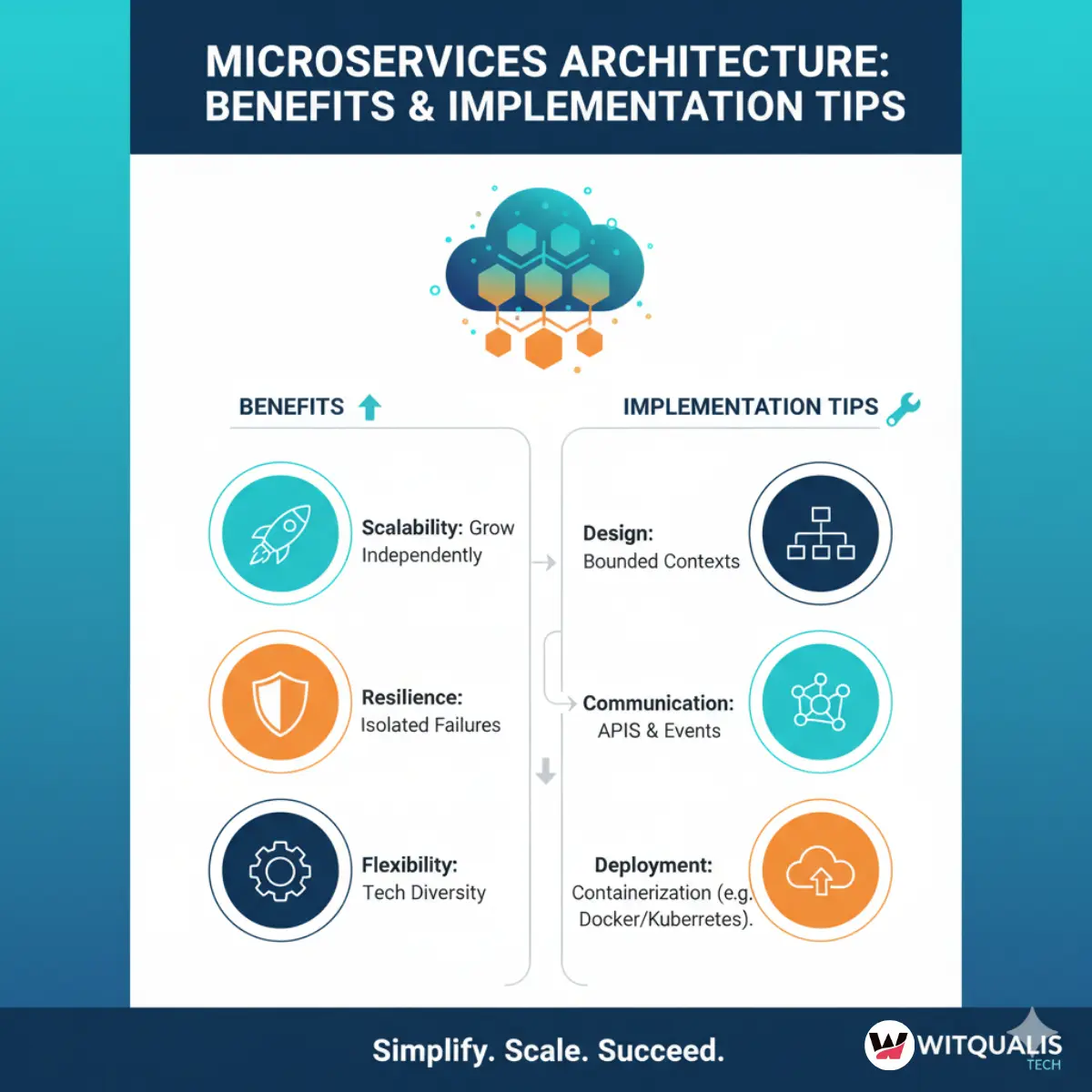

Enterprise software modernisation is the process of upgrading, re‑architecting, or rewriting legacy systems to run on modern infrastructure, with better maintainability, scalability, and security.

This can include:

- Refactoring monolithic applications into microservices,
- Moving legacy codebases to cloud‑native platforms,
- Replacing outdated frameworks and libraries,
- Introducing AI‑driven features and observability layers.

In 2026, this is not limited to “technology refresh” conversations.

Modernisation is now tightly linked to:

- Digital transformation goals,
- Customer‑experience improvements, and
- Regulatory and security requirements.

Imagine a bank still running core transaction processing on a 20‑year‑old monolith.

Every new product, payment channel, or compliance change requires weeks of manual work and carries a high risk of outage.

Under these conditions, the business model is constrained by the technology stack — not by market demand.

Why 2026 is a pivotal year for modernisation

Several trends have converged by 2026:

- Higher expectations for agility and speed
  - Customers expect real‑time responses, constant innovation, and seamless digital experiences.
  - Legacy systems cannot keep pace with these expectations.
- Rising AI‑native expectations
  - Enterprises are expected to integrate AI‑driven features (e.g., personalisation, predictive analytics, automated workflows) into their software stacks.
  - Legacy systems often lack the architecture and data‑access patterns needed to support these features.
- Growing regulatory and security pressures
  - Compliance requirements (e.g., data‑protection laws, cyber‑security frameworks) are tightening.
  - Legacy systems are often harder to audit, patch, and secure.
- Talent scarcity and knowledge‑loss risk
  - Many legacy systems depend on rare skill sets (e.g., classic COBOL, bespoke scripting, discontinued vendor tools).
  - As older engineers retire, knowledge gaps appear, increasing risk and downtime.

For decision‑makers in enterprises, this means enterprise software modernisation is no longer an IT‑only topic — it is a board‑level risk and growth issue.

Real‑world challenges that make legacy modernisation urgent

Business‑level pain points

- Slow time‑to‑market
  - Feature‑delivery cycles are long because every change requires manual testing, deep domain knowledge, and conservative risk assessment.
  - Competitors with modern, cloud‑native stacks move faster.
- Brittle architectures and shadow IT
  - New business capabilities are often built on top of legacy systems via fragile scripts, one‑off connectors, and “shadow systems.”
  - These workarounds often become de facto systems of record, deepening technical debt.
- High operational cost and fragility
  - Legacy systems require specialised operations and maintenance skills, frequent firefighting, and costly contingency planning.
  - Downtime can directly impact revenue and customer trust.
- Difficulty integrating with AI and cloud services
  - Legacy systems often lack APIs, modern data‑access patterns, and cloud readiness.
  - This makes it hard to plug in AI‑driven features, analytics platforms, or SaaS products.
- Security and compliance risk
  - Outdated frameworks and libraries expose organisations to known vulnerabilities.
  - Many legacy systems cannot be easily hardened or patched.

Imagine a manufacturing company whose core production‑planning software has not changed significantly in 15 years.

Every new factory, market, or regulatory‑requirement change requires a major engineering effort, and the business is forced to “wait for IT” instead of responding to market signals.

How AI‑native refactoring differs from traditional approaches

Traditional refactoring vs. AI‑native refactoring

Traditionally, refactoring has been a manual, human‑driven activity:

- Engineers inspect the code by hand, identify hotspots, and make incremental changes.
- The process is slow, fragmented, and usually limited to the areas engineers already know well.

AI‑native refactoring changes this by:

- Using AI‑driven analysis tools to scan and map the entire codebase,
- Detecting patterns, anti‑patterns, and technical‑debt hotspots at scale, and
- Generating refactoring recommendations and transformation templates that can be applied consistently.

This means AI‑native refactoring:

- Can cover the entire legacy codebase, not just the parts that are frequently touched.
- Surfaces hidden coupling and security‑vulnerable patterns that humans might miss.
- Helps prioritise refactoring efforts by business impact and risk.

Benefits of AI‑driven refactoring for legacy systems

- Faster analysis and mapping
  - A large system can often be analysed in hours or days, rather than months.
  - Dependency graphs and module maps are generated automatically.
- Systematic technical‑debt reduction
  - AI‑driven tools can flag duplicate logic, tightly coupled components, and security‑related issues.
  - Engineers can then focus on high‑impact refactoring, guided by prioritised recommendations.
- Incremental, low‑risk modernisation
  - AI‑driven refactoring is often applied one bounded context at a time, so critical flows are not disrupted.
  - New, modern‑stack components are introduced around or beside legacy modules.
- Improved observability and governance
  - After refactoring, logs, metrics, and traces are standardised.
  - Operations and compliance teams get clearer visibility into system behaviour.

Imagine a core banking system where AI‑driven refactoring tools have been used to:

- Identify a massive “transaction‑engine” class that contains most of the business logic,
- Extract it into a set of well‑defined services, and
- Introduce observability across the entire transaction lifecycle.

The result is a system that is easier to maintain, safer to modify, and faster to extend — without a disruptive “big‑bang” rewrite.
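Introducing new components beside legacy modules, instead of rewriting everything at once, is often called the strangler fig pattern. A minimal Python sketch of the routing layer, assuming two hypothetical handlers (`legacy_handler`, `modern_handler`) and a rollout rule keyed on a customer ID; it illustrates the idea and is not a production gateway:

```python
import hashlib
from typing import Callable, Dict

Request = Dict[str, object]
Handler = Callable[[Request], Dict[str, object]]


def legacy_handler(request: Request) -> Dict[str, object]:
    # Hypothetical stand-in for the monolith's existing transaction code path.
    return {"status": "ok", "served_by": "legacy"}


def modern_handler(request: Request) -> Dict[str, object]:
    # Hypothetical stand-in for the newly extracted service.
    return {"status": "ok", "served_by": "modern"}


def bucket(key: str) -> int:
    """Map a key to a stable bucket 0-99 so the same customer always takes the same path."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


class StranglerRouter:
    """Send a configurable share of traffic to the modern service; everything else stays on legacy."""

    def __init__(self, legacy: Handler, modern: Handler, rollout_percent: int) -> None:
        if not 0 <= rollout_percent <= 100:
            raise ValueError("rollout_percent must be between 0 and 100")
        self.legacy = legacy
        self.modern = modern
        self.rollout_percent = rollout_percent

    def handle(self, request: Request) -> Dict[str, object]:
        customer_id = str(request.get("customer_id", ""))
        if bucket(customer_id) < self.rollout_percent:
            try:
                return self.modern(request)
            except Exception:
                # The legacy path remains a safety net while the migration is in progress.
                return self.legacy(request)
        return self.legacy(request)
```

Raising `rollout_percent` in stages moves traffic across gradually, and setting it back to 0 acts as a rollback. Hashing the customer ID keeps each customer on a consistent path, which makes behaviour differences easier to investigate.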

Step‑by‑step: how to implement AI‑native refactoring and legacy modernisation

Step 1 – Define the business‑case and modernisation strategy

Before any code is changed, a clear strategy must be defined:

- Which systems are mission‑critical, and which are merely “nice to fix”.
- What business outcomes are expected from modernisation (e.g., lower downtime, faster feature delivery, reduced maintenance cost).

At this stage, staff augmentation services can be highly valuable.

Organisations that lack internal experts in AI‑driven development and legacy modernisation can bring in AI‑native engineers via IT staff augmentation companies to:

- Assess the current state of the legacy systems,
- Recommend refactoring and modernisation strategies, and
- Help design a phased migration roadmap aligned with business goals.

Step 2 – Map the codebase, data‑flows, and dependencies

- The legacy codebase is ingested into an AI‑driven analysis platform.
- APIs, endpoints, database schemas, and configuration files are catalogued.

Visual dependency graphs are created so that teams can:

- Understand which modules are “core” and which are “peripheral”, and
- Identify hidden integration points that are not documented.

This mapping is often repeated at regular intervals so the AI tools can detect drift and unexpected changes.
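To make the mapping step concrete, here is a small sketch that builds a module‑level dependency graph and diffs two snapshots to detect drift. It assumes, purely for illustration, a Python codebase supplied as a dictionary of module name to source text; real legacy estates (COBOL, Java, stored procedures) need language‑specific parsers, and an AI‑driven platform would go well beyond import analysis.

```python
import ast
from typing import Dict, Set, Tuple

Edge = Tuple[str, str]  # (importing module, imported module)


def module_dependencies(sources: Dict[str, str]) -> Set[Edge]:
    """Return import edges between modules that are part of the codebase being mapped."""
    edges: Set[Edge] = set()
    for module, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Import):
                targets = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                targets = [node.module]
            else:
                continue
            for target in targets:
                # Keep internal edges only; third-party imports would be catalogued separately.
                if target in sources and target != module:
                    edges.add((module, target))
    return edges


def fan_in(edges: Set[Edge]) -> Dict[str, int]:
    """Count how many modules depend on each module; high fan-in suggests a 'core' module."""
    counts: Dict[str, int] = {}
    for _, target in edges:
        counts[target] = counts.get(target, 0) + 1
    return counts


def drift(previous: Set[Edge], current: Set[Edge]) -> Dict[str, Set[Edge]]:
    """Compare two snapshots of the dependency graph taken at different times."""
    return {"added": current - previous, "removed": previous - current}
```

Modules with high fan‑in are natural candidates for the “core” label, and any unexpected entry under `added` is worth reviewing before the next refactoring sprint.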

Step 3 – AI‑driven technical‑debt and risk scan

AI‑native refactoring tools:

- Scan for code smells (e.g., god classes, long methods, duplicated blocks).
- Flag security‑related patterns (e.g., hard‑coded credentials, unvalidated inputs).
- Highlight areas with low test coverage, or with high churn but little documentation.
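A simple rule‑based scan gives a feel for the kind of findings these tools report. The sketch below uses Python's standard `ast` module to flag long functions, classes with very many methods, and string literals assigned to secret‑looking names. The thresholds, the regular expression, and the `Finding` type are illustrative assumptions, not the rules of any particular tool.

```python
import ast
import re
from dataclasses import dataclass
from typing import List

# Illustrative thresholds; teams would tune these per codebase.
MAX_FUNCTION_LINES = 50
MAX_CLASS_METHODS = 20
SECRET_NAME = re.compile(r"(password|passwd|secret|api_key|token)", re.IGNORECASE)


@dataclass
class Finding:
    kind: str
    name: str
    line: int


def scan(source: str) -> List[Finding]:
    """Return code-smell and credential findings for one Python source file."""
    findings: List[Finding] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = (node.end_lineno or node.lineno) - node.lineno + 1
            if length > MAX_FUNCTION_LINES:
                findings.append(Finding("long-method", node.name, node.lineno))
        elif isinstance(node, ast.ClassDef):
            methods = [n for n in node.body if isinstance(n, (ast.FunctionDef, ast.AsyncFunctionDef))]
            if len(methods) > MAX_CLASS_METHODS:
                findings.append(Finding("god-class", node.name, node.lineno))
        elif isinstance(node, ast.Assign):
            # A string literal assigned to a secret-looking name is a likely hard-coded credential.
            if isinstance(node.value, ast.Constant) and isinstance(node.value.value, str):
                for target in node.targets:
                    if isinstance(target, ast.Name) and SECRET_NAME.search(target.id):
                        findings.append(Finding("hard-coded-credential", target.id, node.lineno))
    return findings
```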

Engineers then:

- Prioritise hotspots by business impact and risk, and
- Decide which modules will be re‑architected and which will be replaced outright.
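One simple way to rank hotspots is to combine change frequency, complexity, test coverage, and business weight into a single score. The formula below is an illustrative assumption rather than a standard metric; the `ModuleStats` fields would come from version control, a static‑analysis tool, a coverage report, and product owners respectively.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ModuleStats:
    name: str
    churn: int              # commits touching the module over a recent period
    complexity: float       # e.g. summed cyclomatic complexity from a static-analysis tool
    coverage: float         # line coverage between 0.0 and 1.0
    business_weight: float  # agreed with product owners, e.g. 1 (peripheral) to 5 (revenue-critical)


def risk_score(m: ModuleStats) -> float:
    """Higher score means refactor sooner: complex, frequently changed, untested, critical code ranks first."""
    return m.churn * m.complexity * (1.0 - m.coverage) * m.business_weight


def prioritise(modules: List[ModuleStats], top_n: int = 3) -> List[str]:
    """Return the names of the top_n modules by risk score."""
    ranked = sorted(modules, key=risk_score, reverse=True)
    return [m.name for m in ranked[:top_n]]
```

Multiplying the factors means a module only ranks highly when it scores badly on several of them at once; teams that prefer a gentler weighting could use a weighted sum instead.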

Step 4 – Design AI‑assisted refactoring patterns

Instead of guessing how to change thousands of files, AI‑native refactoring relies on pattern‑based transformation:

- Common patterns might include:
  - “Extract shared logic into a service,”
  - “Replace this legacy data‑access layer with an ORM‑style abstraction,”
  - “Wrap these APIs in a facade so the legacy component can be swapped later.”
- These patterns are encoded as scripts or templates so they can be applied consistently across the codebase.
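As an example of a pattern encoded as a script, the sketch below rewrites calls on a hypothetical `legacy_db` module so that they go through a hypothetical `customer_repo` facade instead. It uses Python's `ast.NodeTransformer` and `ast.unparse` (Python 3.9+). Because `ast` does not keep comments or original formatting, dedicated codemod tooling is usually preferable on real code; the sketch only shows the principle.

```python
import ast
from typing import Dict, Tuple

# Hypothetical pattern: (old object, old method) -> (new object, new method).
CALL_RENAMES: Dict[Tuple[str, str], Tuple[str, str]] = {
    ("legacy_db", "fetch_customer"): ("customer_repo", "get"),
    ("legacy_db", "save_customer"): ("customer_repo", "save"),
}


class RedirectLegacyCalls(ast.NodeTransformer):
    """Rewrite legacy data-access calls to go through the facade."""

    def __init__(self) -> None:
        self.rewrites = 0

    def visit_Call(self, node: ast.Call) -> ast.AST:
        self.generic_visit(node)  # rewrite nested calls (e.g. in arguments) first
        func = node.func
        if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
            key = (func.value.id, func.attr)
            if key in CALL_RENAMES:
                new_obj, new_attr = CALL_RENAMES[key]
                node.func = ast.Attribute(
                    value=ast.Name(id=new_obj, ctx=ast.Load()), attr=new_attr, ctx=ast.Load()
                )
                ast.copy_location(node.func, func)
                self.rewrites += 1
        return node


def apply_pattern(source: str) -> Tuple[str, int]:
    """Apply the rename pattern to one file; return the new source and the number of rewrites."""
    transformer = RedirectLegacyCalls()
    tree = ast.fix_missing_locations(transformer.visit(ast.parse(source)))
    return ast.unparse(tree), transformer.rewrites
```

Running the same transformer over every file is what makes the change consistent, and the per‑file rewrite count gives reviewers a quick sanity check.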

Step 5 – Incremental execution and testing

Refactoring is done in small, safe increments:

- One bounded context at a time,
- One pattern at a time.

Automated tests are run before and after each change.

In AI‑driven environments, AI‑assisted testing tools:

- Generate boundary‑case test data,
- Predict likely regression points, and
- Help verify that refactored components still behave correctly.
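A common way to check that a refactored component still behaves correctly is a parity (characterisation) test: run the legacy and refactored implementations on the same inputs and compare the results. In this sketch a small deterministic generator of boundary values stands in for AI‑generated test data, and `legacy_fee` and `refactored_fee` are hypothetical versions of one business rule before and after refactoring.

```python
from typing import Callable, List, Tuple


def legacy_fee(amount_cents: int) -> int:
    # Hypothetical legacy rule: 1.5% fee, minimum 30 cents, capped at 500 cents.
    fee = amount_cents * 15 // 1000
    if fee < 30:
        fee = 30
    if fee > 500:
        fee = 500
    return fee


def refactored_fee(amount_cents: int) -> int:
    # Hypothetical refactored version of the same rule.
    return min(max(amount_cents * 15 // 1000, 30), 500)


def boundary_inputs(thresholds: List[int]) -> List[int]:
    """Zero plus the values just below, at, and just above each threshold."""
    values = {0}
    for t in thresholds:
        values.update({t - 1, t, t + 1})
    return sorted(v for v in values if v >= 0)


def parity_check(
    old: Callable[[int], int], new: Callable[[int], int], inputs: List[int]
) -> List[Tuple[int, int, int]]:
    """Return (input, old result, new result) for every input where the implementations disagree."""
    return [(x, old(x), new(x)) for x in inputs if old(x) != new(x)]
```

Here the thresholds would be 2000 (where the minimum fee stops applying) and 33334 (where the cap starts applying); an empty result from `parity_check` means the refactoring preserved behaviour on those edge cases.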

Step 6 – Observability and governance

Once modernised components are live:

- Logs, metrics, and traces are standardised.
- Key business‑level indicators (e.g., transaction latency, error rate) are monitored.

AI‑driven dashboards can:

- Flag performance degradation after a refactoring sprint, and
- Suggest a rollback or identify safe rollback points.
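The “flag degradation, suggest rollback” step can be expressed as a comparison between a baseline window and the window after a release. The sketch below assumes p95 latency and error rate as the signals, with a 20% latency tolerance and a 1% error budget; those thresholds and the `Window` type are illustrative assumptions, and a real dashboard might tune them automatically rather than hard‑code them.

```python
import statistics
from dataclasses import dataclass
from typing import List


@dataclass
class Window:
    latencies_ms: List[float]
    requests: int
    errors: int


def p95(values: List[float]) -> float:
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(values, n=20)[-1]


def should_roll_back(
    baseline: Window,
    current: Window,
    max_latency_increase: float = 0.20,
    max_error_rate: float = 0.01,
) -> bool:
    """Flag a rollback if p95 latency grew beyond tolerance or the error rate exceeds the budget."""
    latency_regressed = p95(current.latencies_ms) > p95(baseline.latencies_ms) * (1 + max_latency_increase)
    error_rate = current.errors / current.requests if current.requests else 0.0
    return latency_regressed or error_rate > max_error_rate
```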

Benefits of AI‑driven refactoring and modernisation for enterprises

Business‑level benefits

- Lower technical debt and faster delivery
  - Clearer architecture and dependencies reduce the “time to understand” for new developers.
  - Delivery velocity increases as the system becomes less fragile.
- Improved security and compliance posture
  - Known‑vulnerable patterns and outdated libraries are systematically identified and replaced.
  - Audit trails and observability make it easier to demonstrate compliance.
- Higher engineer productivity and morale
  - Working on a cleaner, better‑structured codebase is less stressful and more rewarding.
  - Onboarding is faster because dependencies and flows are better documented (often with AI‑generated docs).
- Scalability and future‑proofing
  - Modernised systems are easier to integrate with AI‑driven features, cloud services, and SaaS products.
  - Growth‑driven changes (e.g., adding new markets, payment gateways, or regulatory workflows) become less painful.

Imagine a healthcare platform that has been AI‑natively refactored.

New patient‑engagement features can be added in weeks instead of months, and every new developer can understand the core logic in a fraction of the time that was required before.

Risks and challenges (and how to mitigate them)

Common risks in AI‑native refactoring

-

Over‑automation and loss of human judgment

-

Not every refactoring suggestion from an AI tool is safe or desirable.

-

Business‑logic nuances may be missed if AI outputs are treated as commands instead of guidance.

-

-

Regression and unintended side‑effects

-

Aggressive pattern‑based refactoring can unexpectedly change behavior.

-

Business‑critical workflows may break if edge‑cases are overlooked.

-

-

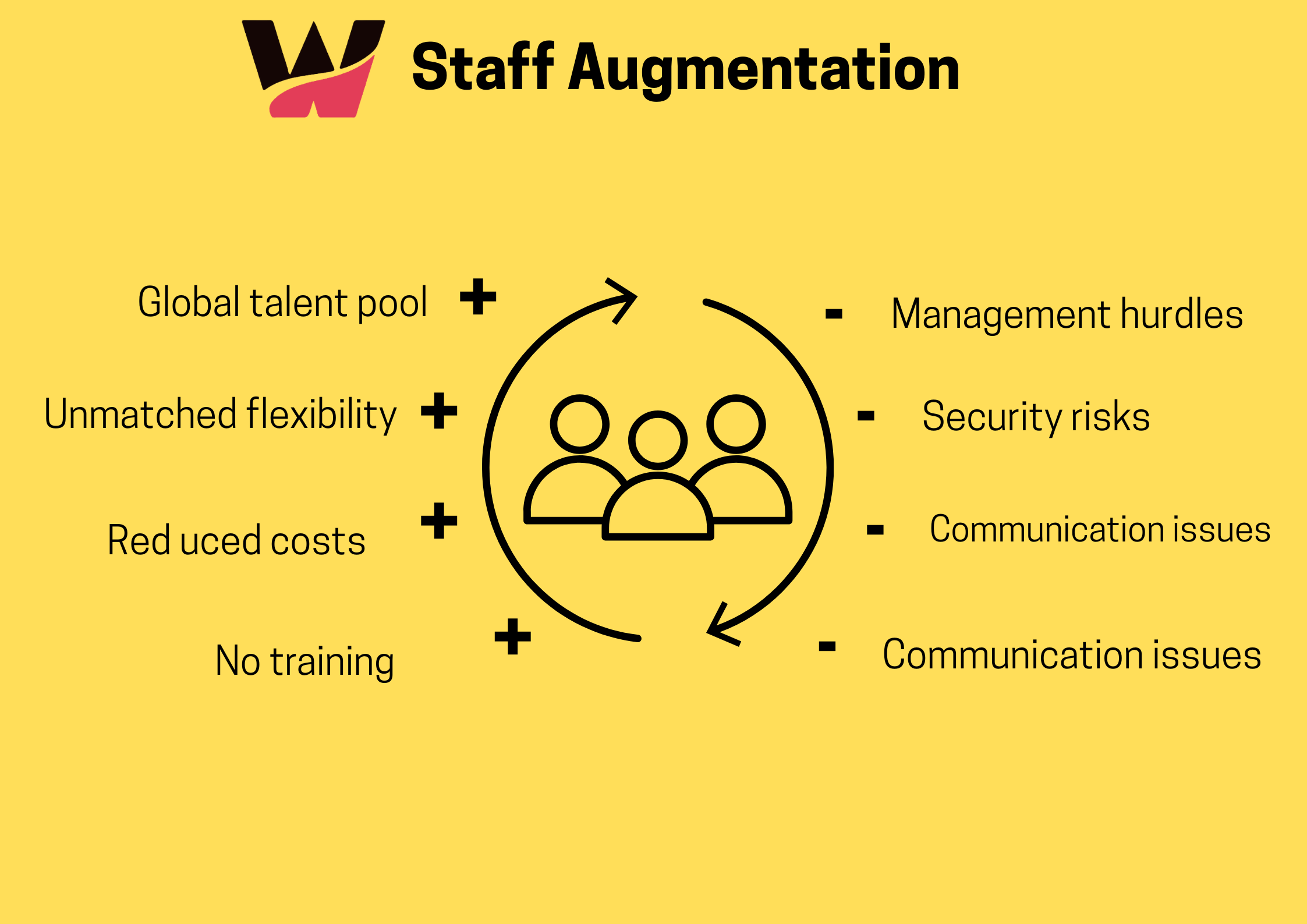

Knowledge‑transfer and ownership gaps

-

If refactoring is done by temporary experts (via staff augmentation), permanent teams may not fully understand the new architecture.

-

How to mitigate these risks

-

Keep humans in the loop

-

AI‑tools should be treated as “advisors,” not “autonomous‑refactoring bots.”

-

Critical changes should be peer‑reviewed and step‑by‑step‑approved.

-

-

Invest in testing and observability

-

Comprehensive test‑suites, both unit and integration‑level, should be maintained.

-

Real‑time monitoring should be in place before and after refactoring.

-

-

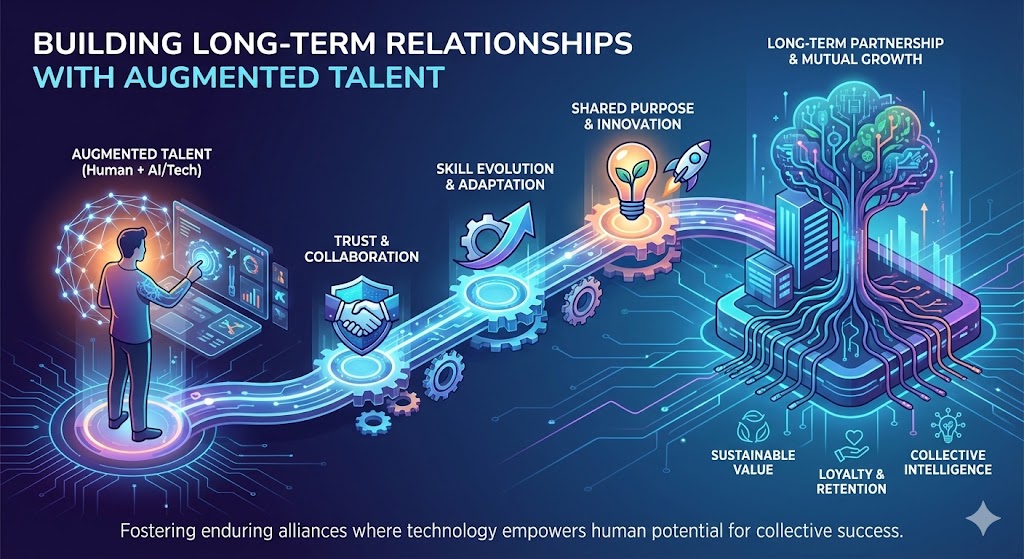

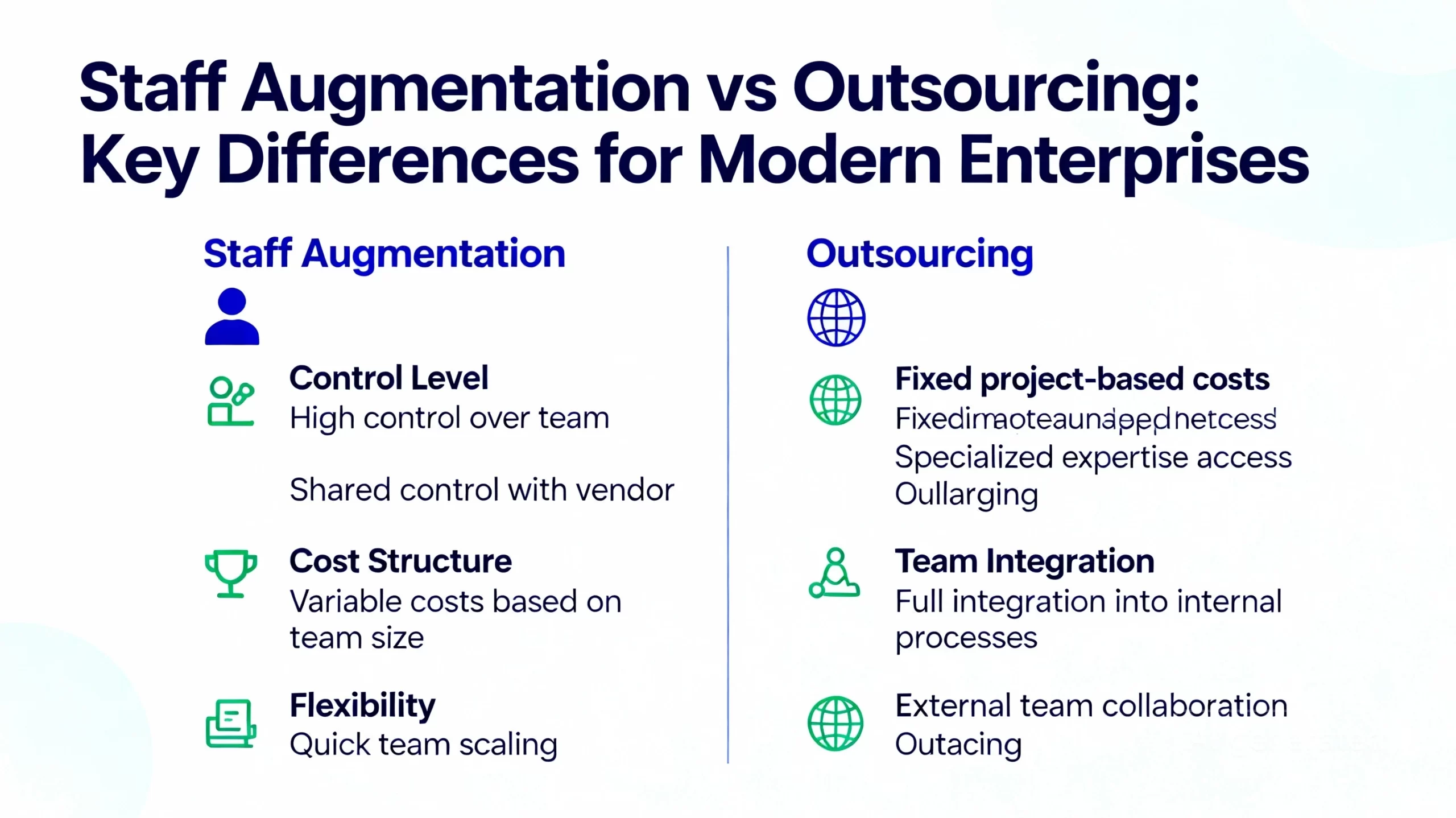

Structure staff augmentation as a knowledge‑building engagement

-

When IT staff augmentation companies provide AI‑native engineers, the engagement should be designed to transfer skills and understanding to internal teams.

-

Pair‑programming, documentation‑sprints, and post‑sprint knowledge‑handover sessions are highly effective.

-
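Keeping humans in the loop can be enforced in tooling rather than left to convention. A minimal sketch, assuming a hypothetical `Suggestion` record for each AI‑proposed change: the change may only be applied once enough distinct reviewers have approved it (two for high‑risk changes, in this example) and the test suite has passed.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Illustrative policy: number of human approvals required per risk level.
REQUIRED_APPROVALS = {"low": 1, "medium": 1, "high": 2}


@dataclass
class Suggestion:
    id: str
    description: str
    risk: str  # "low", "medium" or "high", e.g. as estimated by the analysis tool
    approvals: List[str] = field(default_factory=list)
    tests_passed: Optional[bool] = None  # None until the test suite has run


def approve(s: Suggestion, reviewer: str) -> None:
    """Record a reviewer's approval; the same reviewer is only counted once."""
    if reviewer not in s.approvals:
        s.approvals.append(reviewer)


def can_apply(s: Suggestion) -> bool:
    """An AI suggestion is applied only after enough human sign-offs and a green test run."""
    return len(s.approvals) >= REQUIRED_APPROVALS[s.risk] and s.tests_passed is True
```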

Case‑style examples and scenarios

Example 1 – Banking core‑processing legacy system

A commercial bank runs its core transaction processing on a 20‑year‑old monolith.

The business is:

- Unable to add new payment channels quickly, and
- Constantly worried about outage risk during peak‑load periods.

An AI‑native refactoring engagement is launched:

- The AI analysis phase maps the transaction lifecycle, data stores, and integrations.
- Technical‑debt hotspots (e.g., a single massive “transaction‑engine” class) are flagged.
- A phased refactoring plan is created that keeps high‑risk payment flows legacy‑compatible behind a modern API layer.

Over 18 months, the core system is gradually modernised, with AI‑driven tools:

- Helping to extract services,
- Guiding tests, and
- Helping to verify that all business‑level SLAs are preserved.
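A modern API layer in front of a legacy engine often comes down to an adapter that translates between a clean request model and the legacy record format. The sketch below assumes a hypothetical fixed‑width payment record (the field names and widths in `LEGACY_LAYOUT` are invented for illustration, in the style of older mainframe interfaces) and rejects values that do not fit rather than silently truncating them.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, List, Tuple

# Hypothetical fixed-width layout expected by the legacy engine: (field name, width).
LEGACY_LAYOUT: List[Tuple[str, int]] = [
    ("account", 12),
    ("amount_cents", 15),
    ("currency", 3),
    ("channel", 4),
]


@dataclass(frozen=True)
class PaymentRequest:
    """Request model exposed by the modern API layer."""
    account: str
    amount: Decimal
    currency: str
    channel: str


def to_legacy_record(req: PaymentRequest) -> str:
    """Translate a modern API request into the legacy fixed-width record."""
    if req.amount <= 0:
        raise ValueError("amount must be positive")
    cents = req.amount * 100
    if cents != cents.to_integral_value():
        raise ValueError("amount has sub-cent precision")
    widths = dict(LEGACY_LAYOUT)
    fields = {
        "account": req.account.ljust(widths["account"]),
        "amount_cents": str(int(cents)).zfill(widths["amount_cents"]),
        "currency": req.currency.upper(),
        "channel": req.channel.upper().ljust(widths["channel"]),
    }
    parts = []
    for name, width in LEGACY_LAYOUT:
        # Reject rather than truncate: silent truncation in a payment record is dangerous.
        if len(fields[name]) != width:
            raise ValueError(f"{name!r} does not fit the {width}-character field")
        parts.append(fields[name])
    return "".join(parts)


def from_legacy_record(record: str) -> Dict[str, str]:
    """Split a legacy record back into named fields, e.g. for responses or audits."""
    out: Dict[str, str] = {}
    pos = 0
    for name, width in LEGACY_LAYOUT:
        out[name] = record[pos:pos + width].strip()
        pos += width
    return out
```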

Example 2 – E‑commerce platform with legacy tax‑engine

An e‑commerce platform has a legacy tax‑computation engine that is hard to maintain.

New markets and VAT rules cannot be added quickly enough.

AI‑native refactoring is used to:

- Decompose the tax engine into a rule‑based service,
- Replace handwritten logic with a configurable rules‑engine schema, and
- Keep the legacy implementation as a fallback for a short transition.
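The move from handwritten logic to a configurable rules schema with a legacy fallback can be sketched as follows. `VAT_RULES`, `legacy_tax`, and the category names are hypothetical; the German and French rates are illustrative only and would in practice be loaded from a maintained, audited source rather than hard‑coded.

```python
from decimal import ROUND_HALF_UP, Decimal
from typing import Callable, Dict, Optional

CENT = Decimal("0.01")

# Hypothetical rules schema: configuration data instead of hand-written branches.
# Rates are illustrative; real rules would come from a maintained source.
VAT_RULES: Dict[str, Dict[str, Decimal]] = {
    "DE": {"standard": Decimal("0.19"), "reduced": Decimal("0.07")},
    "FR": {"standard": Decimal("0.20"), "reduced": Decimal("0.055")},
}


def legacy_tax(country: str, category: str, net: Decimal) -> Decimal:
    # Hypothetical stand-in for the old hand-written engine, kept as a fallback.
    return (net * Decimal("0.20")).quantize(CENT, rounding=ROUND_HALF_UP)


def rules_tax(country: str, category: str, net: Decimal) -> Optional[Decimal]:
    """Look up the configured rate; return None when no rule exists yet."""
    rate = VAT_RULES.get(country, {}).get(category)
    if rate is None:
        return None
    return (net * rate).quantize(CENT, rounding=ROUND_HALF_UP)


def compute_tax(
    country: str,
    category: str,
    net: Decimal,
    fallback: Callable[[str, str, Decimal], Decimal] = legacy_tax,
) -> Decimal:
    """Prefer the configured rule; fall back to the legacy engine during the transition."""
    result = rules_tax(country, category, net)
    return result if result is not None else fallback(country, category, net)
```

Adding a market then becomes a change to configuration data instead of code, and the fallback can be removed once every market the platform sells into has a rule.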

The result is:

- Faster time‑to‑market for new markets,
- Lower risk of tax‑calculation errors, and
- A cleaner integration surface for future AI‑driven pricing or promotional engines.

These examples are illustrative, but they mirror the kinds of legacy application modernisation initiatives that organisations are undertaking with AI‑native tools in 2026.

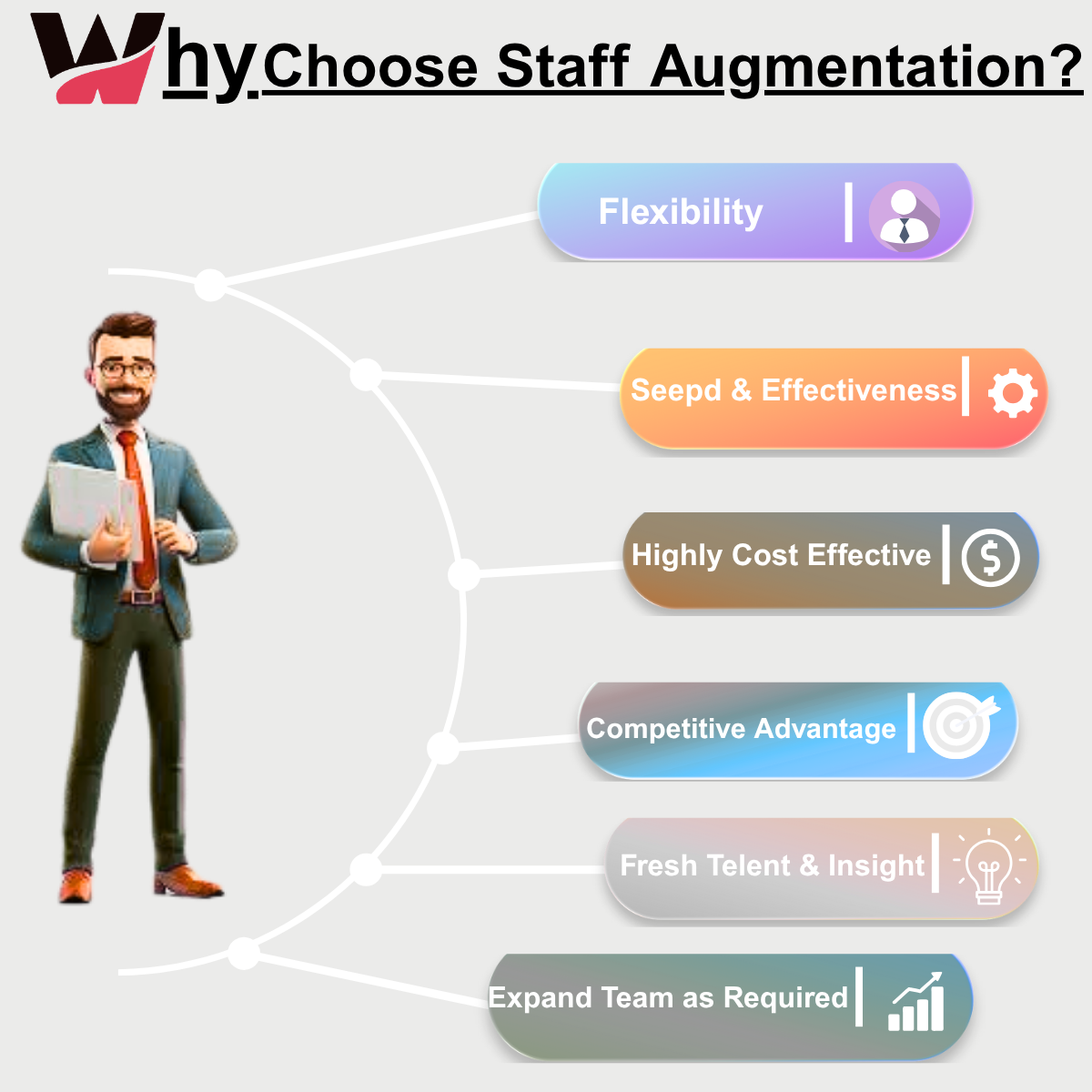

How staff augmentation services fit into AI‑native refactoring and modernisation

Staff augmentation for AI‑driven development and modernisation

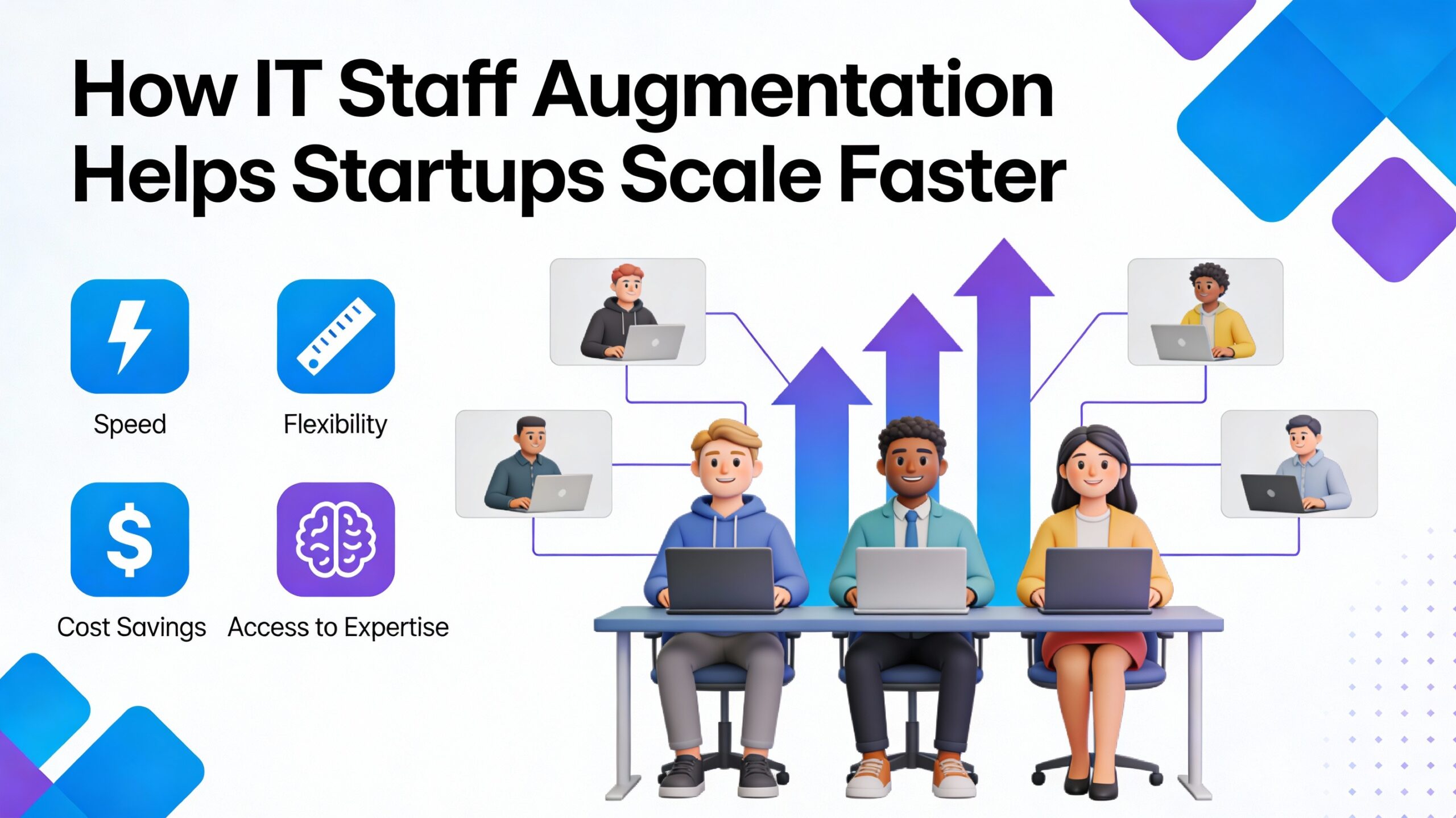

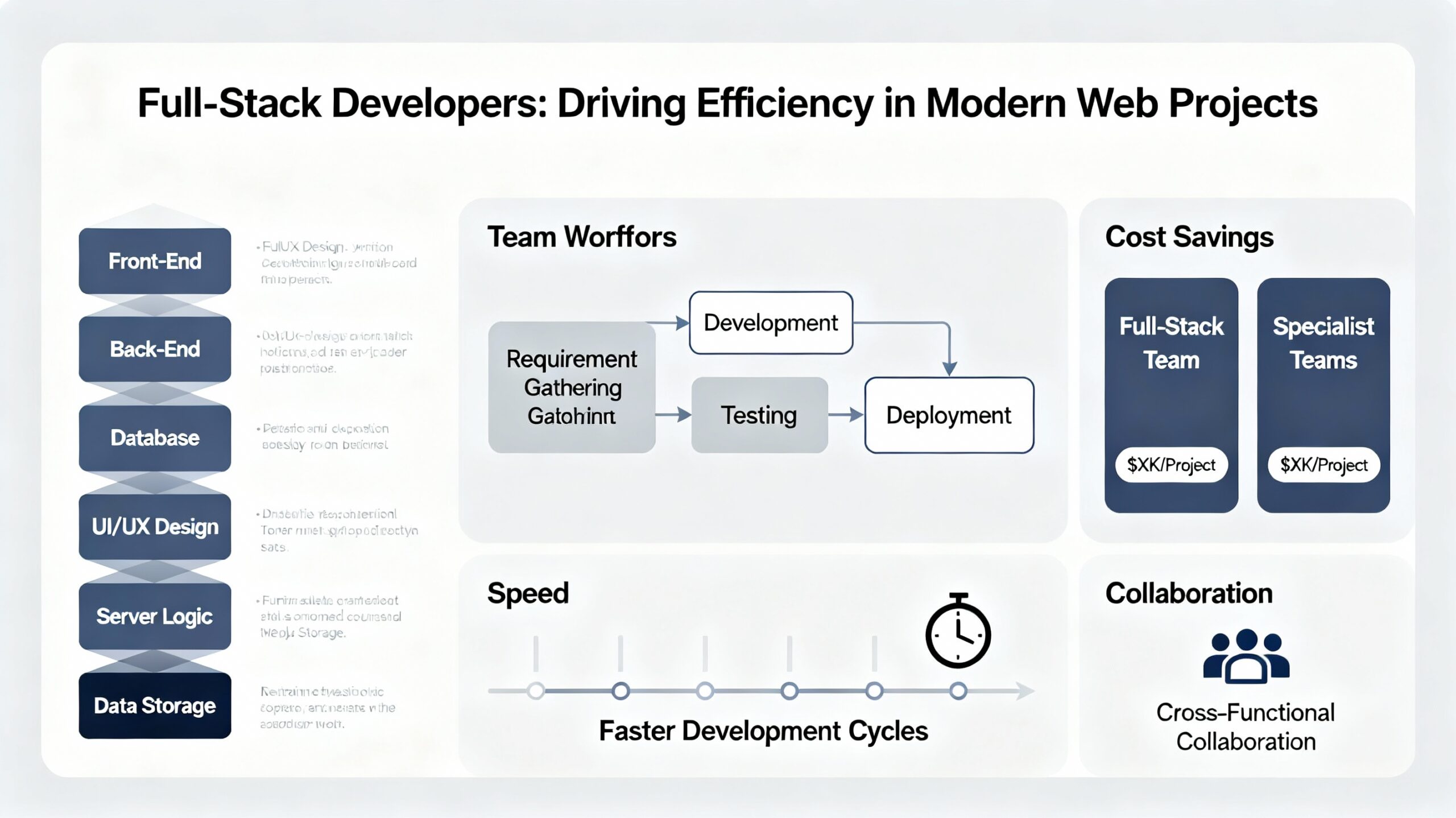

AI‑native refactoring and enterprise software modernisation are not jobs for a single team.

They require:

- AI engineers and data scientists who can work with code‑analysis tools and AI‑driven refactoring patterns.
- Legacy‑modernisation specialists who understand old frameworks and design patterns.
- Cloud engineers to design and implement modern architecture foundations.

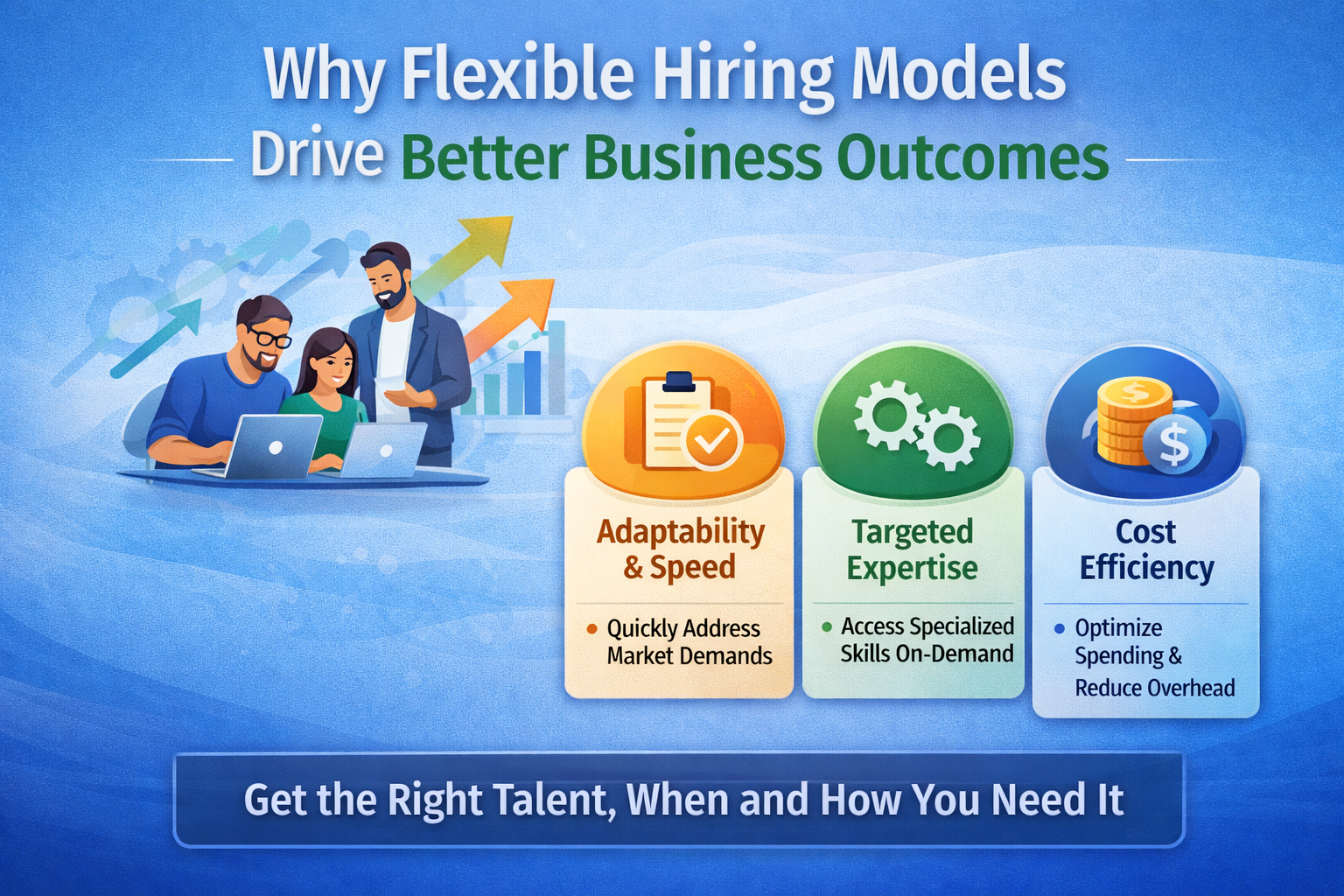

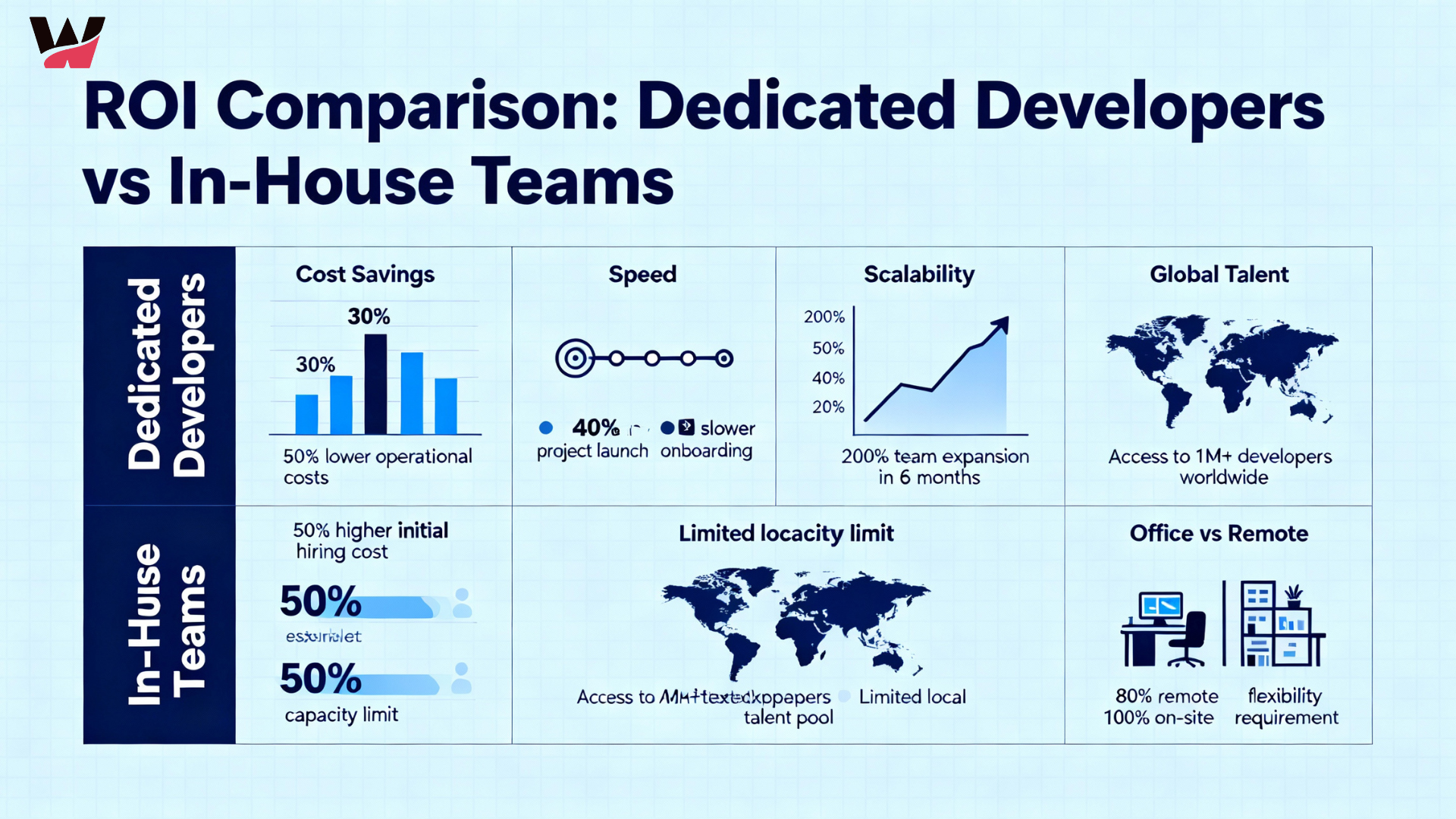

Most organisations cannot or should not hire all of this talent permanently.

That’s where staff augmentation services become essential.

Through IT staff augmentation companies, CTOs can:

- Temporarily scale up with AI‑native engineers, refactoring specialists, and cloud‑migration experts.
- Embed them into existing product and engineering teams.
- Release or redeploy them once the platform is stabilised.

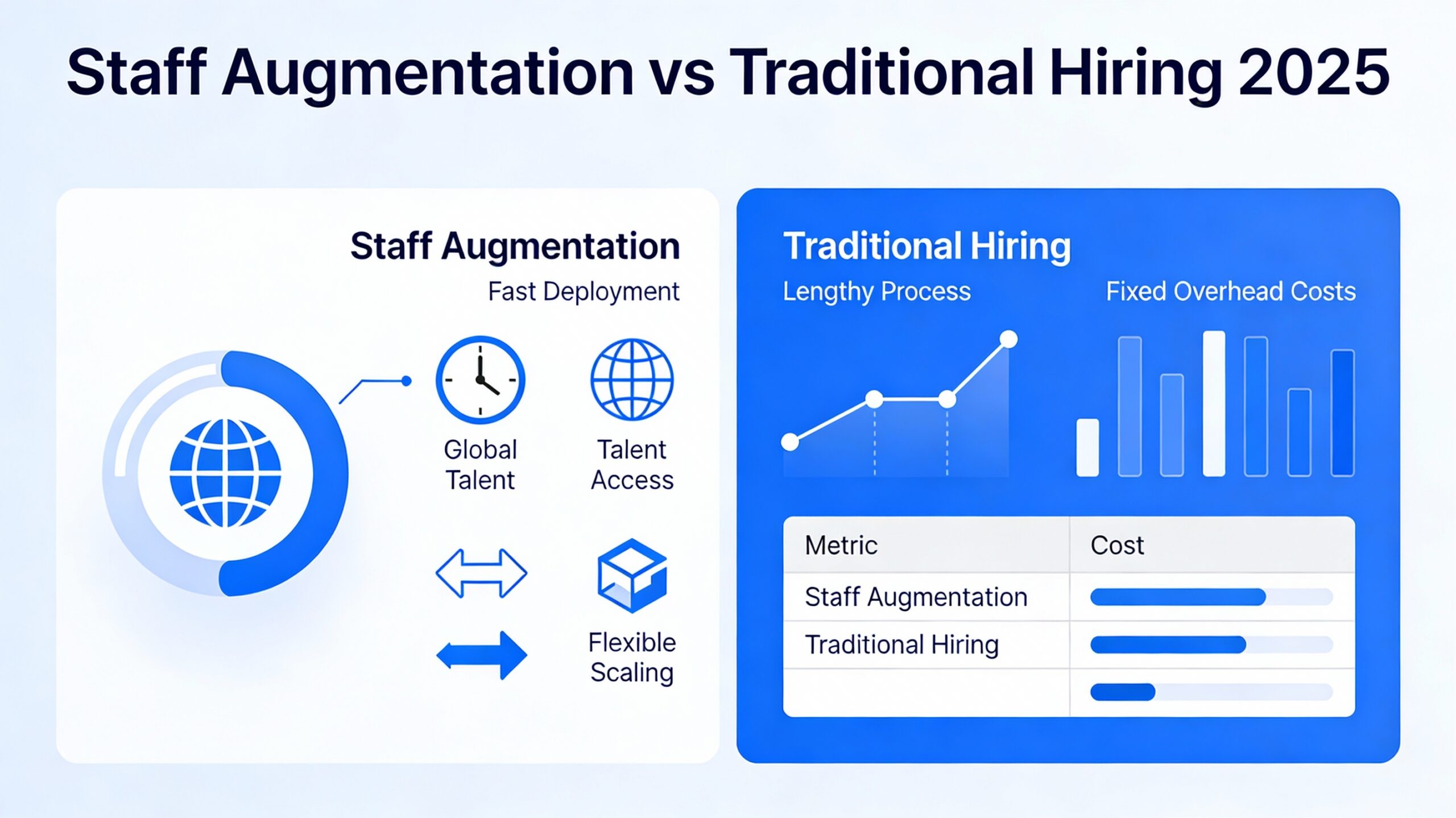

Staff augmentation process aligned with AI‑native modernisation

A well‑run staff augmentation process for AI‑driven legacy‑modernisation projects typically includes:

Needs assessment and scope definition

- Business and product teams define:
  - Which systems are priority candidates for AI‑native refactoring.
  - What business outcomes are expected (e.g., reduced downtime, faster feature delivery).
- Engineering and architecture teams assess:
  - The current stack, risk surface, and data‑flow complexity.

Role definition and sourcing

- Required skills are mapped:
  - AI engineers with experience in code analysis and AI‑driven refactoring,
  - Legacy‑modernisation experts,
  - Cloud architects and DevOps engineers.
- IT staff augmentation companies are engaged to:
  - Source and vet specialists with relevant experience.
  - Ensure they can work within the client’s governance processes and tools.

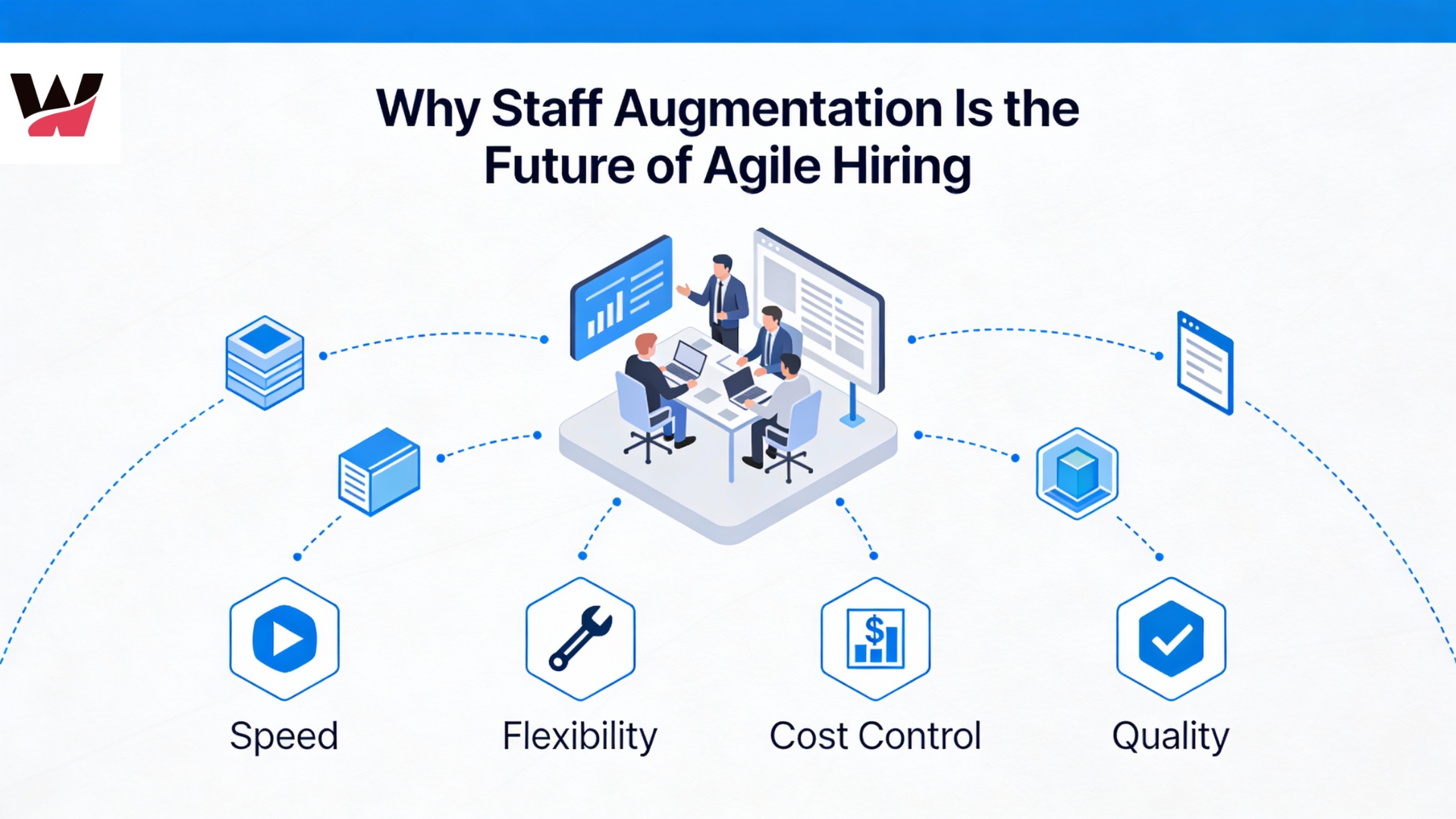

Embedded delivery and AI‑native refactoring

- Augmented staff are embedded into product and engineering squads.
- They work alongside permanent teams to:
  - Run AI‑driven code analysis,
  - Design and execute refactoring patterns, and
  - Build modern architecture foundations.

Testing, validation, and go‑live

- AI‑driven testing tools help detect regressions and cover edge cases.
- Testing with real‑world traffic, backed by observability, confirms that modernised components behave correctly.

Knowledge transfer and long‑term governance

- Once the platform is stable, augmented staff may be scaled back.
- Internal teams are trained in:
  - Maintaining AI‑natively modernised systems.

Call‑to‑Action (CTA) and internal links

If your organisation is ready to tackle enterprise software modernisation in 2026 with AI‑native refactoring, consider partnering with Witqualis.

- Explore how staff augmentation services can scale up AI‑native and legacy‑modernisation talent.
- Learn how US staff augmentation works.
- Discover AI‑driven development solutions that complement staff augmentation and legacy modernisation.

Enterprise software modernisation in 2026 is not an “if‑we‑have‑time” project; it is a strategic necessity for any organisation that wants to stay competitive, secure, and fast.

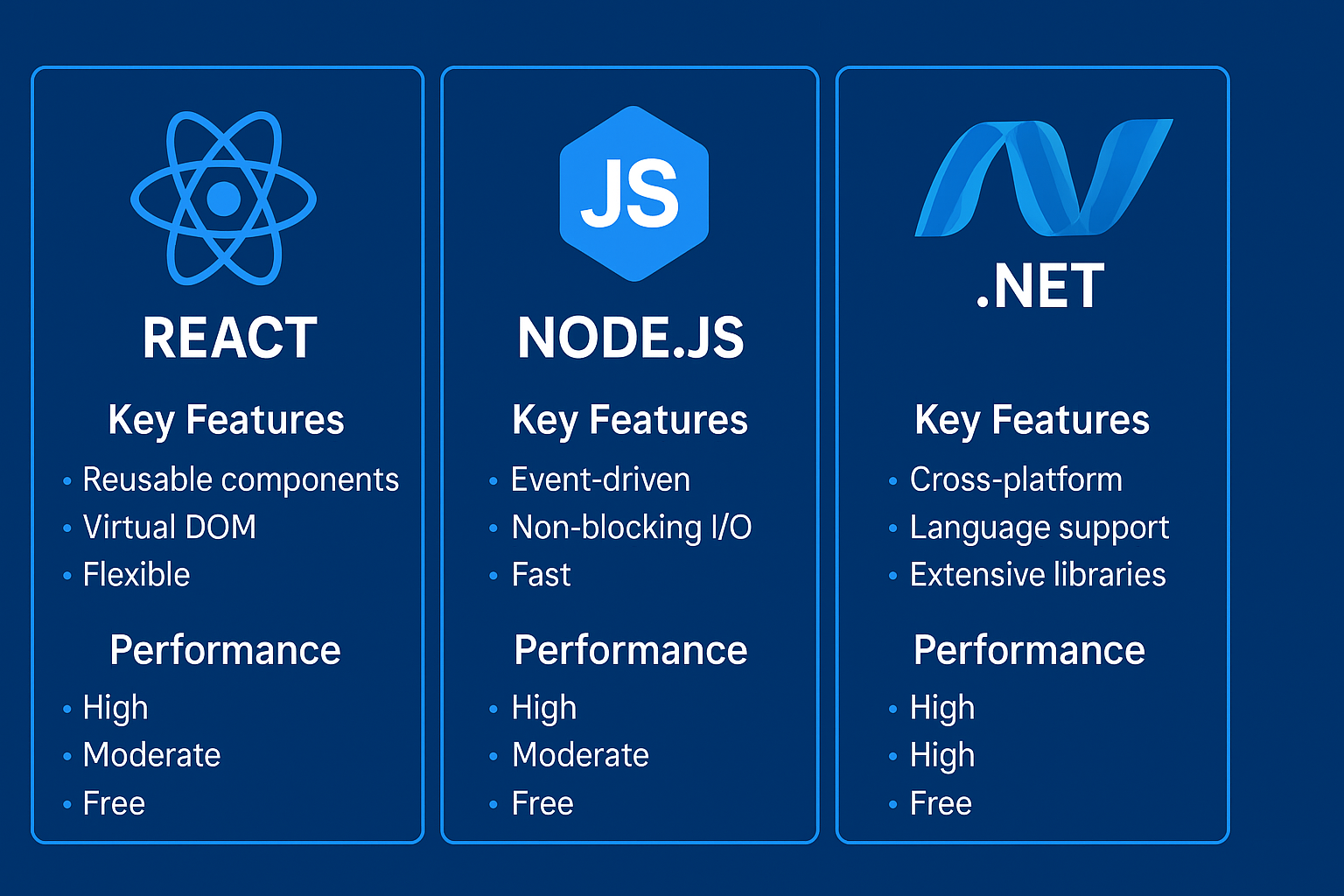
