AI‑native refactoring: re‑imagining legacy modernisation in 2026

Legacy systems are no longer a “future problem.”

In 2026, they are front‑row centrepieces in board‑room discussions, product‑strategy roadmaps, and CTO‑level risk‑assessments.

Many enterprises still run core business logic on codebases built 10, 15, or even 20 years ago.

These systems are:

- Hard to debug,
- Painful to extend,
- And expensive to maintain.

Traditional “manual refactoring” can’t keep pace with modern product‑velocity and AI‑driven innovation.

That’s where AI‑native refactoring steps in — not as a magic bullet, but as a structured, tool‑augmented evolution of how legacy systems are modernised.

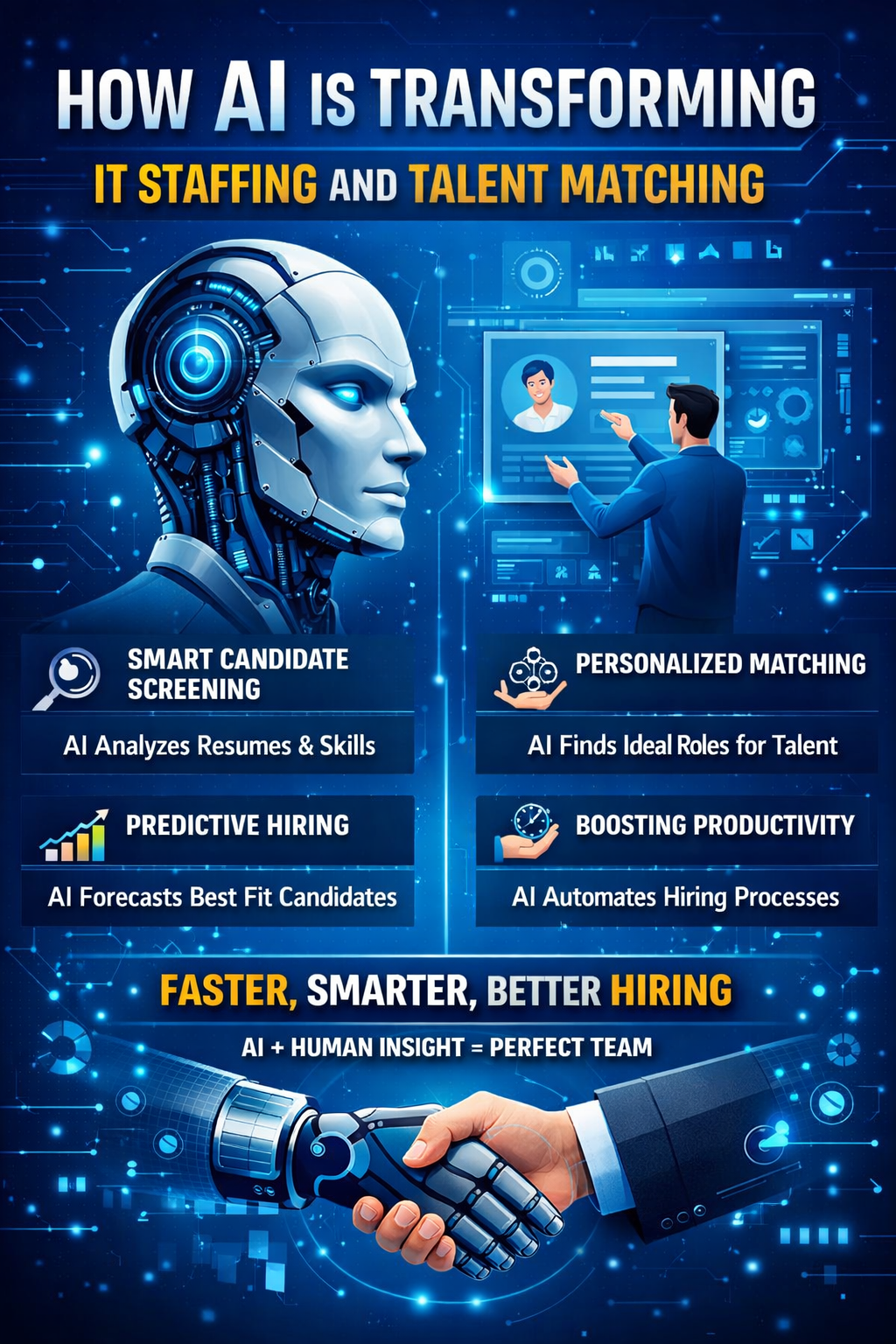

AI‑native refactoring uses AI‑driven analysis, code‑transformation, and pattern‑detection to:

- Systematically map the current legacy codebase,
- Identify technical‑debt hotspots,
- And guide modernisation decisions at scale.

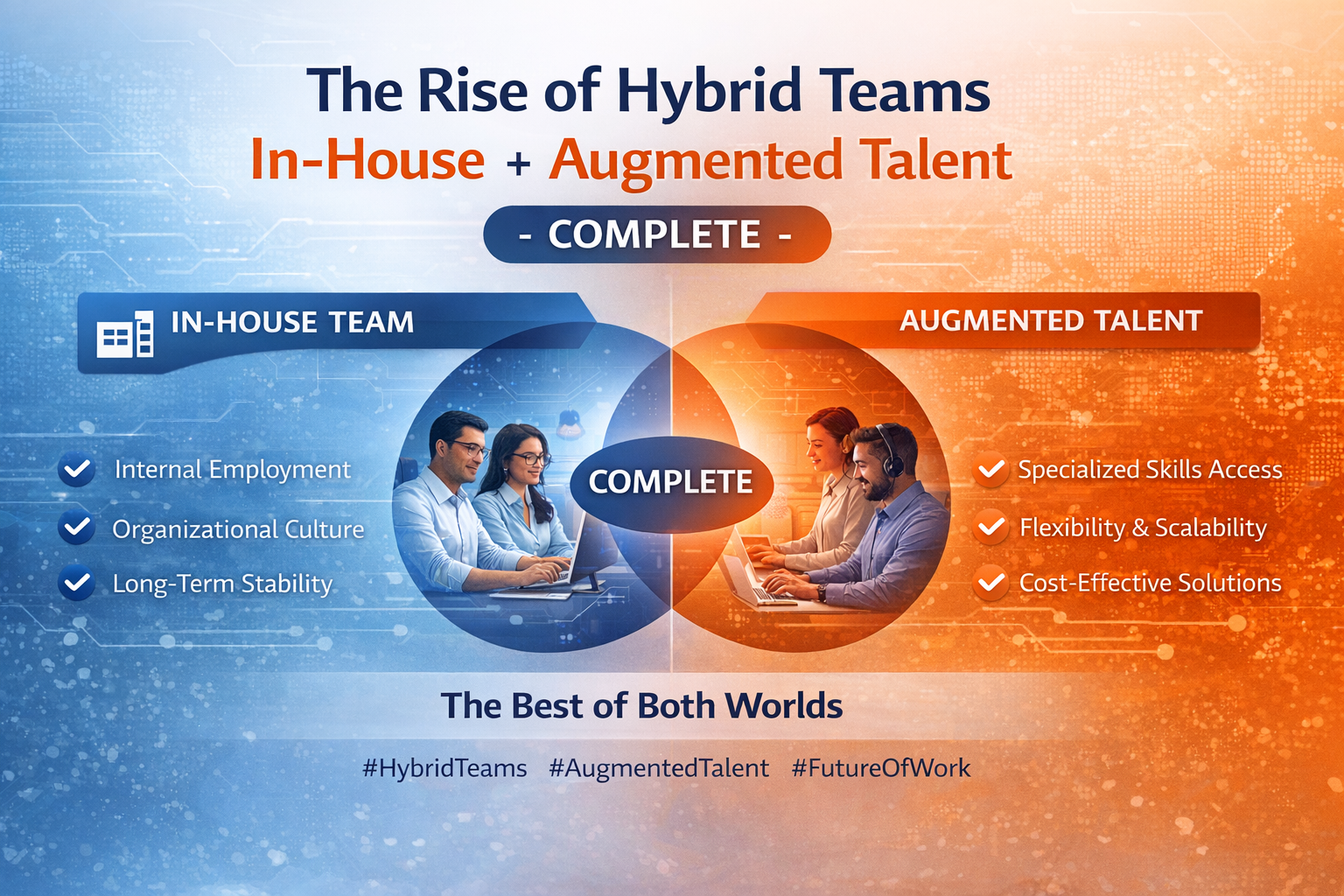

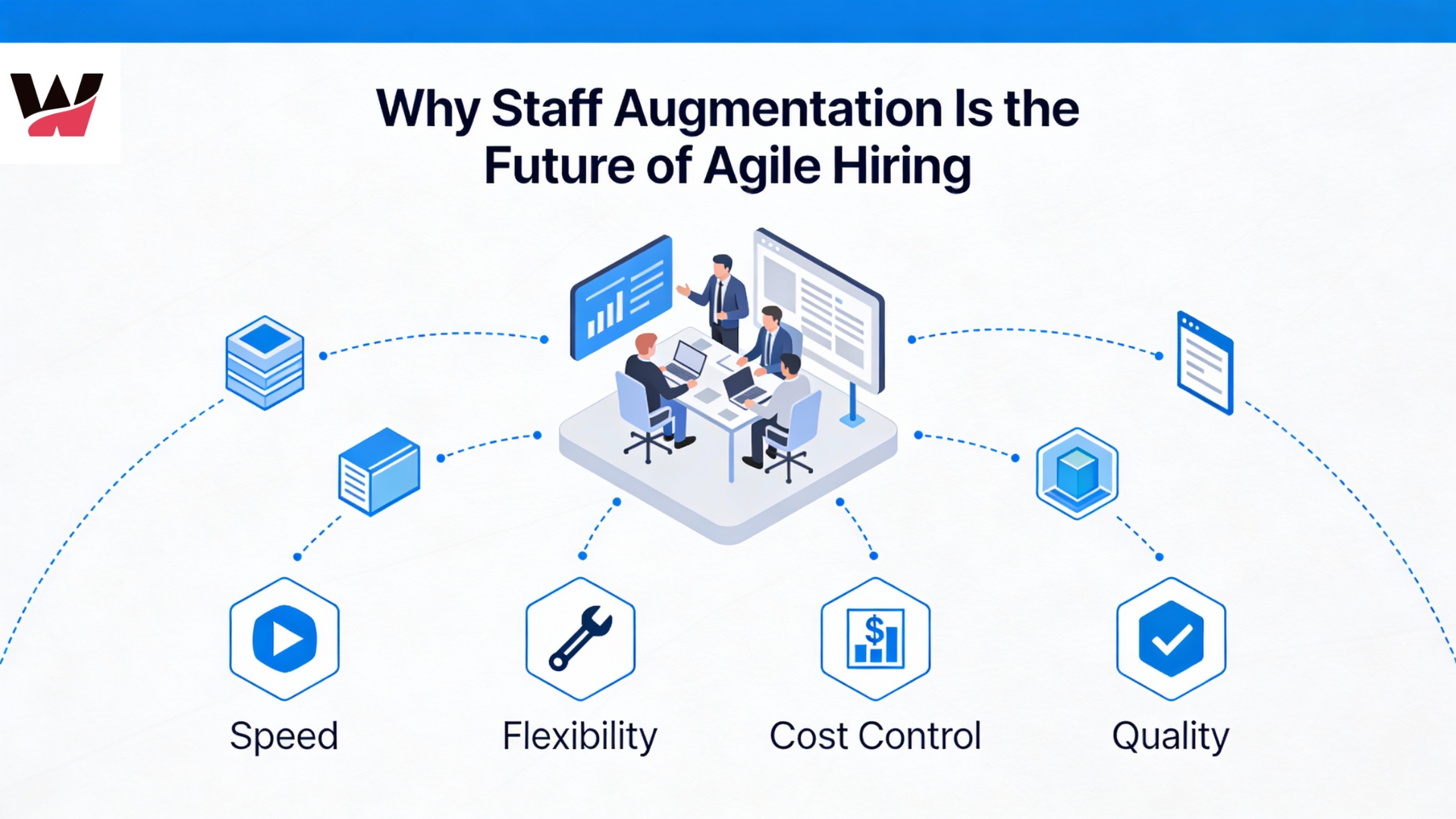

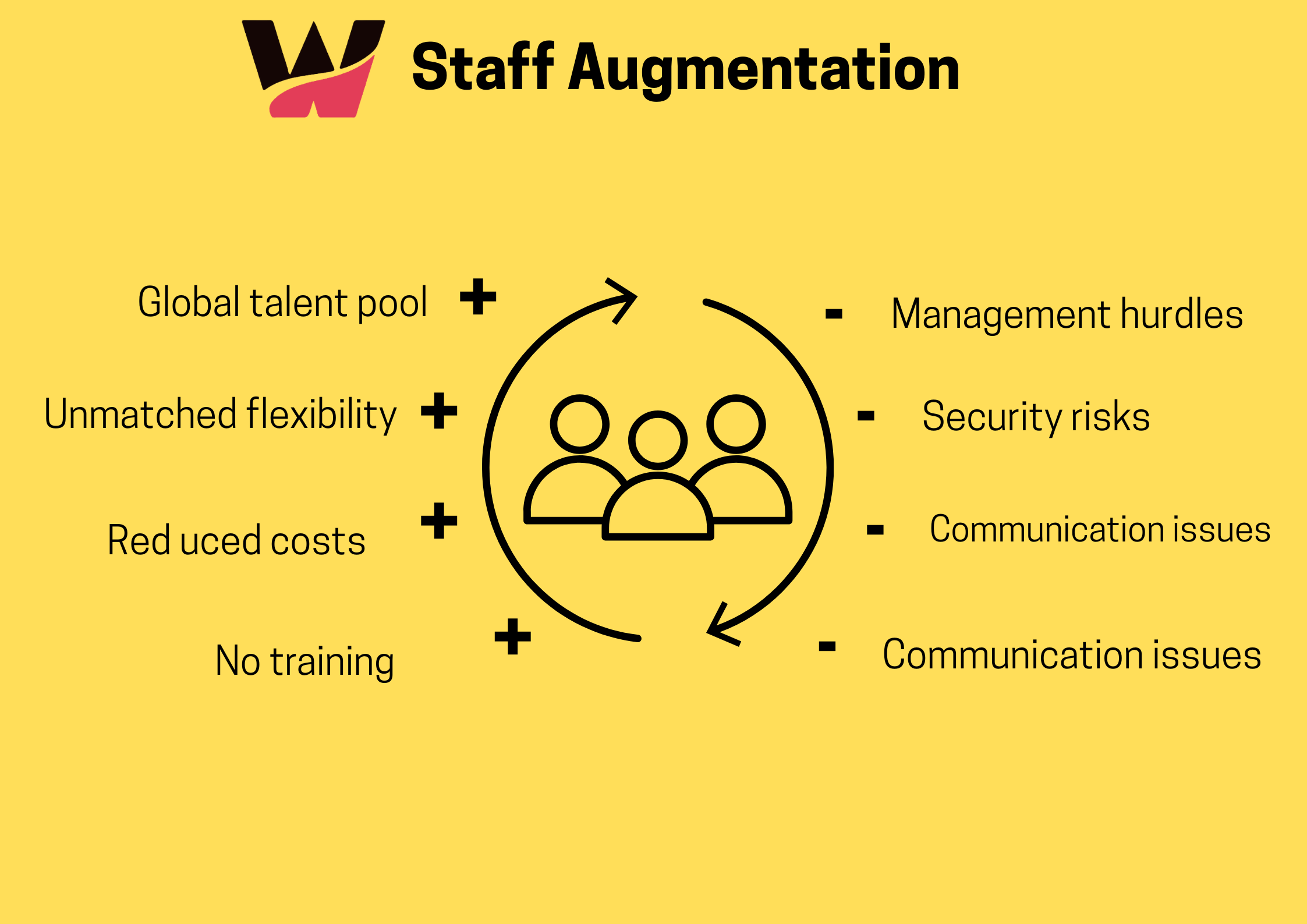

Behind the scenes, successful AI‑native refactoring often also relies on IT staff augmentation services.

Specialised AI‑native engineers, legacy‑modernisation experts, and cloud‑migration specialists are brought in via IT staff augmentation companies so that permanent teams can focus on product‑strategy while vendors handle the heavy‑lifting of modernisation.

Why legacy systems are a strategic problem in 2026

What “legacy” really means today

In 2026, a system is considered “legacy” not just because it is old, but because it:

- Has tightly coupled components everyone is afraid to touch.
- Uses outdated libraries, frameworks, or platforms with weak security and weak ecosystem support.
- Has minimal or undocumented APIs, making it hard to integrate with modern SaaS or cloud services.
- Depends on rare‑or‑retired skill sets (e.g., classic COBOL, bespoke scripting, expired vendor tools).

For CTOs, this is not only a technical risk — it is a business‑continuity and growth constraint.

Concrete pain points of legacy systems

- High operational cost and slow delivery
  - Even small change‑requests require weeks of analysis and regression‑testing.
  - Onboarding new engineers is slow because domain knowledge is tribal, not documented.
- Brittle integrations and shadow‑IT creep
  - New products and services are built on top of legacy systems via fragile scripts and one‑off connectors.
  - Workarounds become systems of record, deepening technical debt.
- Security and compliance gaps
  - Many legacy systems cannot be easily patched or hardened.
  - Older frameworks and libraries expose organisations to known vulnerabilities.
- Talent scarcity and knowledge‑loss risk
  - A handful of “institutional‑memory” engineers may be the only people who truly understand critical flows.
  - When these engineers retire or leave, outages and confusion spike.

Imagine a core billing platform that has been untouched for 15 years.

Not a single engineer on the current team fully understands the discount‑calculation engine, but the business can’t stop using it without risking revenue‑processing failures.

This is the typical legacy scenario that AI‑native refactoring aims to solve.

What is AI‑native refactoring?

AI‑native refactoring defined

AI‑native refactoring is the process of modernising legacy systems by:

- Analysing the existing codebase with AI‑driven tools,
- Generating refactoring recommendations, migration patterns, and code‑transformation scripts,
- and then guiding human engineers and automated systems through a controlled, incremental rewrite or lift‑and‑modernise.

Unlike “classic” refactoring — where each change is driven manually by a developer — AI‑native refactoring scales patterns across thousands of files, surfaces hidden coupling, and helps prioritise technical‑debt work.

How it differs from traditional refactoring

In practice, AI‑native refactoring often looks like:

- An AI‑driven tool scanning the codebase to surface duplicate logic, magic‑number‑bloat, hard‑coded dependencies, and security‑vulnerability‑prone code.
- A catalogue of refactoring opportunities being generated (e.g., “factor out this shared logic into a service,” “decouple this module from that framework”).
- Engineers then turning these AI‑generated recommendations into migration plans and incremental sprints.

How AI‑native refactoring works in practice

Phases of AI‑driven legacy modernisation

- Inventory and mapping
  - The entire legacy codebase is ingested into an AI‑driven analysis platform.
  - Dependencies, modules, APIs, and data‑flows are mapped and visualised.
- Technical‑debt and risk‑scan
  - AI tools flag:
    - Anti‑patterns,
    - Security vulnerabilities,
    - Undocumented interfaces,
    - and tightly‑coupled subsystems.
- Refactoring‑pattern catalogue
  - A set of standard refactoring templates is created for:
    - Extracting services,
    - Replacing legacy frameworks,
    - Normalising data‑access patterns,
    - Introducing observability and logging.

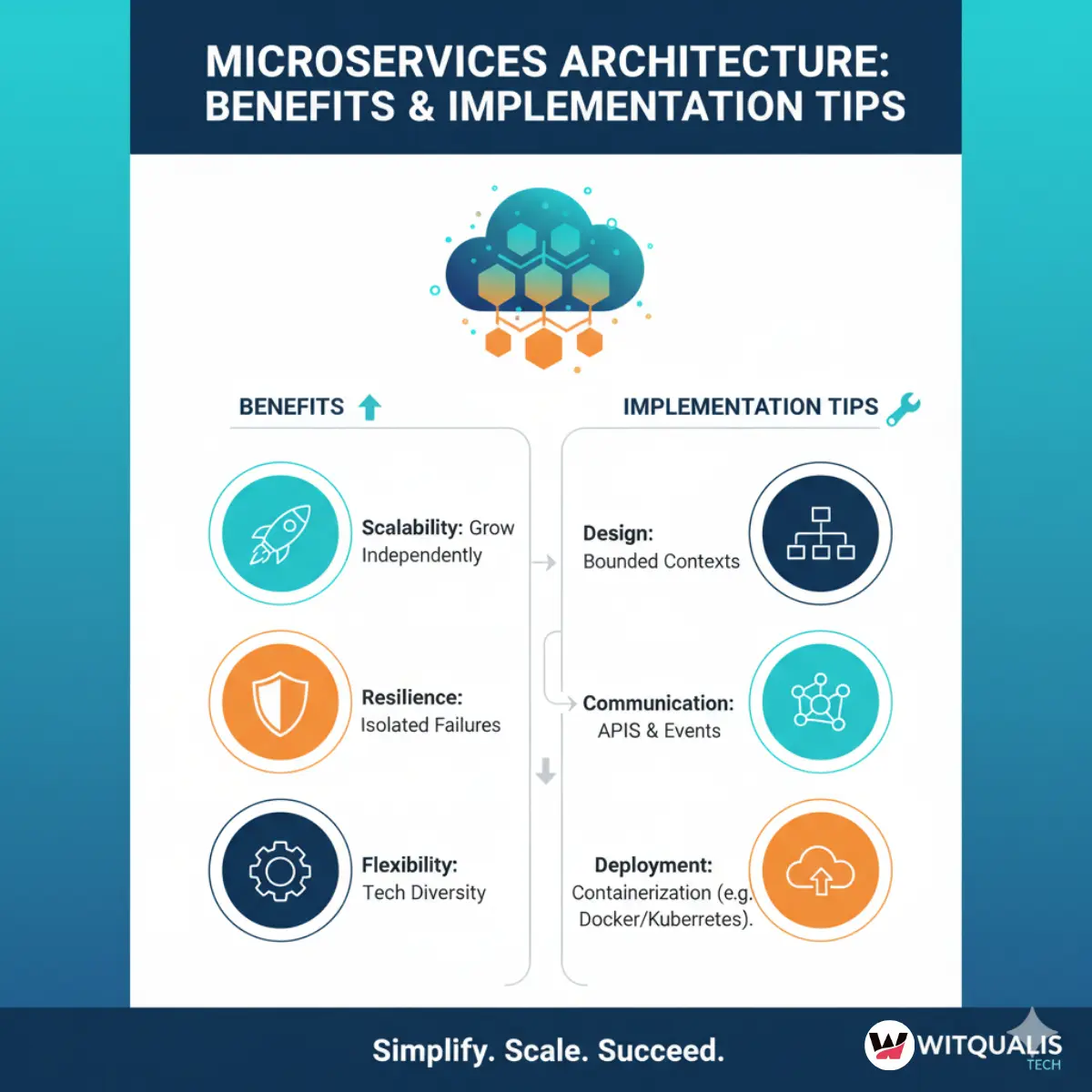

- Incremental rewrite or micro‑service‑extraction
  - Engineers prioritise one or a few bounded‑context areas.
  - New, modern‑stack components are built around or beside legacy modules.
- AI‑assisted testing and validation
  - AI tools help generate edge‑case test‑data,
  - Predict regressions,
  - and validate that business logic behaves the same after refactoring.
- Operational stabilisation
  - Observability, monitoring, and alerting are layered on top of the modernised components.
  - The legacy system is gradually shrunk or booted out of the “core‑processing” path.
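Building new components "around or beside" legacy modules is often done with a thin routing layer, an approach commonly called the strangler fig pattern. It is named here as one possible option, not something this article prescribes. The sketch below is a minimal illustration: `StranglerRouter`, both handler functions, and the context names are hypothetical.

```python
from typing import Callable, Dict

Handler = Callable[[dict], dict]


def legacy_monolith(request: dict) -> dict:
    # Hypothetical stand-in for the untouched legacy entry point.
    return {"served_by": "legacy", "order_id": request["order_id"]}


def modern_invoicing(request: dict) -> dict:
    # Hypothetical stand-in for a newly extracted component.
    return {"served_by": "modern", "order_id": request["order_id"]}


class StranglerRouter:
    """Send migrated bounded contexts to new code, everything else to legacy."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Handler] = {}

    def migrate(self, context: str, handler: Handler) -> None:
        # Register a modern handler for one bounded context.
        self._migrated[context] = handler

    def handle(self, context: str, request: dict) -> dict:
        # Fall back to the legacy handler when the context is not migrated yet.
        return self._migrated.get(context, self._legacy)(request)


router = StranglerRouter(legacy_monolith)
router.migrate("invoicing", modern_invoicing)

invoice_result = router.handle("invoicing", {"order_id": 1})
payment_result = router.handle("payments", {"order_id": 2})
```

Because unmigrated contexts keep flowing to the legacy handler, each bounded context can be moved, and moved back, independently.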

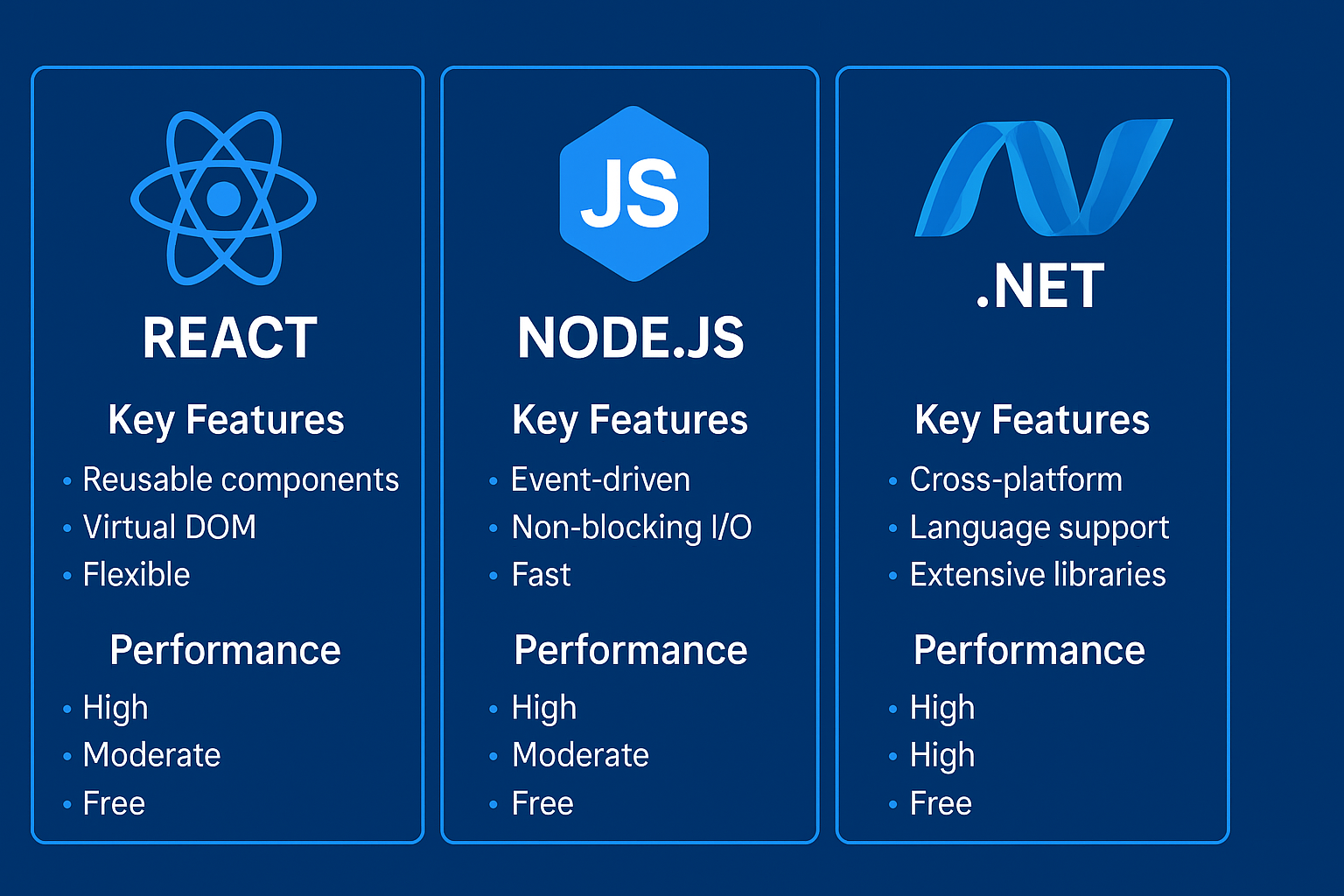

Tools and approaches commonly used

- Static‑analysis and code‑graph‑analysis tools that visualise dependencies and cyclic references.
- AI‑driven code‑analysis engines that detect repetitive patterns, code‑smells, and security‑bad‑practices.
- Automated refactoring agents (scripts and bots) that perform repetitive, low‑risk changes in bulk.
- Diff‑AI systems that compare old and new versions, predicting likely regressions and suggesting test‑cases.

For CTOs and product‑managers, the promise is clear: AI‑native refactoring does not replace engineers — it amplifies their impact and makes legacy‑modernisation feel less like a “forever‑project.”

Step‑by‑step: how to modernise legacy systems with AI‑native refactoring

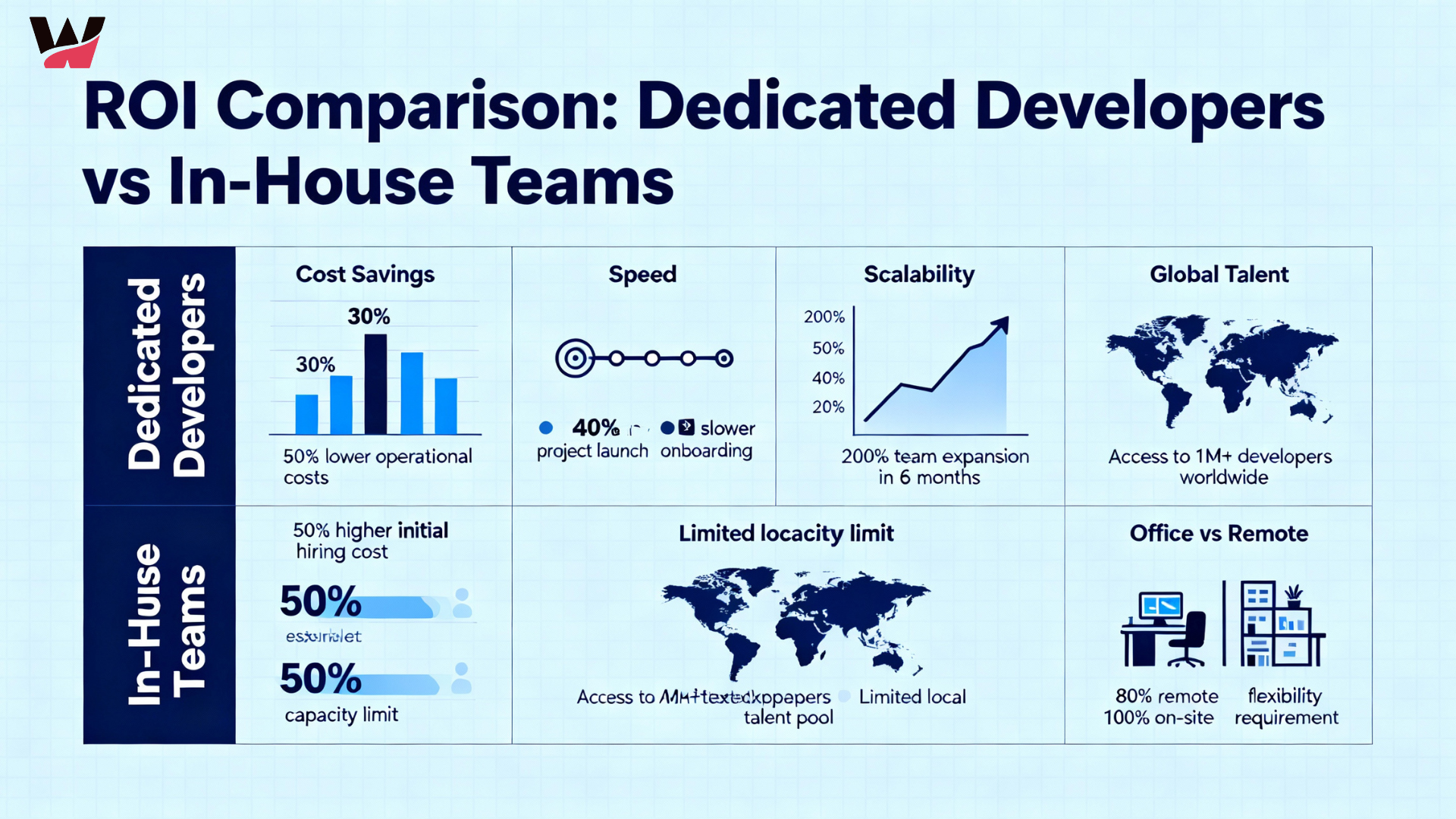

Step 1 – Define the business‑case and scope

Before code is even touched, AI‑native refactoring begins with strategy:

- Which systems are mission‑critical and which are “nice‑to‑fix.”
- What business‑outcomes are expected (e.g., lower downtime, faster feature‑delivery, lower maintenance‑cost).

At this stage, staff augmentation services often play a key role.

Organisations that lack internal experts in AI‑driven development and legacy modernisation may bring in AI‑native engineering teams via IT staff augmentation companies to:

- Assess the current state,
- Recommend refactoring strategies,
- and help define a phased‑migration roadmap.

Step 2 – Map the codebase and data‑flows

- The entire codebase is loaded into an AI‑driven code‑analysis platform.
- All endpoints, APIs, database schemas, and configuration‑files are catalogued.

Visual dependency graphs are created, so teams can:

- Identify “core” vs. “peripheral” modules,
- Locate hidden integration points that are not documented.

This mapping is often repeated at regular intervals so the AI‑tools can detect drift and unexpected changes.
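To make the mapping step concrete, here is a small sketch of how a dependency graph could be built and checked for cyclic references. It assumes the legacy code is Python (a real legacy stack would need a parser for its own language) and Python 3.9 or later. The module names and sources in `SOURCES` are invented for illustration.

```python
import ast


def build_import_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each module to the set of in-repo modules it imports."""
    graph: dict[str, set[str]] = {name: set() for name in sources}
    for name, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Import):
                targets = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                targets = [node.module]
            else:
                continue
            for target in targets:
                root = target.split(".")[0]
                # Ignore third-party and standard-library imports.
                if root in sources and root != name:
                    graph[name].add(root)
    return graph


def find_cycle(graph: dict[str, set[str]]):
    """Return one dependency cycle as a list of modules, or None."""
    WHITE, GREY, BLACK = 0, 1, 2
    colour = {node: WHITE for node in graph}
    stack: list[str] = []

    def visit(node: str):
        colour[node] = GREY
        stack.append(node)
        for dep in sorted(graph[node]):
            if colour[dep] == GREY:  # back edge: dep is already on the stack
                return stack[stack.index(dep):] + [dep]
            if colour[dep] == WHITE:
                cycle = visit(dep)
                if cycle:
                    return cycle
        stack.pop()
        colour[node] = BLACK
        return None

    for node in sorted(graph):
        if colour[node] == WHITE:
            cycle = visit(node)
            if cycle:
                return cycle
    return None


# Hypothetical three-module codebase.
SOURCES = {
    "billing": "import discounts\nimport ledger\n",
    "discounts": "from billing import rates\n",
    "ledger": "import json\n",
}

graph = build_import_graph(SOURCES)
cycle = find_cycle(graph)  # billing -> discounts -> billing
```

Re-running the same scan on a schedule and diffing the resulting graphs is one simple way to notice drift between mapping runs.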

Step 3 – AI‑driven technical‑debt and risk identification

AI‑native refactoring tools:

- Scan for code‑smells (e.g., god‑classes, long methods, duplicated blocks).
- Flag security‑related patterns (e.g., hard‑coded credentials, unvalidated inputs).
- Highlight areas with low test‑coverage or high‑churn‑but‑low‑documentation.
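As a rough illustration of what such a scan does under the hood, the sketch below checks Python source for two of the smells listed above: long functions and hard-coded credentials. The thresholds, the name hints, and the `LEGACY` sample are assumptions made for the example. Real tools use far richer heuristics.

```python
import ast

# Assumed heuristics for the demo; tune or replace for a real codebase.
CREDENTIAL_HINTS = ("password", "secret", "token", "api_key")
MAX_FUNCTION_LINES = 5  # deliberately tiny so the sample triggers it


def scan(source: str):
    """Return sorted (line, smell, name) findings for one source file."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > MAX_FUNCTION_LINES:
                findings.append((node.lineno, "long-function", node.name))
        elif isinstance(node, ast.Assign):
            value = node.value
            is_string = isinstance(value, ast.Constant) and isinstance(value.value, str)
            if not is_string:
                continue
            for target in node.targets:
                if isinstance(target, ast.Name) and any(
                    hint in target.id.lower() for hint in CREDENTIAL_HINTS
                ):
                    findings.append((node.lineno, "hard-coded-credential", target.id))
    return sorted(findings)


# Hypothetical legacy snippet.
LEGACY = '''DB_PASSWORD = "hunter2"

def apply_discount(order):
    total = order["total"]
    if order["vip"]:
        total = total * 0.9
    if total > 1000:
        total = total - 50
    return total
'''

findings = scan(LEGACY)
```

Here `findings` holds one hard-coded credential on line 1 and one long function starting on line 3.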

Engineers then:

- Prioritise “hot‑spots” by business‑impact and risk,
- Decide which modules will be re‑architected and which will be replaced outright.
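One simple way to rank hot-spots by business impact and risk is a weighted score. The formula below (churn multiplied by the untested share of the code, then by an impact rating) is an assumption chosen for illustration, not an established metric. The modules and their numbers are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    name: str
    business_impact: int    # 1 (low) to 5 (critical), set by product owners
    commits_last_year: int  # churn
    test_coverage: float    # 0.0 to 1.0


def risk(module: Module) -> float:
    # Assumed proxy: frequently changed code with little test coverage is risky.
    return module.commits_last_year * (1.0 - module.test_coverage)


def priority(module: Module) -> float:
    return module.business_impact * risk(module)


# Hypothetical inventory.
MODULES = [
    Module("user_profile", business_impact=3, commits_last_year=10, test_coverage=0.80),
    Module("discount_engine", business_impact=5, commits_last_year=40, test_coverage=0.10),
    Module("report_export", business_impact=2, commits_last_year=60, test_coverage=0.50),
]

ranked = sorted(MODULES, key=priority, reverse=True)
ranked_names = [module.name for module in ranked]
```

With these numbers the discount engine comes first, ahead of the more frequently changed but less critical report export.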

Step 4 – Design AI‑assisted refactoring patterns

Instead of guessing how to change thousands of files, AI‑native refactoring relies on pattern‑based transformation:

- Common patterns might include:
  - “Extract this shared logic into a service,”
  - “Replace this legacy data‑access layer with an ORM‑style abstraction,”
  - “Wrap these APIs in a facade so the legacy component can be swapped later.”
- These patterns are encoded as scripts or templates so they can be applied consistently across the codebase.
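A pattern "encoded as a script" can be as small as a syntax-tree transformer. The sketch below redirects every use of a legacy module to a facade, using Python's standard `ast` module (Python 3.9 or later for `ast.unparse`). The names `legacy_db` and `billing_facade` are hypothetical, and the example assumes the facade exposes the same method names.

```python
import ast


class RedirectToFacade(ast.NodeTransformer):
    """Rewrite `old_module.attr` to `new_module.attr` and count the changes."""

    def __init__(self, old_module: str, new_module: str) -> None:
        self.old_module = old_module
        self.new_module = new_module
        self.changes = 0

    def visit_Attribute(self, node: ast.Attribute) -> ast.AST:
        self.generic_visit(node)
        if isinstance(node.value, ast.Name) and node.value.id == self.old_module:
            replacement = ast.Name(id=self.new_module, ctx=node.value.ctx)
            node.value = ast.copy_location(replacement, node.value)
            self.changes += 1
        return node


def apply_pattern(source: str, old_module: str, new_module: str):
    """Return the rewritten source and the number of rewritten references."""
    transformer = RedirectToFacade(old_module, new_module)
    tree = transformer.visit(ast.parse(source))
    ast.fix_missing_locations(tree)
    return ast.unparse(tree), transformer.changes


# Hypothetical legacy snippet.
BEFORE = "rows = legacy_db.fetch('invoices')\nlegacy_db.close()\n"

after, changes = apply_pattern(BEFORE, "legacy_db", "billing_facade")
```

Note that `ast.unparse` drops comments and original formatting, and this sketch does not touch import statements. A production codemod would more likely use a formatting-preserving tool and still go through code review.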

Step 5 – Incremental execution and testing

Refactoring is done in small, safe increments:

- One bounded‑context at a time,
- One pattern at a time.

Automated tests are run before and after each change.

In AI‑driven environments, AI‑assisted testing tools:

- Generate boundary‑case test‑data,
- Predict likely regression points,
- and help ensure that refactored components still behave correctly.
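Running tests before and after each change is often done with characterisation tests: the legacy behaviour is treated as the reference, and the refactored code is compared against it on boundary inputs. The sketch below shows the idea with two hypothetical discount functions. The business rule, the threshold, and all names are invented for the example.

```python
from itertools import product


def legacy_discount(total_cents: int, is_vip: bool) -> int:
    # Hypothetical stand-in for untouched legacy logic.
    if is_vip:
        total_cents = total_cents * 90 // 100
    if total_cents > 100_000:
        total_cents -= 5_000
    return total_cents


def refactored_discount(total_cents: int, is_vip: bool) -> int:
    # Hypothetical refactored version that should behave identically.
    vip_rate = 90 if is_vip else 100
    discounted = total_cents * vip_rate // 100
    return discounted - 5_000 if discounted > 100_000 else discounted


def boundary_values(threshold: int) -> list[int]:
    """Values at, just below, and just above a known threshold, plus 0 and 1."""
    return [0, 1, threshold - 1, threshold, threshold + 1]


def find_mismatches(old, new, cases):
    """Return (args, old_result, new_result) for every case that differs."""
    return [
        (args, old(*args), new(*args))
        for args in cases
        if old(*args) != new(*args)
    ]


CASES = list(product(boundary_values(100_000), [False, True]))
mismatches = find_mismatches(legacy_discount, refactored_discount, CASES)
```

An empty `mismatches` list only shows agreement on the cases that were tried, so the quality of the generated boundary data matters as much as the comparison itself.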

Step 6 – Observability and governance

Once modernised components are live:

- Logs, metrics, and traces are standardised.
- Key business‑level indicators (e.g., transaction latency, error‑rate) are monitored.

AI‑driven dashboards can:

- Flag performance‑degradation after a refactoring sprint,
- Suggest a rollback, or a safe point to roll back to.
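A degradation check of this kind can be sketched as a comparison of latency percentiles before and after a release. The 20% tolerance, the nearest-rank percentile method, and the sample latencies below are all assumptions for illustration. A real dashboard would read from the monitoring system and look at more than one indicator.

```python
def percentile(samples: list[int], pct: int) -> int:
    """Nearest-rank percentile, using integer arithmetic to avoid float rounding."""
    ordered = sorted(samples)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # ceil(pct * n / 100)
    return ordered[rank - 1]


def evaluate(baseline_ms: list[int], candidate_ms: list[int], tolerance: float = 0.20) -> dict:
    """Recommend a rollback if p95 latency grew by more than `tolerance`."""
    baseline_p95 = percentile(baseline_ms, 95)
    candidate_p95 = percentile(candidate_ms, 95)
    degraded = candidate_p95 > baseline_p95 * (1 + tolerance)
    return {
        "baseline_p95": baseline_p95,
        "candidate_p95": candidate_p95,
        "action": "rollback" if degraded else "keep",
    }


# Hypothetical transaction latencies in milliseconds.
BASELINE = list(range(100, 120))           # before the refactoring sprint
SLOW_RELEASE = [ms + 40 for ms in BASELINE]
HEALTHY_RELEASE = [ms + 5 for ms in BASELINE]

slow_verdict = evaluate(BASELINE, SLOW_RELEASE)
healthy_verdict = evaluate(BASELINE, HEALTHY_RELEASE)
```

In this sample the slow release is flagged for rollback and the healthy one is kept. The output is a suggestion for a human to confirm, in line with keeping engineers in the loop.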

Benefits of AI‑driven refactoring for enterprises

Technical and business‑level benefits

- Lower technical debt and faster delivery
  - Clearer architecture and dependencies reduce “time‑to‑understand” for new developers.
  - Delivery‑velocity increases as the system becomes less fragile.
- Improved security and compliance posture
  - Known‑vulnerable patterns and outdated libraries are systematically identified and replaced.
  - Audit‑trails and observability make it easier to demonstrate compliance.
- Higher engineer‑productivity and morale
  - Working on a cleaner, better‑structured codebase is less stressful and more rewarding.
  - Onboarding is faster because dependencies and flows are better documented (often with AI‑generated docs).
- Scalability and future‑proofing
  - Modernised systems can be more easily integrated with AI‑driven features, cloud‑services, and SaaS products.
  - Growth‑driven changes (e.g., adding new markets, payment‑gateways, or regulatory‑flows) become less painful.

Imagine a legacy insurance‑policy platform that has been AI‑natively refactored.

New underwriting rules can be added in days instead of weeks, and every new developer can understand the core logic in a fraction of the time that was required before.

Risks and challenges (and how to mitigate them)

Common risks in AI‑native refactoring

- Over‑automation and loss of human judgment
  - Not every refactoring suggestion from an AI tool is safe or desirable.
  - Business‑logic nuances may be missed if engineers treat AI outputs as commands instead of guidance.
- Regression and unintended side‑effects
  - Aggressive pattern‑based refactoring can unexpectedly change behavior.
  - Business‑critical workflows may break if edge‑cases are overlooked.

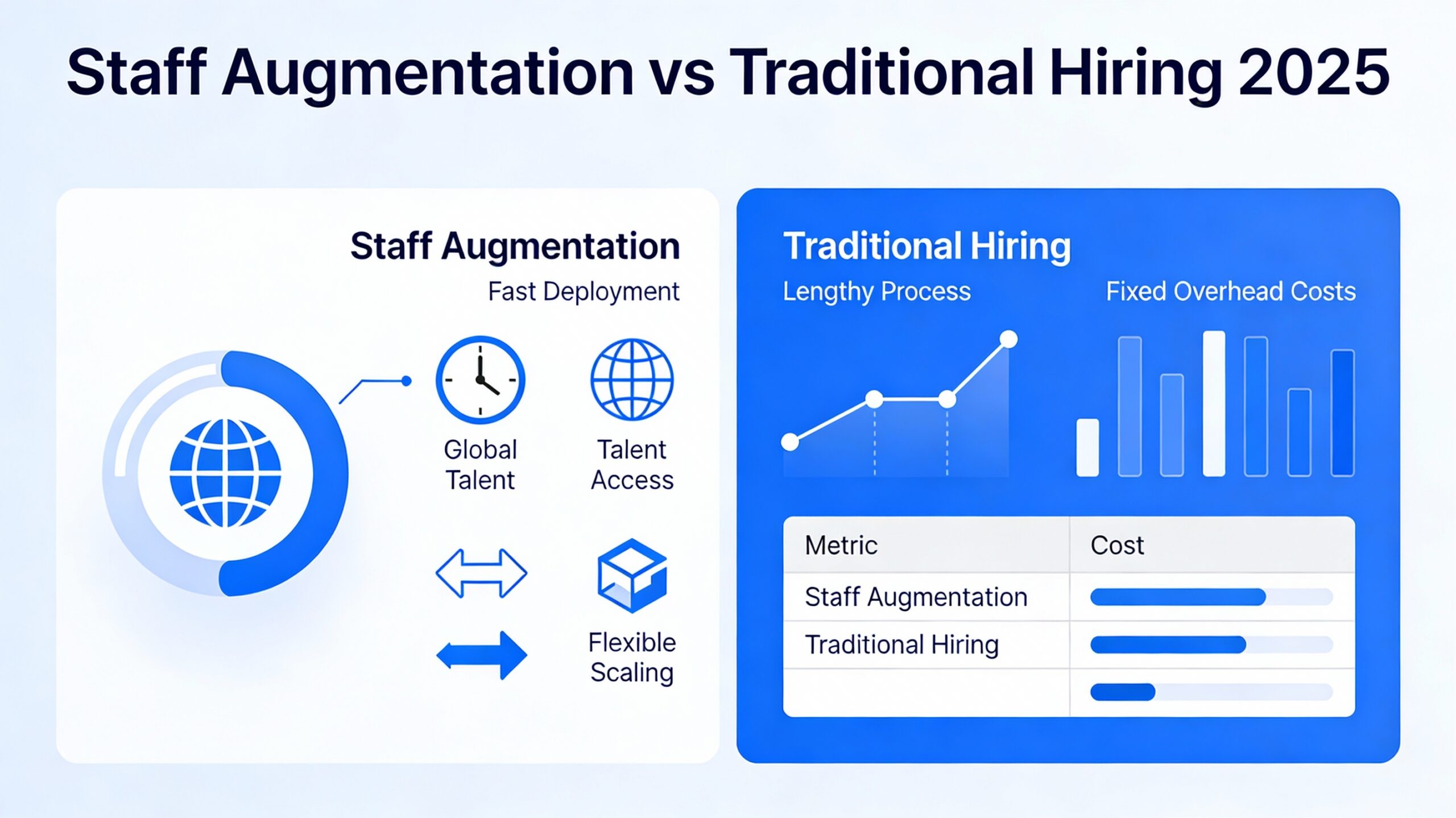

- Knowledge‑transfer and ownership gaps
  - If refactoring is done by temporary experts (via staff augmentation), permanent teams may not fully understand the new architecture.

How to mitigate these risks

- Keep humans in the loop
  - AI‑tools should be treated as “advisors,” not “autonomous‑refactoring bots.”
  - Critical changes should be peer‑reviewed and step‑by‑step‑approved.
- Invest in testing and observability
  - Comprehensive test‑suites, both unit and integration‑level, should be maintained.
  - Real‑time monitoring should be in place before and after refactoring.

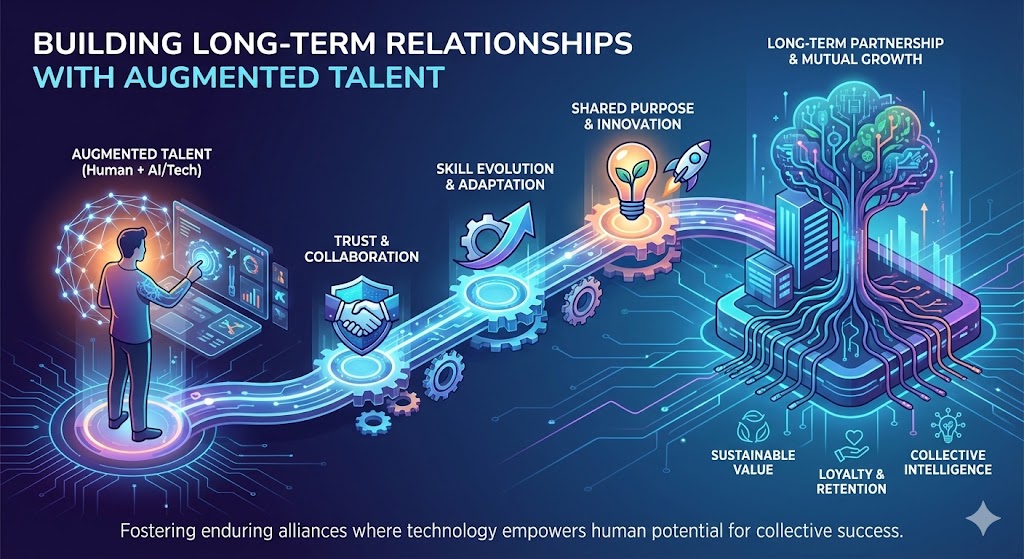

- Structure staff augmentation as a knowledge‑building engagement
  - When IT staff augmentation companies provide AI‑native engineers, the engagement should be designed to transfer skills and understanding to internal teams.
  - Pair‑programming, documentation‑sprints, and post‑sprint knowledge‑handover sessions are highly effective.

Case‑style examples and scenarios

Example 1 – Banking core‑processing legacy system

A commercial bank runs its core‑transaction‑processing on a 20‑year‑old monolith.

The business is:

- Unable to add new payment‑channels quickly,
- Constantly worried about outage‑risk during peak‑load periods.

An AI‑native refactoring engagement is launched:

- The AI‑analysis phase maps transaction‑lifecycle, data‑stores, and integrations.
- Technical‑debt hotspots (e.g., a single massive “transaction‑engine” class) are flagged.
- A phased‑refactoring plan is created, with high‑risk payment‑flows staying legacy‑compatible behind a modern‑API‑layer.

Over 18 months, the core‑system is gradually modernised, with AI‑driven tools:

- Helping to extract services,
- Guiding tests,
- and ensuring that all business‑level SLAs are preserved.

Example 2 – E‑commerce platform with legacy tax‑engine

An e‑commerce platform has a legacy tax‑computation engine that is hard to maintain.

New markets and VAT rules cannot be added quickly enough.

AI‑native refactoring is used to:

- Decompose the tax‑engine into a rule‑based service,
- Replace handwritten logic with a configurable rules‑engine schema,
- And keep the legacy implementation as a fallback for a short transition.

The result is:

- Faster time‑to‑market for new markets,
- Lower risk of tax‑calculation errors,
- and a cleaner integration surface for future AI‑driven pricing or promotional engines.
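To show what "configurable rules with a legacy fallback" could look like in the tax‑engine scenario, here is a minimal sketch. The rule table layout, the function names, and the behaviour of `legacy_tax` are assumptions made for the example. The rates are illustrative values, not tax guidance.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")

# Hypothetical rule table: (country, category) -> rate. In practice this
# would live in configuration, not in code.
TAX_RULES = {
    ("DE", "standard"): Decimal("0.19"),
    ("FR", "standard"): Decimal("0.20"),
}


def legacy_tax(country: str, category: str, net: Decimal) -> Decimal:
    # Hypothetical stand-in for the handwritten legacy engine.
    if country == "GB":
        return (net * Decimal("0.20")).quantize(CENT, rounding=ROUND_HALF_UP)
    raise ValueError(f"no tax logic for {country}/{category}")


def compute_tax(country: str, category: str, net: Decimal,
                rules=TAX_RULES, fallback=legacy_tax):
    """Return (tax, source), where source says which engine produced the tax."""
    rate = rules.get((country, category))
    if rate is None:
        # Market not migrated yet: defer to the legacy implementation.
        return fallback(country, category, net), "legacy"
    return (net * rate).quantize(CENT, rounding=ROUND_HALF_UP), "rules"


german_tax = compute_tax("DE", "standard", Decimal("100.00"))
british_tax = compute_tax("GB", "standard", Decimal("100.00"))
```

Returning the source alongside the amount makes it easy to measure how much traffic still depends on the legacy path, which helps decide when the fallback can be retired.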

These examples are not theoretical — they mirror real‑world legacy application modernisation initiatives that are being executed with AI‑native tools in 2026.

How staff augmentation services fit into AI‑native refactoring

Staff augmentation for AI‑driven development

AI‑native refactoring is not a one‑team‑task.

It requires:

- AI‑engineers and data‑scientists who can work with code‑analysis tools and AI‑driven refactoring patterns.
- Legacy‑modernisation specialists who understand old frameworks and design patterns.
- Cloud‑engineers to design and implement modern‑architecture foundations.

Most organisations cannot or should not hire all of this talent permanently.

That’s where staff augmentation services become essential.

Through IT staff augmentation companies, CTOs can:

- Temporarily scale up AI‑native engineers, refactoring‑specialists, and cloud‑migration experts.
- Embed them into existing product and engineering teams.
- Release or redeploy them once the platform is stabilised.

Staff augmentation process aligned with AI‑native refactoring

A well‑run staff augmentation process for AI‑driven legacy‑modernisation projects typically includes:

– Needs assessment and scope‑definition

- Business and product teams define:
  - Which systems are priority candidates for AI‑native refactoring.
  - What business‑outcomes are expected (e.g., reduced downtime, faster feature‑delivery).
- Engineering and architecture teams assess:
  - Current stack, risk‑surface, and data‑flow complexity.

– Role definition and sourcing

- Required skills are mapped:
  - AI‑engineers with experience in code‑analysis and AI‑driven refactoring,
  - Legacy‑modernisation experts,
  - Cloud‑architects and DevOps engineers.
- IT staff augmentation companies are engaged to:
  - Source and vet specialists with relevant experience.
  - Ensure they can work under the client’s governance and tools.

– Embedded delivery and AI‑native refactoring

- Augmented staff are embedded into product and engineering squads.
- They work alongside permanent teams to:
  - Run AI‑driven code‑analysis,
  - Design and execute refactoring patterns,
  - Build modern‑architecture foundations.

– Testing, validation, and go‑live

- AI‑driven testing tools help validate regressions and edge‑cases.
- Real‑world traffic‑testing and observability ensure that modernised components behave correctly.
