How to Ensure Code Quality When Working with Augmented Teams

Why Code Quality Becomes Critical With Augmented Teams

Because augmented professionals are temporary, there is a natural tendency to assume quality will suffer: they don’t understand the codebase, they lack institutional knowledge, they won’t be around to maintain what they build. Consequently, many organizations are skeptical about quality when using augmented talent. Therefore, explicitly addressing quality concerns is essential for successful augmentation.

However, the opposite is often true. When organizations implement strong quality practices, augmented teams can produce code of equal or higher quality than internal teams. Why? Because clear standards, explicit expectations, and rigorous review create accountability that is sometimes lacking in permanent teams. Furthermore, external professionals are often highly skilled and motivated to deliver excellent work that builds their reputation.

Additionally, code quality is not something that magically emerges—it results from deliberate practices, clear standards, and consistent enforcement. Consequently, organizations with strong quality cultures maintain high standards with augmented talent. Those without strong cultures struggle regardless of whether talent is permanent or external.

Significantly, the question is not “Can augmented teams produce quality code?” but rather “Does our organization have strong enough quality practices?” Thus, using augmented teams exposes whether quality practices are truly embedded.

The Foundation: Clear Quality Standards and Expectations

Because augmented professionals cannot read minds, explicitly defining quality expectations is foundational. Consequently, organizations should document: What does “good” look like for this codebase? What are non-negotiables? What are preferences?

Furthermore, quality standards should cover multiple dimensions:

Code Style: Naming conventions, formatting, structure. For example, “We use camelCase for variables, PascalCase for classes, and follow the Google style guide.” Therefore, clarity prevents subjective disagreements.

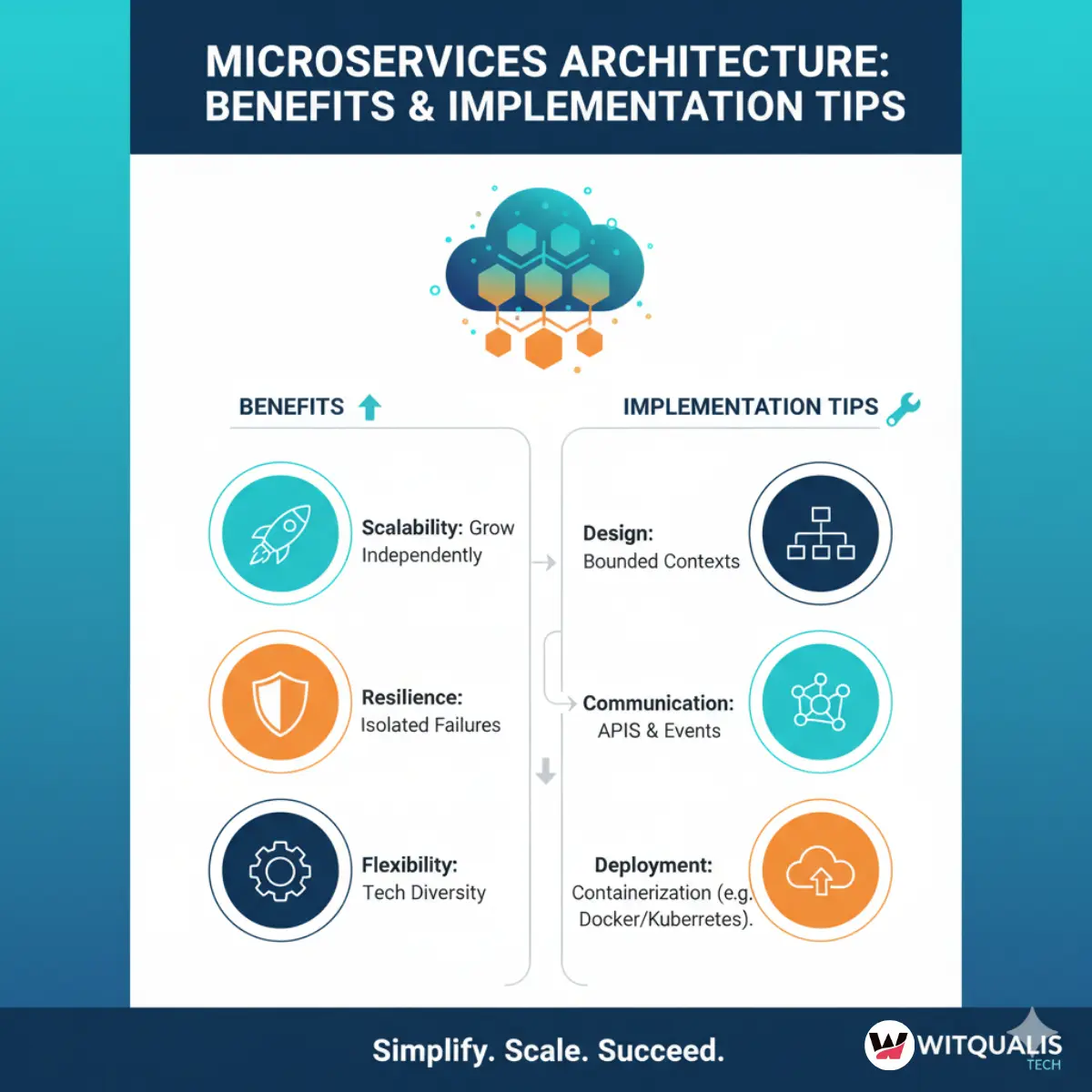

Architecture Principles: How should systems be designed? For example, “We favor microservices, use event-driven architecture for integrations, and separate concerns between services.” Therefore, augmented developers understand the architectural philosophy.

Testing Standards: How much testing is required? For example, “All business logic must have unit tests (>80% coverage), all integrations must have integration tests, all APIs must have contract tests.” Therefore, testing expectations are explicit.

Performance Expectations: What performance is acceptable? For example, “API endpoints must respond within 200ms under normal load, database queries must complete within 100ms.” Therefore, performance is a defined requirement, not an afterthought.

Security Standards: What security practices are mandatory? For example, “All user inputs must be sanitized, all secrets must use environment variables, all external APIs must use authentication.” Therefore, security is built-in by default.

Documentation Requirements: What documentation must accompany code? For example, “Complex logic requires inline comments, all public APIs require documentation, all architectural decisions require decision records.” Therefore, knowledge is captured.

Moreover, these standards should be codified in documents that are accessible to all team members. Therefore, augmented professionals can reference standards without constant questions.

Additionally, standards should be enforced through tooling where possible. For example, linters, code formatters, and test coverage tools automate enforcement. Therefore, enforcement becomes objective rather than subjective.

Significantly, clear standards are the foundation of consistent quality. Thus, investing in standard definition pays enormous dividends.

Automated Quality Checks: The First Line of Defense

Because manual review is slow, expensive, and inconsistent, automated quality checks should be the first line of defense. Consequently, organizations should invest in tooling that catches quality issues before human review even occurs.

Continuous Integration and Automated Testing

Because every code change should be tested before merging, strong CI/CD pipelines are essential. Consequently, organizations should implement:

Automated test execution: Every pull request automatically runs all tests. If tests fail, code cannot merge. Therefore, broken changes are caught immediately.

Code coverage analysis: Tools measure what percentage of code is tested. Organizations should define minimum acceptable coverage (typically 70–80%) and fail builds if coverage drops. Therefore, testing discipline is enforced.

Performance testing: Automated tests should verify that changes don’t degrade performance. For example, “If this endpoint’s latency increases by >10%, the build fails.” Therefore, performance regressions are caught.

Security scanning: Tools analyze code for security vulnerabilities—SQL injection, hardcoded secrets, unsafe dependencies. Therefore, security issues are caught before production.

Moreover, CI/CD pipelines should run consistently for all code, whether from permanent or augmented developers. Therefore, there should be no special exceptions for external talent.

Additionally, failing builds should block merges. For example, even if a senior engineer wants to merge code with failing tests, the system prevents it. Therefore, standards are enforced uniformly.
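The checks described in this section can be combined into a single merge gate. The following is a minimal sketch, not a real CI configuration: the `BuildReport` shape, the `gate_failures` function, and the threshold constants are hypothetical names, the thresholds are borrowed from the examples in the text, and the baseline latency is assumed to be greater than zero.

```python
from dataclasses import dataclass


@dataclass
class BuildReport:
    """Results a CI job might collect for one pull request (hypothetical shape)."""
    tests_failed: int
    coverage_percent: float
    baseline_latency_ms: float  # assumed to be > 0
    current_latency_ms: float


# Thresholds taken from the examples in the text; tune them per codebase.
MIN_COVERAGE_PERCENT = 80.0
MAX_LATENCY_INCREASE = 0.10  # fail if latency grows by more than 10%


def gate_failures(report: BuildReport) -> list[str]:
    """Return the reasons a build should be blocked (empty list means pass)."""
    failures = []
    if report.tests_failed > 0:
        failures.append(f"{report.tests_failed} test(s) failed")
    if report.coverage_percent < MIN_COVERAGE_PERCENT:
        failures.append(
            f"coverage {report.coverage_percent:.1f}% is below {MIN_COVERAGE_PERCENT:.1f}%"
        )
    increase = (
        report.current_latency_ms - report.baseline_latency_ms
    ) / report.baseline_latency_ms
    if increase > MAX_LATENCY_INCREASE:
        failures.append(f"latency increased by {increase:.0%}")
    return failures


report = BuildReport(
    tests_failed=0,
    coverage_percent=76.5,
    baseline_latency_ms=120.0,
    current_latency_ms=150.0,
)
for reason in gate_failures(report):
    print("BLOCKED:", reason)
```

Because the gate only looks at the report, it applies the same rules to every author, which is the point of the uniform enforcement described above.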

Significantly, strong CI/CD is the equalizer: it ensures quality regardless of who wrote the code. Thus, investment in CI/CD infrastructure pays enormous dividends.

Static Analysis and Linting

Because many style and structural issues can be caught automatically, tools that perform static analysis should be integrated into development workflows. Consequently, developers get immediate feedback on style violations or potential bugs before code review even occurs.

Furthermore, these tools should be configured to match organizational standards. For example, ESLint for JavaScript should be configured with company conventions. Therefore, enforcement becomes automated rather than manual.

Additionally, violations should fail builds or block commits. Therefore, developers are forced to comply before proceeding.

Moreover, these tools should provide clear error messages and suggestions for fixes. Therefore, developers (especially augmented professionals new to the codebase) get guidance on how to meet standards.
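To illustrate what a clear error message with a suggested fix looks like, here is a small sketch of a custom check built on Python's standard `ast` module. It is not how ESLint or any specific linter works internally; the `check_class_names` and `to_pascal_case` helpers are hypothetical, and the PascalCase rule is taken from the style example earlier in the article.

```python
import ast
import re

PASCAL_CASE = re.compile(r"^[A-Z][A-Za-z0-9]*$")


def to_pascal_case(name: str) -> str:
    """Suggest a PascalCase spelling for a snake_case or lowercase name."""
    return "".join(part[:1].upper() + part[1:] for part in name.split("_") if part)


def check_class_names(source: str) -> list[str]:
    """Return one message per class whose name is not PascalCase."""
    messages = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ClassDef) and not PASCAL_CASE.match(node.name):
            messages.append(
                f"line {node.lineno}: class '{node.name}' should be PascalCase; "
                f"consider '{to_pascal_case(node.name)}'"
            )
    return messages


sample = "class order_processor:\n    pass\n\nclass Invoice:\n    pass\n"
for message in check_class_names(sample):
    print(message)
```

The message names the location, the rule, and a concrete replacement, so a developer who has never seen the style guide can still fix the violation without asking anyone.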

Significantly, static analysis moves quality enforcement from manual review to automation. Thus, it dramatically reduces review time and improves consistency.

Dependency Management and Vulnerability Scanning

Because external dependencies are a source of security vulnerabilities and technical debt, managing them carefully is essential. Consequently, tools should automatically scan dependencies for known vulnerabilities and alert developers.

Furthermore, organizations should define policies around dependency updates. For example: “Security updates must be applied immediately; minor version updates should be applied monthly; major version updates require testing and approval.” Therefore, dependency management is systematic.
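A policy like this is easier to apply consistently when it is written as a rule rather than remembered. The sketch below assumes plain `MAJOR.MINOR.PATCH` version strings and treats patch releases the same as minor ones, which the example policy does not spell out; `update_policy` and `parse_version` are hypothetical helpers, not part of any dependency tool.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Parse a 'MAJOR.MINOR.PATCH' string; pre-release tags are not handled."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def update_policy(current: str, candidate: str, fixes_vulnerability: bool) -> str:
    """Map a proposed upgrade to the policy bucket described in the text."""
    if fixes_vulnerability:
        return "apply immediately"
    cur, new = parse_version(current), parse_version(candidate)
    if new <= cur:
        return "no update needed"
    if new[0] > cur[0]:
        return "requires testing and approval"
    # Assumption: patch releases are batched together with minor releases.
    return "apply in monthly batch"


print(update_policy("1.4.2", "1.5.0", fixes_vulnerability=False))
print(update_policy("1.4.2", "2.0.0", fixes_vulnerability=False))
print(update_policy("1.4.2", "1.4.3", fixes_vulnerability=True))
```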

Moreover, license scanning ensures that dependencies comply with organizational standards. For example, “We cannot use GPL-licensed dependencies.” Therefore, compliance is enforced.
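At its core, a license check compares each dependency's declared license against a deny list. The following sketch assumes the licenses are already available as SPDX identifiers; the package names, the `DENIED_LICENSES` set, and the `license_violations` function are illustrative only, and a real scanner would also have to resolve transitive dependencies.

```python
# Example deny list using SPDX identifiers; the actual list is a policy decision.
DENIED_LICENSES = {"GPL-2.0-only", "GPL-3.0-only", "GPL-3.0-or-later"}


def license_violations(dependencies: dict[str, str]) -> list[str]:
    """Return the names of dependencies whose license is on the deny list."""
    return sorted(
        name for name, license_id in dependencies.items()
        if license_id in DENIED_LICENSES
    )


# Hypothetical dependency manifest: package name -> declared license.
declared = {
    "fast-json": "MIT",
    "chart-kit": "GPL-3.0-only",
    "auth-helpers": "Apache-2.0",
}
print(license_violations(declared))
```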

Significantly, automated dependency management prevents security and licensing issues from slipping through. Thus, it is an essential quality practice.

Code Review Practices Optimized for Augmented Teams

Because code review is where much quality control happens, implementing code review practices that work well with augmented teams is critical. Consequently, organizations should establish clear review processes.

Clear Review Criteria and Checklists

Because review quality depends on reviewers understanding what to look for, explicit review checklists are valuable. Consequently, organizations should define: What should reviewers check?

For example, a code review checklist might include:

- Does the code follow our style and architecture standards?
- Are there tests? Do they cover the happy path and error cases?
- Are performance implications considered?
- Are there security concerns?
- Is the code understandable? Are complex sections documented?
- Are there obvious bugs or logic errors?
- Does this integrate well with surrounding code?

Furthermore, checklists should be specific to the organization and codebase. Therefore, reviewers are guided on what matters most.

Moreover, checklists reduce cognitive load on reviewers. Therefore, reviews are more consistent and thorough.

Significantly, structured review processes improve quality. Thus, investment in review practices pays dividends.

Pairing Permanent and Augmented Team Members

Because augmented professionals are new to the codebase, pairing them with permanent team members during complex work improves quality and speeds learning. Consequently, organizations should assign mentors or pair partners to augmented developers working on critical systems.

Furthermore, pairing provides multiple benefits:

Knowledge transfer: Permanent team members explain codebase context, architectural decisions, and why things were built a certain way. Therefore, augmented professionals understand not just “what” but “why.”

Quality improvement: Two people reviewing decisions in real-time catch issues earlier than individual work followed by review. Therefore, quality improves.

Faster ramping: Augmented professionals ramp faster when guided by experienced mentors. Therefore, productivity increases.

Relationship building: Working together builds relationships and trust. Therefore, team cohesion improves.

Moreover, pairing should be particularly intensive during the first week, then taper as the person becomes comfortable. Therefore, investment is proportional to need.

Significantly, strategic pairing dramatically improves augmented team outcomes. Thus, it is worth the investment.

Synchronous Code Review With Time Zone Overlap

Because asynchronous code review can create delays and miscommunications, synchronous review with real-time discussion is preferable when possible. Consequently, organizations should schedule review sessions during the hours when reviewer and author time zones overlap.

Furthermore, synchronous review has advantages:

Immediate feedback: Instead of waiting hours for written comments, feedback is immediate and interactive. Therefore, issues are resolved faster.

Clarification: If a reviewer doesn’t understand something, they can ask immediately. Therefore, miscommunications are resolved.

Learning: Discussing decisions in real-time provides learning opportunities. Therefore, both reviewer and author learn.

Relationship: Face-to-face (or video) discussion builds relationships better than written comments. Therefore, team cohesion improves.

Moreover, for critical code, synchronous review is worth the timezone coordination effort. For routine changes, asynchronous review may be acceptable.

Significantly, high-quality review benefits from real-time discussion when possible. Thus, time zone coordination is worth the investment for critical work.

Clear Feedback and Actionable Comments

Because augmented professionals are new to the codebase and team, review feedback should be especially clear and constructive. Consequently, reviewers should provide:

Specific comments: Instead of “This is not good,” say “This variable name is unclear because it doesn’t indicate what data it holds. Consider naming it ‘userAuthToken’ instead of ‘token.’” Therefore, the author understands exactly what to change.

Explanation: Explain why something is a concern. For example, “This pattern could cause race conditions because of concurrent access. We use a lock here instead.” Therefore, the author learns the reasoning.

Suggestions: Offer specific improvements. For example, “Consider using Promise.all() here so the independent requests run concurrently instead of one after another.” Therefore, the author has concrete direction.

Encouragement: Acknowledge good work. For example, “I like how you structured this error handling—it’s clear and handles edge cases well.” Therefore, the author feels appreciated.
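A review comment such as the race-condition example under Explanation is more useful when the reviewer can point at the pattern the team expects. The sketch below shows one common version of that pattern using Python's standard `threading.Lock`; the `HitCounter` class and `run_workers` helper are hypothetical and exist only to demonstrate the idea.

```python
import threading


class HitCounter:
    """Counter that stays correct when several threads increment it."""

    def __init__(self) -> None:
        self._count = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # The read-modify-write below must not interleave with another thread;
        # without the lock, two threads can read the same value and lose an update.
        with self._lock:
            self._count += 1

    @property
    def count(self) -> int:
        with self._lock:
            return self._count


def run_workers(counter: HitCounter, workers: int, increments: int) -> int:
    """Increment the counter from several threads and return the final count."""

    def work() -> None:
        for _ in range(increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter.count


print(run_workers(HitCounter(), workers=8, increments=10_000))  # prints 80000
```

Linking to a snippet like this in the review comment gives the author both the reasoning and a concrete target to change the code toward.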

Moreover, feedback should be proportional to the issue’s severity. Major architectural issues require detailed discussion. Minor style issues might just need a pointer to style guides.

Significantly, constructive, specific feedback accelerates learning and improves code quality. Thus, feedback quality matters enormously.

Knowledge Documentation and Codebase Health

Because augmented professionals cannot access institutional knowledge through osmosis, documenting knowledge is essential. Consequently, organizations should invest in documentation that helps augmented professionals quickly understand systems.

Architecture Decision Records (ADRs)

Because understanding why decisions were made is as important as understanding what was built, maintaining architecture decision records is valuable. Consequently, organizations should document major architectural decisions: What decision was made? What were the alternatives? Why was this chosen? What are the implications?
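The four questions above map naturally onto a small template. The sketch below is one possible shape, not a standard ADR format; the `DecisionRecord` class, its field names, and the sample content are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """One architecture decision, answering the four questions in the text."""
    title: str
    decision: str            # What decision was made?
    alternatives: list[str]  # What were the alternatives?
    rationale: str           # Why was this chosen?
    implications: list[str] = field(default_factory=list)  # What follows from it?

    def render(self) -> str:
        """Render the record as plain text for the team's documentation."""
        lines = [f"ADR: {self.title}", "", "Decision", self.decision, ""]
        lines += ["Alternatives considered"]
        lines += [f"- {alternative}" for alternative in self.alternatives]
        lines += ["", "Why this was chosen", self.rationale, "", "Implications"]
        lines += [f"- {implication}" for implication in self.implications]
        return "\n".join(lines)


# Illustrative content only.
record = DecisionRecord(
    title="Use events for service integration",
    decision="Services publish events instead of calling each other directly.",
    alternatives=["Synchronous RPC between services"],
    rationale="Publishers do not need to know which services consume an event.",
    implications=["Consumers must tolerate duplicate and out-of-order events."],
)
print(record.render())
```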

Furthermore, ADRs become onboarding material for augmented professionals. For example, “Why does this system use event-driven architecture instead of RPC?” is answered in the ADR. Therefore, augmented professionals quickly understand the philosophy.

Moreover, ADRs prevent re-litigation of decisions. For example, “Should we switch to a different database?” is answered by reviewing why the current one was chosen. Therefore, valuable discussion is preserved.

Significantly, ADRs are valuable knowledge assets. Thus, maintaining them is an investment in organizational capability.

Clear Code Comments and Documentation

Because complex logic is difficult to understand without context, good inline comments and documentation are essential. Consequently, code comments should explain “why,” not just “what.”

For example:

- Bad comment: // Calculate the total (the code already shows it’s a total)
- Good comment: // Calculate total including tax, but exclude shipping cost because it's handled separately in checkout (explains the reasoning)

Furthermore, public APIs and complex functions should have documentation. For example, JSDoc for JavaScript, docstrings for Python. Therefore, developers quickly understand interfaces.
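Put together, a documented function with a "why" comment might look like the following Python sketch. The `order_total` function and its parameters are hypothetical, modeled on the tax and shipping example above.

```python
def order_total(item_prices: list[float], tax_rate: float) -> float:
    """Return the order total including tax but excluding shipping.

    Args:
        item_prices: Price of each item in the order, before tax.
        tax_rate: Tax as a fraction, for example 0.08 for 8%.

    Returns:
        The taxed subtotal, rounded to two decimal places.
    """
    subtotal = sum(item_prices)
    # Shipping is deliberately left out: it is handled separately in checkout.
    return round(subtotal * (1 + tax_rate), 2)


print(order_total([10.00, 5.50], tax_rate=0.08))
```

The docstring tells a caller what the function promises, while the inline comment records a decision that the code alone cannot explain.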

Moreover, README files should explain how systems work, how to run them, and how to contribute. Therefore, augmented professionals can get started without constant questions.

Significantly, good documentation dramatically reduces onboarding time for augmented professionals. Thus, investment in documentation pays dividends.

Regular Architecture Reviews

Because systems evolve and can drift from their original design, regular architecture reviews keep systems clean. Consequently, organizations should periodically (quarterly or semi-annually) review major systems with augmented and permanent team members.

Furthermore, these reviews should address:

- Are we still adhering to our architectural principles?
- Are there areas where debt has accumulated?
- Should we refactor anything?
- Are there performance or security concerns?

Moreover, involving augmented professionals in reviews gives them perspective on the system and gives the team a fresh viewpoint. Therefore, reviews are more thorough.

Significantly, regular reviews maintain system health and prevent architectural drift. Thus, investment in reviews is preventive maintenance.

Clear Accountability and Ownership Models

Because code quality depends on clear ownership and accountability, explicitly defining who is responsible for what is important. Consequently, organizations should establish clear ownership models.

Code Ownership and Review Authority

Because decisions about code quality ultimately rest with someone, clear ownership prevents ambiguity. Consequently, organizations should define: Who owns each system? Who can approve merges?

Furthermore, ownership should typically rest with permanent team members who will maintain the system long-term. Augmented professionals contribute under their guidance. Therefore, permanent owners maintain standards.

Moreover, review authority should be clear. For example: “Merges to this system require approval from the system owner or a senior engineer.” Therefore, standards are enforced.
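The example rule can be expressed as a simple check. This is a sketch of the logic only; in practice the rule would usually live in the code hosting platform's branch protection settings. The `can_merge` function and the names used below are hypothetical.

```python
def can_merge(approvers: set[str], system_owner: str, senior_engineers: set[str]) -> bool:
    """True if the system owner or at least one senior engineer has approved."""
    return system_owner in approvers or bool(approvers & senior_engineers)


seniors = {"priya", "tomas"}

# Approved only by another contributor: not enough.
print(can_merge({"alex"}, system_owner="dana", senior_engineers=seniors))
# Approved by the system owner: allowed.
print(can_merge({"alex", "dana"}, system_owner="dana", senior_engineers=seniors))
```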

Significantly, clear ownership prevents quality from becoming “no one’s responsibility.” Thus, defining ownership is essential.

Escalation Paths for Quality Issues

Because sometimes quality disagreements arise, clear escalation paths prevent them from blocking progress. Consequently, organizations should define: If a reviewer and author disagree about code quality, who makes the final decision?

Furthermore, escalation paths might be: “If disagreement persists, escalate to the tech lead.” Therefore, disputes are resolved quickly rather than blocking work.

Moreover, escalation decisions should be documented and communicated. Therefore, learning occurs and future disagreements are prevented.

Significantly, clear escalation paths keep code flowing while maintaining standards. Thus, having them prevents stagnation.

Metrics and Monitoring of Code Quality

Because what gets measured gets managed, tracking code quality metrics helps maintain standards. Consequently, organizations should monitor metrics like test coverage, defect rates, and performance.

Test Coverage Metrics

Because tests are foundational to quality, monitoring test coverage is essential. Consequently, organizations should track: What percentage of code is covered by tests?

Moreover, coverage should be monitored by component or service. Therefore, organizations see which parts are well-tested and which have gaps. Furthermore, trends should be monitored: Is coverage improving or declining?
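Per-component coverage and its trend can be derived from the numbers most coverage tools already report. The sketch below assumes a short history of coverage percentages per component; the component names, the readings, and the 0.5-point tolerance are made up for illustration.

```python
def coverage_percent(covered_lines: int, total_lines: int) -> float:
    """Percentage of lines executed by tests; 0.0 for an empty component."""
    return 100.0 * covered_lines / total_lines if total_lines else 0.0


def coverage_trend(history: list[float], tolerance: float = 0.5) -> str:
    """Compare the latest coverage reading with the earliest one in the window."""
    if len(history) < 2:
        return "insufficient data"
    change = history[-1] - history[0]
    if change > tolerance:
        return "improving"
    if change < -tolerance:
        return "declining"
    return "stable"


# Hypothetical readings, oldest first.
snapshots = {
    "billing": [82.0, 81.5, 78.0],
    "search": [64.0, 68.5, 71.0],
}
for component, history in snapshots.items():
    print(component, f"{history[-1]:.1f}%", coverage_trend(history))
```

In this sample, billing still has the higher coverage but is trending down, while search is lower but improving, which is exactly the distinction a single organization-wide number would hide.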

Significantly, coverage trends indicate team health. Rising coverage suggests commitment to quality; declining coverage suggests corners being cut.

Defect Rates and Bug Escape

Because some bugs escape to production despite review, tracking which code has high defect rates reveals quality issues. Consequently, organizations should analyze: Which components have high defect rates? Which developers’ code has more bugs?

Furthermore, this data should drive action. For example, if a particular system has high defect rates, it might need refactoring or additional testing. If certain developers consistently ship bugs, they might need additional mentoring.

Moreover, comparing defect rates between augmented and permanent developers can reveal whether quality is actually suffering. For example, if augmented developers have similar or lower defect rates on comparable work, that is strong evidence quality isn’t compromised.
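One way to make this comparison concrete is to normalize escaped defects by the number of merged changes per group. The sketch below assumes each change record carries a group label and a count of defects later traced back to it; the record shape and the sample data are hypothetical, and with small samples the difference between groups may not be meaningful.

```python
from collections import defaultdict


def defect_rates(changes: list[dict]) -> dict[str, float]:
    """Escaped defects per 100 merged changes, grouped by the 'group' key."""
    merged: dict[str, int] = defaultdict(int)
    defects: dict[str, int] = defaultdict(int)
    for change in changes:
        merged[change["group"]] += 1
        defects[change["group"]] += change["escaped_defects"]
    return {group: 100.0 * defects[group] / merged[group] for group in merged}


# Hypothetical history: 4 changes by permanent staff, 5 by augmented staff.
history = (
    [{"group": "permanent", "escaped_defects": d} for d in (0, 1, 0, 0)]
    + [{"group": "augmented", "escaped_defects": d} for d in (0, 0, 1, 0, 0)]
)
for group, rate in defect_rates(history).items():
    print(f"{group}: {rate:.1f} escaped defects per 100 changes")
```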

Significantly, data-driven quality management is far more effective than assumptions. Thus, measuring and analyzing is essential.

Performance Monitoring

Because performance regressions are common, monitoring performance metrics helps catch issues before customers experience problems. Consequently, organizations should track: What are the performance characteristics of critical systems? Are they degrading over time?

Furthermore, performance monitoring should compare commits. For example, “Did this change degrade performance?” Therefore, performance is explicitly managed.

Moreover, performance should be part of the definition of done. For example, “This feature must have <100ms latency.” Therefore, performance expectations are explicit.
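Comparing commits usually means comparing a latency percentile rather than an average, since a few slow requests are what customers notice. The sketch below uses a nearest-rank 95th percentile and the 100 ms budget from the example above; the `percentile` and `check_latency` helpers and the sample measurements are hypothetical.

```python
def percentile(samples_ms: list[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of latency samples."""
    ordered = sorted(samples_ms)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct% of n
    return ordered[max(rank, 1) - 1]


def check_latency(baseline_ms: list[float], candidate_ms: list[float],
                  budget_ms: float = 100.0) -> dict:
    """Compare p95 latency before and after a change, and against the budget."""
    base = percentile(baseline_ms, 95)
    cand = percentile(candidate_ms, 95)
    return {
        "baseline_p95": base,
        "candidate_p95": cand,
        "within_budget": cand < budget_ms,
        "regressed": cand > base,
    }


# Hypothetical measurements in milliseconds, 20 requests per commit.
before = [40.0] * 18 + [60.0, 90.0]
after = [42.0] * 18 + [110.0, 130.0]
print(check_latency(before, after))
```

Here the typical request barely changed, yet the p95 moved from 60 ms to 110 ms and crossed the budget, so the change would not meet the definition of done.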

Significantly, proactive performance monitoring prevents customer-facing issues. Thus, investment in monitoring is worthwhile.

Building a Quality Culture With Augmented Teams

Because ultimately code quality depends on culture and values, intentionally building a quality culture is essential. Consequently, organizations should:

Value quality explicitly: In meetings, documentation, and conversations, emphasize that quality matters. Therefore, it becomes a shared value.

Celebrate quality work: Acknowledge when code is particularly well-written, when someone goes above and beyond on testing, or when bugs are caught through good review. Therefore, quality is rewarded.

Invest in quality: Allocate time for refactoring, testing, documentation. Don’t let quality become an afterthought. Therefore, quality is treated as a priority.

Learn from failures: When bugs escape or quality issues occur, investigate and learn rather than blame. Therefore, continuous improvement becomes the culture.
